Why Teams Replace Few-Shot Example Management with Logic

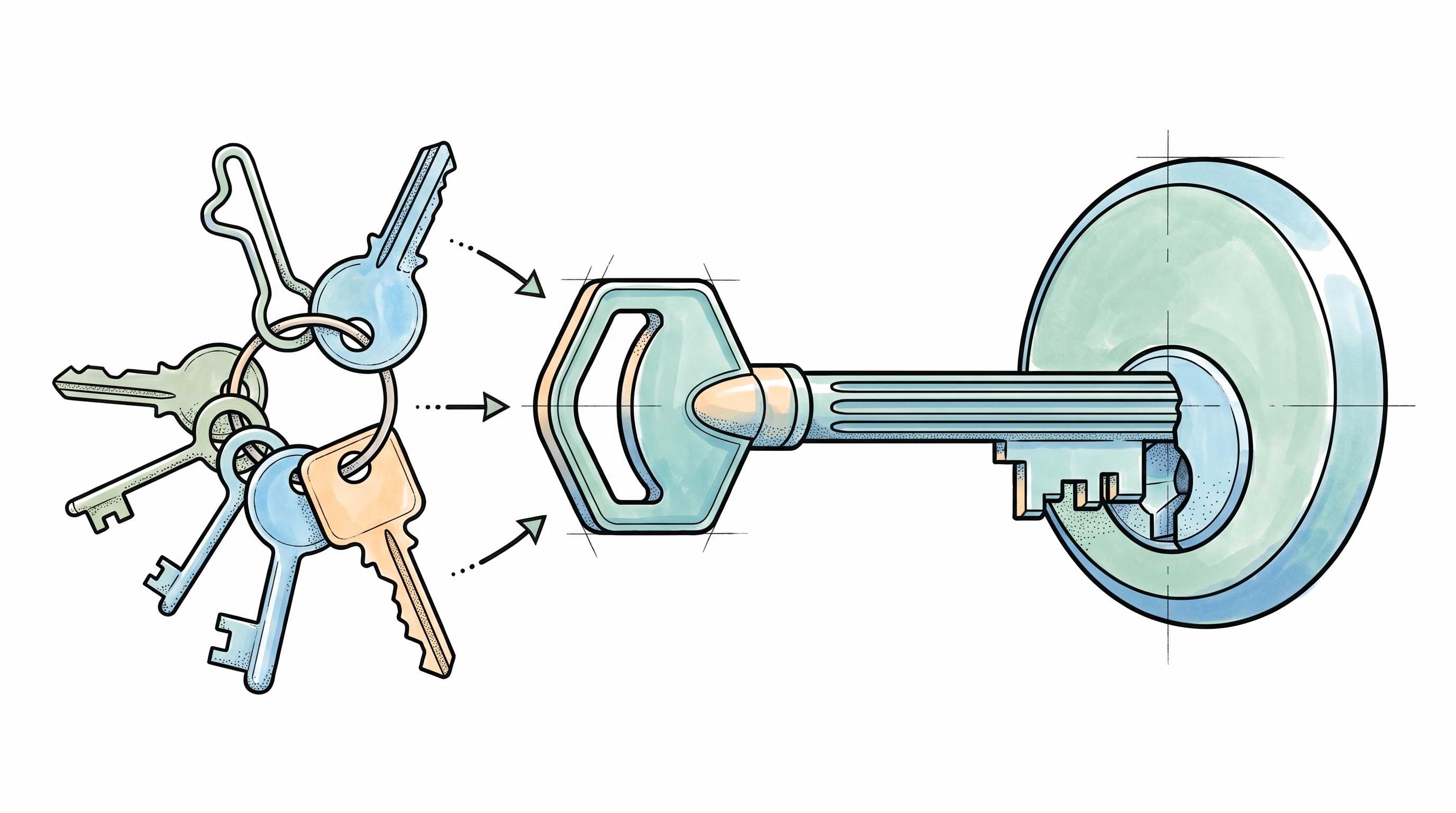

Every backend engineer knows what it takes to manage database migrations: version the schema, test against staging data, roll back if something breaks. The discipline is mature, the tooling standardized, the failure modes well-documented. Few-shot prompting in production LLM applications demands similar rigor, but the equivalent tooling and discipline don't exist yet. Example selection, ordering, formatting, and count each independently affect output quality, and none of them has standard infrastructure for versioning, testing, or rollback.

The gap becomes visible quickly. A few-shot prompt that classifies support tickets correctly in development starts producing inconsistent results at scale because production inputs don't match the distribution of your curated examples. You add examples to cover edge cases, and performance on straightforward inputs degrades. A model provider updates their weights, and a significant portion of your prompts behave differently without a single change on your side. What looked like a solved problem in a notebook becomes an ongoing engineering burden that competes with core product work for the same limited bandwidth. Logic's spec-driven platform sidesteps this entirely: instead of managing examples, teams write a natural language spec and Logic handles the infrastructure that few-shot prompting forces teams to build themselves.

Four Few-Shot Variables, One Prompt

Few-shot prompting looks deceptively simple: include a handful of input-output examples in your prompt, and the model learns the pattern. In practice, four independent dimensions of few-shot example management each introduce their own failure modes, and they compound each other.

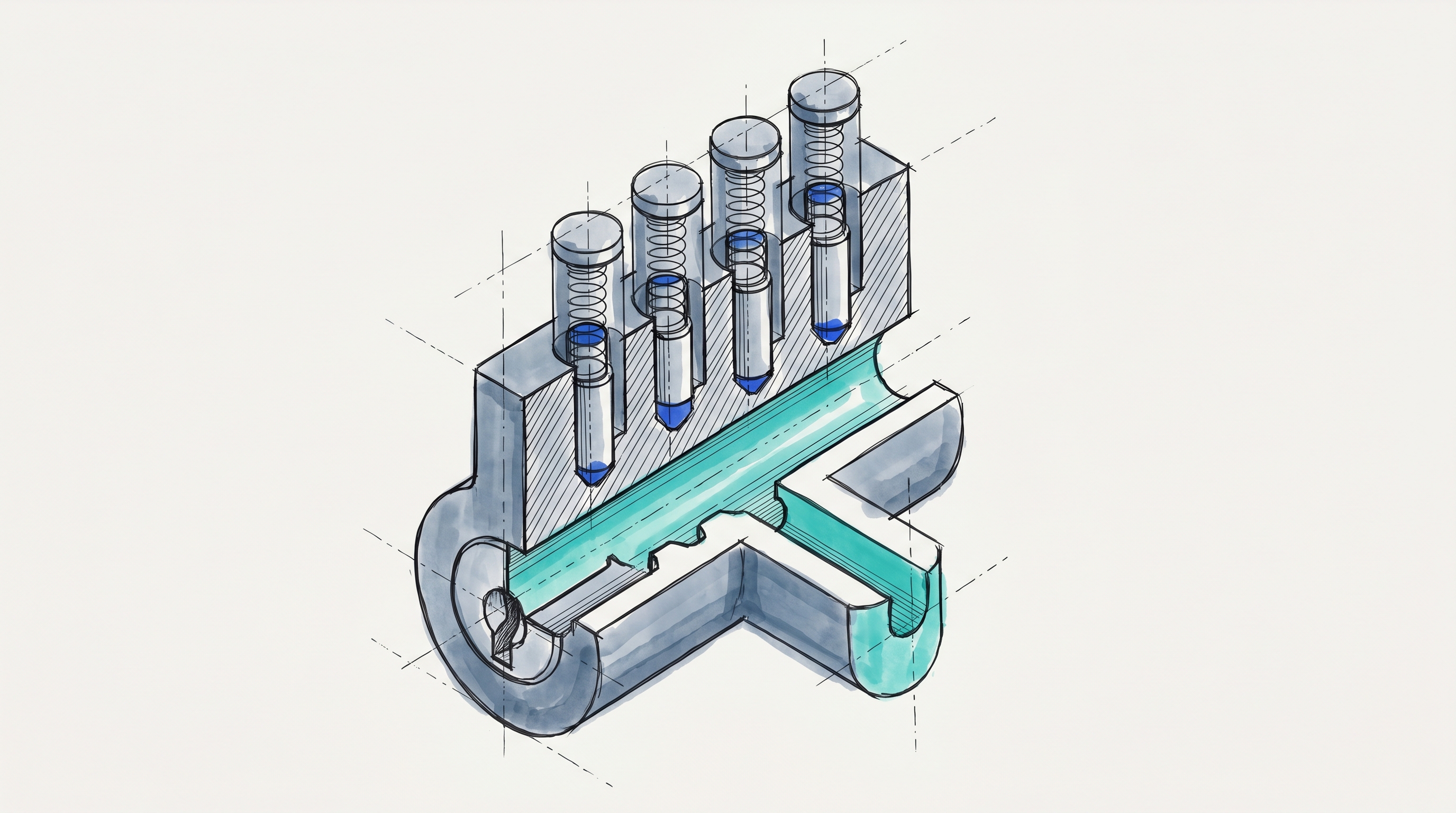

Selection determines which examples appear in the prompt. Empirical studies evaluating selection strategies across multiple model families consistently find that example quality has an outsized effect on output accuracy, with performance varying drastically depending on which samples the model sees. When examples aren't balanced across output classes, models exhibit majority label bias, systematically over-predicting whichever label appears most frequently. This bias doesn't surface as an obvious error and requires stratified evaluation to detect.
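
To make the stratified-evaluation point concrete, here is a minimal Python sketch. The helper names (`balanced_examples`, `per_label_accuracy`) and the ticket labels are hypothetical and the data is made up for illustration; it only shows how an aggregate accuracy figure can hide a class the model never predicts.

```python
from collections import Counter

def balanced_examples(pool, per_label):
    """Pick up to `per_label` examples for each label, keeping pool order."""
    picked, counts = [], Counter()
    for text, label in pool:
        if counts[label] < per_label:
            picked.append((text, label))
            counts[label] += 1
    return picked

def per_label_accuracy(gold, predicted):
    """Accuracy computed separately for each gold label (stratified view)."""
    hits, totals = Counter(), Counter()
    for g, p in zip(gold, predicted):
        totals[g] += 1
        hits[g] += int(g == p)
    return {label: hits[label] / totals[label] for label in totals}

# Made-up labels: a classifier that always answers the majority class.
gold = ["billing"] * 8 + ["bug"] * 2
predicted = ["billing"] * 10

overall = sum(g == p for g, p in zip(gold, predicted)) / len(gold)
by_label = per_label_accuracy(gold, predicted)
print(overall)   # 0.8
print(by_label)  # {'billing': 1.0, 'bug': 0.0}
```

The overall score looks acceptable, while the per-label view shows that every "bug" ticket was misclassified.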

Ordering is not a stylistic choice. Published research on in-context learning confirms that few-shot performance can be sensitive to the order of demonstrations, with studies reporting substantial effects from recency bias and demonstration-order instability. Order sensitivity appears to persist even as model size increases, though larger models can partially offset the effect. A retrieval system that ranks semantically relevant examples differently from query to query makes example order vary between requests as a structural consequence.
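
One way a team might probe order sensitivity is to run the same demonstrations in several orders and check how often the prediction agrees. The sketch below assumes a caller-supplied `predict(examples, query)` function wrapping the model call; the stub used here (`last_label_wins`) is a deliberately crude stand-in for recency bias, not a model of any real LLM.

```python
from collections import Counter
from itertools import permutations

def canonical_order(examples):
    """Sort retrieved examples by a stable key so one set always renders one way."""
    return sorted(examples, key=lambda ex: ex["id"])

def order_agreement(examples, query, predict, max_orders=24):
    """Fraction of tested orderings that produce the most common prediction."""
    outputs = []
    for i, order in enumerate(permutations(examples)):
        if i >= max_orders:
            break
        outputs.append(predict(list(order), query))
    top_count = Counter(outputs).most_common(1)[0][1]
    return top_count / len(outputs)

def last_label_wins(examples, query):
    """Hypothetical stub: always echoes the label of the final demonstration."""
    return examples[-1]["label"]

demos = [
    {"id": 3, "text": "App crashes on login", "label": "bug"},
    {"id": 1, "text": "I was charged twice", "label": "billing"},
    {"id": 2, "text": "Refund not received", "label": "billing"},
]

print([ex["id"] for ex in canonical_order(demos)])  # [1, 2, 3]
score = order_agreement(demos, "Card declined", last_label_wins)
print(round(score, 3))  # 0.667
```

An agreement score well below 1.0 signals that ordering alone is changing outputs. Pinning a canonical order removes the variation between requests, though it does not tell you which order is best.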

Count follows a non-monotonic performance curve. Researchers have documented what they call the "few-shot dilemma," where LLM performance peaks at a specific number of examples and then declines as more examples are added. Google's prompting documentation warns explicitly that too many examples can cause the model to overfit the response to the examples. The first few examples typically capture the majority of accuracy gains, while adding more beyond that yields diminishing returns at considerably higher token cost.
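
A count sweep is one way to find where the curve flattens. This sketch assumes a caller-supplied `evaluate(shots)` function that scores a prompt built from the given demonstrations. The scores below are placeholder values chosen to mimic a peak-then-decline shape, not measurements.

```python
def sweep_counts(examples, counts, evaluate):
    """Score the prompt at each example count using the caller's evaluator."""
    return {k: evaluate(examples[:k]) for k in counts}

def smallest_good_count(scores_by_k, tolerance=0.01):
    """Smallest k whose score is within `tolerance` of the best observed score."""
    best = max(scores_by_k.values())
    return min(k for k, score in scores_by_k.items() if best - score <= tolerance)

# Placeholder scores (illustrative only): accuracy rises, peaks, then drops.
placeholder = {0: 0.62, 2: 0.81, 4: 0.86, 8: 0.865, 16: 0.83}

def fake_evaluate(shots):
    """Hypothetical stand-in for running an evaluation set against the model."""
    return placeholder[len(shots)]

examples = list(range(16))
scores = sweep_counts(examples, [0, 2, 4, 8, 16], fake_evaluate)
print(smallest_good_count(scores))  # 4
```

Choosing the smallest count within tolerance of the best score trades a negligible accuracy difference for a lower token cost per request.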

Formatting is sensitive at the character level. Google's prompting guidance emphasizes that structure and formatting of few-shot examples must remain consistent to avoid undesired output formats, specifically calling out XML tags, whitespace, newlines, and example splitters. Structural placement of the task description can also override format conditioning from examples. The result is unintended outputs despite correct examples.
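
A common mitigation is to render every demonstration through one function so tags, whitespace, and separators cannot drift. The tag names and helper functions below are assumptions made for illustration, not a format prescribed by any provider.

```python
def render_example(text, label):
    """Render one demonstration with fixed tags and normalized whitespace."""
    return (
        "<example>\n"
        f"<input>{text.strip()}</input>\n"
        f"<output>{label.strip()}</output>\n"
        "</example>"
    )

def render_prompt(task, examples, query):
    """Join the task, the demonstrations, and the open query with one separator."""
    blocks = [render_example(text, label) for text, label in examples]
    tail = f"<input>{query.strip()}</input>\n<output>"
    return "\n\n".join([task.strip(), *blocks, tail])

prompt = render_prompt(
    "Classify the support ticket as billing or bug.",
    [("  I was charged twice \n", "billing"), ("App crashes on login", " bug")],
    "Refund not received ",
)
print(prompt)
```

Because stray spaces and newlines are stripped in one place, two engineers adding examples by hand still produce byte-identical formatting.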

These four dimensions don't operate in isolation, and solving one doesn't fix the others. Dynamic retrieval can improve selection quality, but it won't address ordering sensitivity or formatting inconsistencies. All four interact in ways that are difficult to predict without production-scale testing infrastructure.

The Few-Shot Maintenance Problem Nobody Budgets For

Building the initial few-shot prompt is a quick exercise. Maintaining it in production is where engineering time compounds.

Model updates silently invalidate calibrated examples. Unlike software dependencies that change only on explicit version upgrades, closed LLM providers can modify model behavior without announcement. Developers have documented that LLM outputs can change after model updates, even when prompts stay the same. In an AI context, "different" means every downstream step that depended on the old behavior now has to be retested. Few-shot examples calibrated to one model version become a liability when the provider updates.

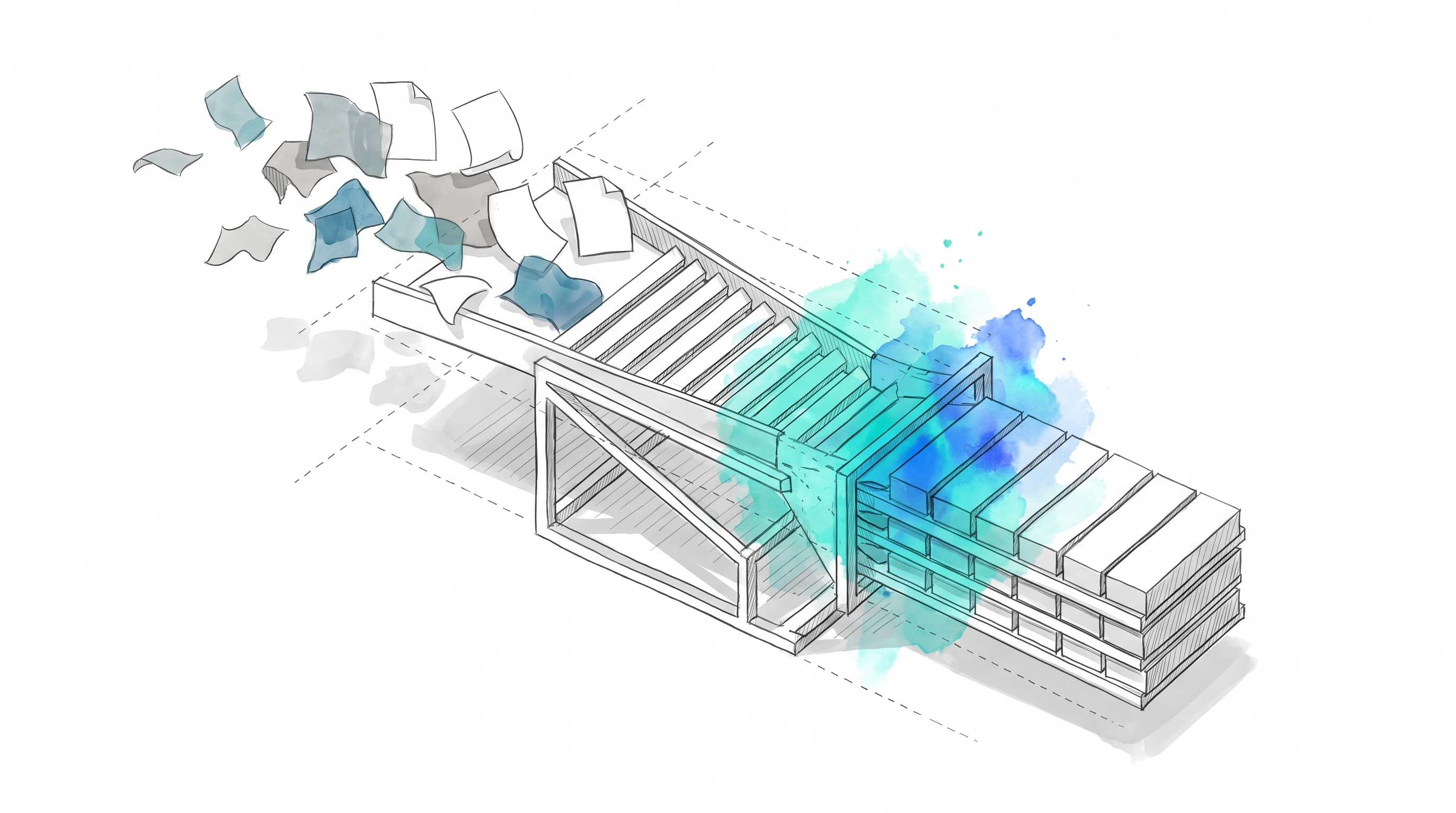

Reactive example accumulation creates prompt debt. Teams add examples every time the model does something undesired. Accumulated examples make the prompt sensitive to changes in unpredictable ways. Anthropic's context engineering guidance addresses this directly, recommending that teams curate a small set of diverse, canonical examples rather than stuffing every edge case into the prompt. The software engineering equivalent is the "God Object" anti-pattern: a prompt grows so complex that performance on common inputs degrades even as it handles more edge cases.

No standard infrastructure exists for prompt regression testing. Developer communities actively discuss approaches: "How do you block prompt regressions before shipping to prod?" and "How are you detecting LLM regressions after prompt/model updates?" appear as dedicated threads without consensus answers. Even prompts that seem robust can still fail unexpectedly when tested against edge cases or used in production.
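
In the absence of standard tooling, a hand-rolled regression check often starts as something like the sketch below. It assumes a caller-supplied `classify(text)` function wrapping the prompt and model call; the keyword-based stub is hypothetical and exists only to make the example runnable.

```python
def run_regression(cases, classify):
    """Return the cases whose current output differs from the recorded expectation."""
    failures = []
    for case in cases:
        actual = classify(case["input"])
        if actual != case["expected"]:
            failures.append(
                {"input": case["input"], "expected": case["expected"], "actual": actual}
            )
    return failures

def stub_classify(text):
    """Hypothetical stand-in for the real prompt plus model call."""
    lowered = text.lower()
    if "crash" in lowered:
        return "bug"
    if "charged" in lowered:
        return "billing"
    return "other"

cases = [
    {"input": "I was charged twice", "expected": "billing"},
    {"input": "App crashes on login", "expected": "bug"},
    {"input": "Cancel my plan", "expected": "billing"},
]

failures = run_regression(cases, stub_classify)
for failure in failures:
    print(failure)
```

Running the same case file before and after a prompt or model change turns "did anything break?" into a list of specific inputs to inspect. Exact-match comparison suits classification labels; free-text outputs would need a looser comparison.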

In practice, the majority of LLM development time goes to error analysis and evaluation rather than building the application itself. Versioning, regression testing, and rollback all require custom infrastructure that every team builds from scratch.
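
As a rough picture of what building versioning and rollback from scratch involves, here is a minimal in-memory sketch. The `PromptStore` class is hypothetical; a real system would also need persistence, access control, and a link between each version and its test results.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class PromptVersion:
    """An immutable snapshot of a prompt; fields cannot be reassigned."""
    number: int
    text: str
    digest: str

class PromptStore:
    """Append-only list of versions plus a pointer to the active one."""

    def __init__(self):
        self._versions = []
        self._active = None

    def publish(self, text):
        """Record a new version and make it active."""
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        version = PromptVersion(len(self._versions) + 1, text, digest)
        self._versions.append(version)
        self._active = version.number
        return version

    def rollback(self, number):
        """Point the active marker at an earlier version without deleting anything."""
        if not 1 <= number <= len(self._versions):
            raise ValueError(f"unknown version {number}")
        self._active = number

    @property
    def active(self):
        if self._active is None:
            raise LookupError("no version published yet")
        return self._versions[self._active - 1]

store = PromptStore()
store.publish("Classify the ticket. Examples: A, B")
store.publish("Classify the ticket. Examples: A, B, C, D")
store.rollback(1)
print(store.active.number)  # 1
```

Rollback only moves a pointer, so later versions stay available for comparison once the regression has been investigated.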

From Examples to Specs: Sidestepping the Management Problem

The core tension with few-shot prompting at scale is that you're encoding behavior through examples, then building infrastructure to manage those examples across selection, ordering, count, formatting, versioning, and regression testing. Logic's spec-driven platform inverts this: you encode behavior through a natural language spec, and Logic transforms that spec into a production-ready agent with typed REST APIs, auto-generated tests, version control, and execution logging.

Instead of crafting and maintaining example sets where each variable independently affects output quality, you describe what you want the agent to do. Logic determines how to accomplish it. The spec can be as detailed or concise as the task requires: a 24-page document with prescriptive input/output/processing guidelines, or a 3-line description. Logic infers what it needs to create the agent either way.

When you create an agent, 25+ processes execute automatically: research, validation, schema generation, test creation, and model routing optimization. Logic generates production-ready infrastructure quickly, complete with typed endpoints, automated tests, versioning, rollbacks, and execution logging. The resulting API includes auto-generated JSON schemas from the agent spec, strict input/output validation, API contracts that stay stable by default unless a schema-breaking change is explicitly approved, and multi-language code samples.

Three specific capabilities directly address the problems that make few-shot example management expensive:

Auto-generated testing replaces manual regression suites. Logic generates 10 test scenarios automatically based on the agent spec, covering typical use cases and edge cases with realistic data combinations, conflicting inputs, and boundary conditions. When tests fail, Logic provides side-by-side comparison of expected versus actual output with structured analysis of which fields didn't match. Teams can add custom test cases or promote any historical execution into a permanent test case with one click. Test results flag potential issues; the team decides whether to proceed.

Version control with instant rollback replaces prompt versioning from scratch. Every spec version is immutable and frozen once created. Teams can compare versions, pin agents to specific versions for stability, and hot-swap business rules without redeploying. When a model provider update changes behavior, rolling back a version is one click rather than a manual process of restoring previous example sets and retesting.

Intelligent model orchestration replaces per-model example calibration. Since optimal example count and ordering are model-specific, teams managing few-shot prompts across multiple providers face a multiplication of calibration work. Logic automatically routes agent requests across GPT, Claude, and Gemini based on task type, complexity, and cost. Engineers don't have to manage model selection, though they can manually override the routing if they prefer.

The Production Evidence

Garmentory, a fashion marketplace, needed to moderate product listings at scale. This is the kind of classification task where few-shot prompting initially seems like the right approach, until example management across product categories, formatting consistency, edge case accumulation, and regression testing turn it into an ongoing infrastructure project.

Instead, Garmentory deployed Logic agents that went from processing 1,000 to 5,000+ products daily, reduced review time from 7 days to 48 seconds, and dropped their error rate from 24% to 2%, all without a custom ML pipeline or model training process.

DroneSense faced a similar pattern with document processing: the kind of extraction task where teams typically build few-shot prompts with format examples, then sink ongoing time into maintaining those examples as document formats change. With spec-driven agents, they reduced processing time from 30+ minutes to 2 minutes per document, with no custom ML pipelines or model training required.

Own or Offload: The Infrastructure Decision

The real alternative to Logic is building and maintaining prompt management infrastructure in-house. That means solving six infrastructure concerns most teams significantly underestimate: testability, version control, observability, model independence, robust deployments, and reliable responses. Whether your agents handle customer-facing classification or internal document processing, each of these concerns applies.

Logic handles all six so engineers focus on application behavior rather than prompt plumbing. Teams evaluating LangChain for production or comparing frameworks like CrewAI against LangChain still need to design their own testing and versioning strategy on top of those tools. Logic takes a declarative approach: write a spec, and Logic handles orchestration, infrastructure, and production deployment. You can prototype in 15 to 30 minutes and ship to production the same day.

After engineers deploy agents, domain experts can update classification rules or extraction criteria directly, if the team chooses to allow it. In a few-shot system, the same changes would require re-curating example sets and retesting prompt behavior. Every change is versioned and reversible, with Git-like diffs and approval workflows for non-technical spec editors. Failed tests flag regressions but don't block deployment; the team decides whether to act on them or ship anyway. API contracts stay stable even as agent behavior evolves: spec changes update behavior without touching endpoint signatures, and schema-breaking changes require explicit engineering approval before taking effect.

Whether the agent handles customer-facing product moderation or internal document processing, the infrastructure requirements are identical: testing, versioning, and reliable execution across model providers. Logic handles those requirements across 250,000+ jobs processed monthly at 99.999% uptime, so engineering bandwidth goes toward what differentiates the product, not toward managing example libraries. For teams exploring whether zero-shot prompting might reduce the maintenance burden, spec-driven agents remove the zero-shot versus few-shot distinction entirely.

Frequently Asked Questions

How does Logic handle the example ordering problem that affects few-shot prompts?

Logic agents operate from declarative specs rather than input-output example pairs. Because behavior is encoded in the spec instead of example permutations, teams do not need to optimize recency, rank ordering, or demonstration placement. That removes a major source of instability that few-shot systems inherit by design. For teams evaluating production reliability, the practical implication is that behavior can be defined in the spec while avoiding separate retrieval logic built solely to manage example order.

What happens when a model provider updates a model and agent behavior changes?

Logic provides auto-generated tests, synthetic scenarios, pinned versions, and rollback controls tied to the spec. If a provider update changes outputs in an undesirable way, teams can compare results against prior expectations, review failures, and revert to a previous version while investigating. Multi-provider routing also reduces dependence on a single vendor. In practice, engineering teams can validate behavior against the test suite first, then use rollback controls if outputs no longer match requirements.

Can Logic agents handle tasks that teams often prototype with few-shot prompts, such as classification and extraction?

Logic agents handle classification, extraction, scoring, and moderation tasks that teams often prototype first with few-shot prompts. In those cases, the spec defines decision criteria, output categories, and edge-case handling directly rather than leaving those rules implicit in examples. That makes behavior more explicit and easier to audit over time. Teams evaluating migration often begin with a high-volume classification or extraction workflow and compare results against the existing prompt-based system.

How do teams migrate from existing few-shot prompt infrastructure to Logic?

Most teams migrate one agent at a time rather than replacing every prompt path at once. Logic agents are exposed as typed REST APIs, so engineers can integrate them into existing systems and run them alongside current prompts during evaluation. Documentation includes example requests, responses, and code samples in multiple languages. A common rollout starts with parallel evaluation on a single workflow, followed by traffic switching only after the new agent meets the team’s requirements.
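
The parallel-evaluation step can be sketched generically. Here `current` and `candidate` are caller-supplied functions: in practice the first would wrap the existing few-shot prompt and the second an HTTP call to the new agent's endpoint. No endpoint or request shape is shown because those details are not specified here; the stubs below are hypothetical.

```python
def shadow_compare(inputs, current, candidate):
    """Run both systems on the same inputs; return agreement rate and differences."""
    disagreements = []
    for item in inputs:
        old, new = current(item), candidate(item)
        if old != new:
            disagreements.append((item, old, new))
    agreement = 1 - len(disagreements) / len(inputs) if inputs else 1.0
    return agreement, disagreements

def current_stub(text):
    """Hypothetical stand-in for the existing prompt-based moderator."""
    return "flag" if "replica" in text else "ok"

def candidate_stub(text):
    """Hypothetical stand-in for the new agent being evaluated."""
    return "flag" if "replica" in text or "counterfeit" in text else "ok"

listings = ["silk scarf", "replica handbag", "counterfeit watch", "wool coat"]
agreement, diffs = shadow_compare(listings, current_stub, candidate_stub)
print(agreement)  # 0.75
print(diffs)      # [('counterfeit watch', 'ok', 'flag')]
```

Disagreements are not automatically regressions. Each one needs review to decide which system was right before any traffic is switched.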

What does Logic's auto-generated testing actually cover?

Logic's auto-generated tests provide a baseline suite that teams can strengthen over time. The platform generates 10 scenarios from the spec, including realistic combinations of inputs, ambiguous cases, conflicting information, and boundary conditions. Engineers can add custom cases for domain-specific requirements or promote historical executions into permanent regression tests. In practice, teams often treat the generated suite as a starting point and then add production-derived cases as requirements and edge cases become clearer.