How to build an AI agent that runs in production

The barrier to entry has never been lower. You can build an AI agent prototype in an afternoon that feels like magic. But there’s a wide gap between an agent that works in a demo and one that works ten thousand times for a customer.

This is a field guide for engineers and technical leaders who want to close that gap. It covers what needs to change for you to go from "it works on my laptop" to "it runs reliably in production," and how to build for that from the start.

Six pillars

There are six properties that separate a demo agent from a durable, production-grade agent:

- Reliable responses: Consistent, typed output from a probabilistic system.

- Testability: Automated tests that work for even fuzzy outputs.

- Version control: Prompts, config, and logic managed like code.

- Observability: Full visibility into the black box of reasoning chains.

- Model independence: Cost, latency, and intelligence balanced across models.

- Robust deployments: Updates shipped safely, frequently, without breaking what's running.

Three approaches

There is no single “right” way to build an agent, but different methods come with different tradeoffs. We examine three dominant approaches:

- Code-driven (imperative): Explicitly programming every step of the orchestration in Python or TypeScript. This offers granular control but demands you manage the complexity of every state transition manually.

- Visual workflow (graph-based): Defining logic via flowcharts and drag-and-drop nodes. This provides high visibility into the process but often becomes unwieldy as logic grows complex.

- Fully managed (declarative): Defining the what in a structured spec and letting a managed-agent platform handle the how. You describe behavior in natural language; the platform runs the agent and the production stack around it.

What is an agent, really?

We debated whether to use the word “agent” at all.

In today’s hype cycle, the term has become a catch-all. It’s applied loosely to everything from simple text classifiers to systems that create complex software or book flights for you.

We decided to use it though, because when you strip away the marketing, it really does describe a specific and rather helpful architectural pattern.

Here is our working definition:

An AI agent is an autonomous system that perceives a context, reasons about it, and acts to transform it toward a desired goal, often using tools to interact with an environment.

In other words: an agent receives a task, figures out what to do, and does it.

Boring is best

The most valuable agents in production today are not the sci-fi, fully autonomous ones. They occupy a pragmatic middle ground somewhere between “a single LLM call” and “AGI.”

Consider a document processing agent. A useful one doesn’t just summarize text; it extracts fields from purchase orders, validates them against dynamic business rules, checks for anomalies, and routes exceptions to a human queue. Or a content moderation agent that evaluates product listings against a set of subjective standards, cleans up the listing if it can, and flags listings that it can’t.

These agents have… agency. The ability to make decisions based on what they find, but within a bounded context.

These are the systems this guide is designed to help you build: agents that are boring enough to be reliable, but smart enough to be transformative.

A rough heuristic

Before writing a line of code or a spec, you should determine if your problem is really agent-shaped.

There is a sweet spot for agentic workflows. If a task is too simple, a standard script (or a regex) is faster. If it is too complex, the probabilistic nature of the LLM leads to compounding errors.

Where agents thrive

If a human with the right context could do the task 100 times a day, it’s likely a strong candidate for an agent. These are tasks where the “reasoning” takes a human anywhere from a few seconds to a few minutes.

Examples: Content moderation, data extraction, classification, scoring, and initial document review.

Pattern: Clear inputs, clear outputs, and a distinct definition of “done.”

Longer horizons

Agents are increasingly capable of handling more complex, extended workflows. However, even as the horizon expands, success remains dependent on the “forgiveness” of the domain. A research agent can survive a minor hallucination; a flight booking agent hallucinating might have more costly consequences.

Where agents struggle

Agents fail when they are pushed “out of distribution” or into brittle workflows.

Deep domain expertise: Agents tend not to do well at tasks that rely on intuition or niche, domain-specific or company-specific knowledge not well-represented in the model’s training data.

Very long horizons: In multi-step processes where a human spends hours reasoning, agent reliability decays geometrically. If a process has 10 steps and the model has a 95% accuracy rate per step, the final success rate drops to ~60%.

- Note: This is fatal in brittle domains (transactions/booking) where one error breaks the chain. It is manageable in forgiving domains (research/drafting) where useful output can survive a partial failure.

Zero-error tolerance: If “almost definitely right” isn’t good enough, it's best not to use an agent without a human-in-the-loop.

The most reliable production systems don’t try to replace humans entirely. They combine LLM reasoning with strict constraints and route the critical, high-risk judgments back to people.

Production-caliber agents

Before you write a line of code, you need to define what “production ready” actually means.

Most agents fail because they lack the structural integrity that traditional software engineering solved decades ago.

If your system is missing these, you will hit a wall.

In the following sections, we will break down exactly how to implement each of these.

Reliability via strict contracts

An LLM is a text completion engine. It does not know what JSON is; it only knows what JSON looks like.

Models return strings. Sometimes those strings are valid JSON. Sometimes they are almost valid JSON. And critically, sometimes they are syntactically valid but semantically wrong, such as returning "confidence": "high" when your downstream system expects "confidence": 0.9.

To survive in production, you must transform the LLM into a module wrapped with well-typed contracts.

Input and output

Reliability requires a formal definition of what goes in and what comes out, enforced at runtime, and not just suggested in a prompt.

On the output side: Most modern models support structured output, where you define a schema and the model is constrained to follow it. Each model handles this differently, though, with its own limits on what it can enforce and how complex the schema can be.

On the input side: This is often ignored. If you feed the model malformed data or missing fields, it won’t throw an error. It will do its best to fill in the blanks. Often this works, but it’s best to validate as much as you can as early as you can.

The fix: You must wrap the stochastic brain of the model in a deterministic shell. Validate inputs before they hit the prompt, and validate outputs before they hit your application.

Why this matters

Without typed contracts, validation becomes a game of whack-a-mole. You’re forced to write defensive code in every single function that consumes the agent’s output.

By enforcing contracts at the boundary, you contain the chaos. The rest of your system can treat the agent like any other API: a black box that takes data of a certain shape and reliably returns data of a certain shape.

The anti-pattern: “prompting harder”

The most common mistake is attempting to prompt your way to reliability. You write sentences like “Please ensure you only return JSON” or “Do not include markdown formatting.”

This will work often, but not always. You cannot prompt your way out of a probabilistic process; you must engineer your way out.

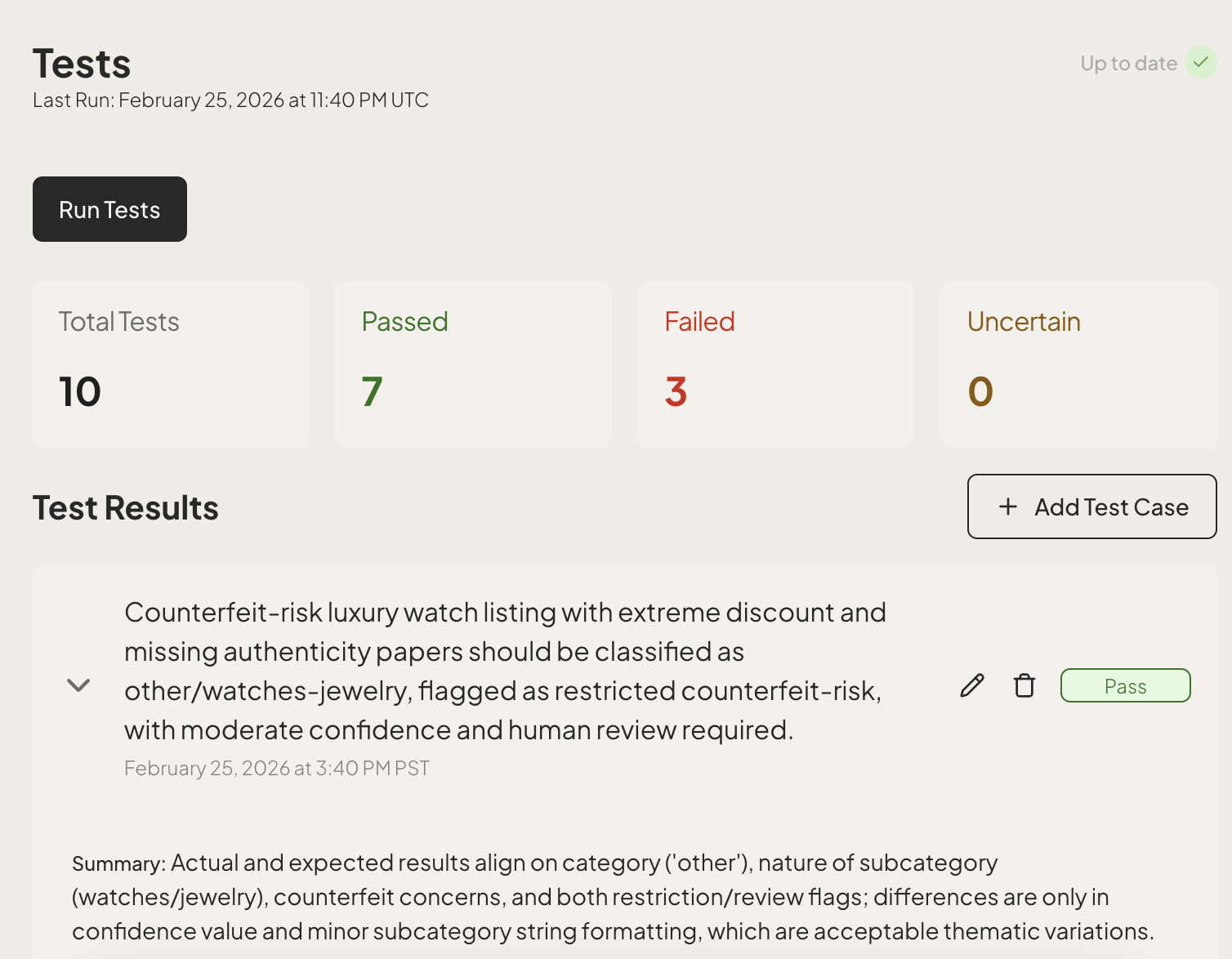

Testability

You wouldn’t ship a payment gateway without thorough tests. Yet teams routinely deploy agents based on vibes: a few manual checks in a demo environment.

The hesitation to do more is understandable. Traditional testing relies on exact string matching. If Input A always equals Output B, the test passes. But LLMs are non-deterministic. The same prompt might yield slightly different phrasings on different runs.

But testing agent outputs is possible. To do it effectively, you need two layers of testing.

Deterministic tests

Instead of testing the prose, test the invariants:

- Structure: Does the JSON have the required keys?

- Logic: If the user asked for a refund, is the

intentfield classified asREFUND? - Safety: Did the agent refuse to answer a jailbreak attempt?

- Negative constraints: Did the agent hallucinate a field that should not exist?

These are binary properties: they either pass or they fail. You can run these on every commit.

Probabilistic evals

Beyond testing for simple correctness, you also need to measure performance:

- The dataset: Run your agent against a “golden set” of 50 or 100 historical inputs where the ideal answer is known.

- The metrics: Define success using an LLM-as-a-judge, or similar:

- Context recall: Did the agent retrieve the correct documents from your knowledge base?

- Faithfulness: Did the response stick strictly to the retrieved context, or did it hallucinate external information?

- Semantic similarity: Does the output mean the same thing as your reference answer, even if the wording differs?

- The threshold: If your previous version scored 92% and your new version scores 88%, you have a regression.

Evals are not about strict pass/fail on any single input. They're about whether the new version is better than the last one.

Why this matters

Without automated tests, every deployment is a gamble. You might tweak a prompt to fix one edge case, but without a test suite, you have no way of knowing if that fix broke ten other behaviors that were working perfectly.

The anti-pattern: ad-hoc QA

The most dangerous habit is manual spot-checking. You open a chat window, type three or four inputs, and if the answers “look good,” you ship.

This works until it doesn’t. A prompt change that fixes a tone issue might subtly break your JSON schema or degrade your reasoning on complex tasks. Without a rigorous evaluation set, you won’t know until a user tells you.

Version control and rollback

Most teams treat their prompts like code and check them into Git. This is nice, but not sufficient.

An agent’s behavior is not defined by the prompt alone. It is a function of multiple variables:

- The prompt (the instructions)

- The model configuration (temperature, Top-P, stop sequences)

- The tool definitions (the API signatures available to it)

- The knowledge base (the specific data the agent can retrieve)

If you change the temperature from 0.0 to 0.7 without changing the prompt, the agent is different. If you update the definition of a search_users tool but leave the prompt the same, the agent might break.

The fix: You need immutable versioned bundles. Every time you deploy, you must snapshot the entire configuration bundle.

Why this matters

When an agent starts hallucinating, you need to know exactly what changed since the last known-good state.

If your prompt lives in a repo, your model config lives in an environment variable, and your tool definitions live in a separate microservice, debugging is impossible. You’re trying to solve an equation where the variables are scattered across many different systems with different lifecycles.

You need a single “source of truth” that captures the exact state of the agent at any given moment.

The anti-pattern: “SSH into prod”

The most common failure mode is editing prompts directly in a production database or UI to “fix it fast.”

This is the AI equivalent of SSH-ing into a production server and editing a config file with nano. It feels fast. It feels heroic. But it leaves no trace and no way to roll back when that “quick fix” breaks.

Velocity comes from confidence. You can only move fast if you know you can undo your mistakes in one click.

Observability

Software fails loudly. It throws an exception and gives you a stack trace pointing to a specific line.

Agents, conversely, fail quietly. Rather than crashing, they just confidently output the wrong answer.

Because agents are non-deterministic, you cannot simply “re-run” the input locally and expect to see the same bug. The specific path the agent took (the tool calls, the reasoning chain, the random seed) might never happen again.

The fix: You need a “flight recorder.” You must capture the full execution graph of every run: the model version, every input, every prompt sent to the LLM, every tool output received, and the exact latency of every step.

Why this matters

When a user reports a hallucination, you are not debugging code. You are debugging a one-off (potentially) non-repeatable process.

Without a trace, you are flying blind. You can see the bad output, but you cannot see the bad context that caused it. Did the retrieval step fail to find the document? Did the model misunderstand the tool schema? Did the prompt get truncated?

You need to rewind the tape to understand why the agent made a bad decision.

The anti-pattern: “hope-based debugging”

The classic mistake is relying on console.log("agent response:", result).

This doesn’t show you the work. When a downstream user reports a failure, your debugging process becomes: try to reproduce it locally with different inputs, hope you get lucky, push a speculative fix, and wait to see if the complaints stop.

Model independence

The AI landscape is shifting constantly. The model you build on today will be deprecated, expensive, or simply outperformed in six months.

More importantly, no single model is the “best” at everything. You are always navigating this triangle:

- Quality: How capable is the reasoning of this model?

- Latency: How fast are the responses?

- Cost: How much does it cost for a response?

The smartest models are often the slowest and most expensive, and the fastest ones sacrifice quality to get there.

If your approach to building agents is tightly coupled to a specific model, you cannot optimize this triangle. You are stuck with one set of trade-offs for every single task, regardless of whether it requires a PhD or an intern.

The fix: You need model agnostic design. Your agent architecture should treat the model as a swappable component.

Why this matters

- Cost optimization: You shouldn’t pay for a PhD-level model to summarize a simple email. A well-designed system lets you route low-complexity tasks to cheaper, faster models.

- Latency sensitive flows: For customer-facing chat, speed is a feature. You might sacrifice a small amount of “quality” (nuance) for a massive gain in “latency” (snappiness).

- Peak performance: No single model wins everything. One might dominate coding tasks while another leads in creativity or long-contexts. By decoupling, you can cherry-pick the absolute best model for each specific sub-task. Your “Quality” metric stays at the theoretical maximum.

The anti-pattern: hard-coded strings

The most limiting decision is hard-coding gpt-5.4 (or any specific model name) deep into your codebase. This forces you to opt out of the triangle and surrender the ability to balance cost, speed, and quality as needed across your fleet of agents.

Robust deployments

Most post-production changes for agents are not structural. They are behavioral: a prompt adjustment to fix a tone issue, a new instruction for an edge case, or a tweaked tool description to reduce errors.

If every one of these changes requires a pull request, a code review, a staging build, and a full production rollout, you have introduced a “velocity tax” that will kill your product.

The fix: You must recognize that you’re dealing with two distinct lifecycles:

- Application code (the skeleton): Routes, database connections, API handlers. Changes infrequently. Managed by engineers.

- Agent logic (the brain): Prompts, few-shot examples, domain rules. Changes constantly. Managed by domain experts, engineers, or both.

The most successful agents aren’t “set-and-forget.” Even after going live, they’re constantly refined by the people closest to the problems they solve.

Why this matters

A production deployment requires a release strategy that respects the probabilistic nature of the agent. This is generally characterized by:

- Expert ownership: The people who know how the agent should behave (the lawyers, doctors, or support leads) are rarely the ones writing the Python code. Decoupling the brain allows these experts to refine the spec directly. If every improvement must pass through a git commit, you’ve locked your most valuable teachers out of the classroom.

- Shadow deployments: Before a new agent version goes live, you should be able to run it in shadow mode. It processes real production data in the background, but its outputs are silenced. This allows you to compare the new version’s performance against the old version using real-world traffic without risking the user experience.

- Canary rollouts: You should rarely flip a switch for 100% of your users on a new prompt. A mature deployment system allows for incremental rollouts. You move 1% of traffic to the new “brain,” monitor the observability traces for hallucinations or errors, and then gradually ramp up.

- Instant rollbacks: In AI, a bug might not appear for hours until a specific edge case is hit. You need a big red button. If the new version starts behaving erratically, it’s crucial to be able to revert the agent logic to a known-good state in seconds without waiting for a full code redeploy.

The anti-pattern: deployment bottleneck

The most common mistake is treating agents like traditional code.

This creates a bottleneck where your expensive software engineers are reduced to copy-pasting text edits. It forces the evolution of your agent to move at the speed of your slowest engineering process. Worse, it makes safe deployment patterns, like shadows and canaries, nearly impossible to implement without a lot of custom infrastructure.

Production readiness checklist

Use this audit to evaluate any agent, regardless of how it was built. Be self-critical. In production, the edge cases you ignore are the ones that will eventually wake you up at 3:00 AM.

The Scale:

- 0 (Not Present): You don’t have this today.

- 1 (Partial): You have addressed it, but there are gaps, manual workarounds, or heroics required.

- 2 (Fully Handled): This works reliably, automatically, and without friction.

| Property | 0 (Not Present) | 1 (Partial) | 2 (Fully Handled) | Your Score |

|---|---|---|---|---|

| Reliability | No schema validation. Agent accepts or returns arbitrary unstructured data. | Some validation exists, but it is not enforced across all inputs and outputs. | Full typed contracts on every input and output. Validated at the boundary. | _ / 2 |

| Testability | No automated tests. You verify performance by running manual prompts. | Tests exist, but they rely on mocks or only cover “happy path” scenarios. | Deterministic tests against real model outputs. Edge cases are covered. | _ / 2 |

| Version control | No way to track what changed between agent versions or why. | Git-based code versioning exists, but prompt and config changes are untracked. | Every change (code, prompt, config) is versioned and auditable. One-click rollback. | _ / 2 |

| Observability | Minimal logging. Debugging requires trying to reproduce issues locally. | Basic logs exist, but there are gaps in tracing multi-step reasoning. | Full execution history. Every input, output, and tool call is captured. | _ / 2 |

| Model independence | Agent logic is hardcoded to a single model provider. Switching is a refactor. | You can switch models, but it requires manual configuration and re-testing. | Model-agnostic logic. You can swap providers or route based on cost/latency. | _ / 2 |

| Robust deployments | Every behavioral change requires a full application code deploy. | Some config is externalized, but updates still pass through a slow pipeline. | Behavior updates independently. Domain experts can tune the “brain” safely. | _ / 2 |

Total: __ / 12

How to read your score

The score is a measurement of your system’s structural integrity. It tells you how much luck you are currently relying on to keep your users happy.

10–12: Production-ready

You have built a professional system. You have addressed the properties that matter, and your team can ship, debug, and iterate with confidence. At this level, your focus should shift to optimizing the triangle of cost, latency, and quality.

8–9: The danger zone

You are close, but you have specific vulnerabilities. You can likely run in production today, but a single silent failure in one of your gap areas will cost more in reputational damage than the time it would take to fix it proactively.

5–7: Prototype debt

You are carrying significant technical debt. This system is fine for an internal demo or a limited beta, but it will likely surface critical issues under load. You are currently in the “Move Fast and Break Things” phase, but you are mostly breaking things.

Below 5: The happy-path trap

You have built something that works in a controlled environment. That is a great start, but there is a chasm between a demo and a product. Use this scorecard as your roadmap for what to build next.

In the early days of any technology, builders tend to start with raw code. It is often the only lever available. As the field matures, abstractions emerge. These abstractions, ideally, shift the burden of complexity and best practices from the engineer to the system.

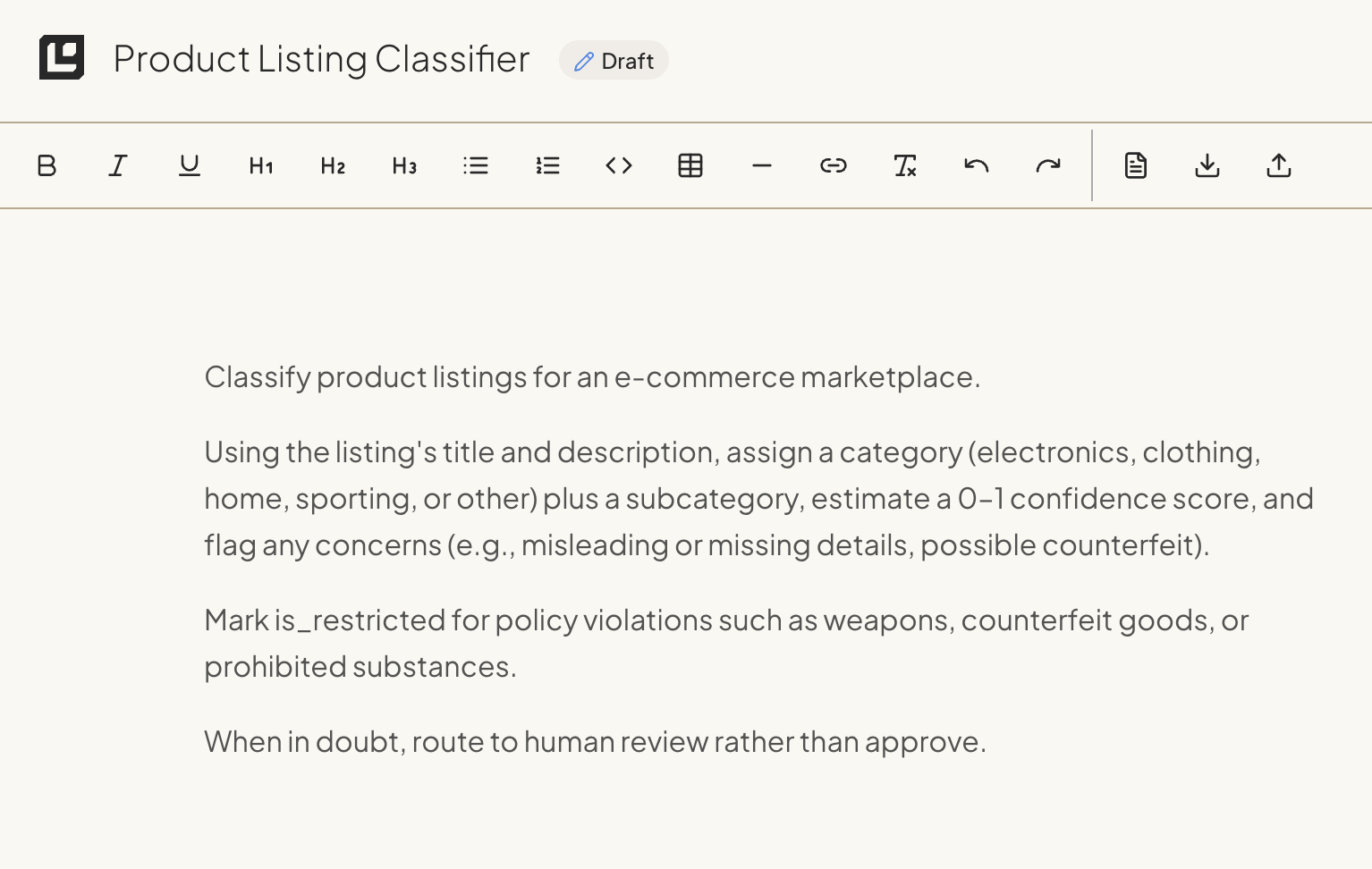

In this section we show three distinct paths to building the same agent. To compare them fairly, we will use a single, concrete example: a product listing classifier.

The task is straightforward. The agent’s job is to take a raw product listing and return a structured classification. It must identify the category, the subcategory, and any relevant content flags like safety warnings or age ratings.

Before we get into implementation, we’ll need to cover the building blocks that every agent requires. These components (instructions, loops, multi-agent coordination, tools, knowledge, and guardrails) are universal. Understanding them first will make the approach-specific sections that follow much more concrete.

Once we’ve done that, we’ll run through three different approaches to building agents:

- Code-driven (imperative) In this approach, you write the orchestration logic yourself. You are responsible for the Python or TypeScript that handles the prompt construction, the API calls, the retry logic, and the state management. This is the path of maximum control but also maximum manual labor. You are responsible for every line of the implementation.

- Visual workflow (graph-based) This approach moves the logic to a canvas. You compose nodes and edges to define the flow of data. You can see the path from input to output. This makes the logic easier to audit visually, but it can become unwieldy as the number of nodes or edge cases grow.

- Fully managed (declarative) In this approach, you write a structured specification of the desired behavior, and a managed-agent platform runs the agent and the production stack around it. You describe the rules, schemas, and goals in a single document. The platform handles orchestration, observability, model routing, deployment, and the lifecycle of the agent itself.

The building blocks of an agent

Every agent, regardless of how it’s built, is assembled from a combination of the same fundamental pieces: instructions, a reasoning loop, tools, and guardrails. This section walks through each of those fundamental pieces.

Instructions: write prompts like you’re onboarding a new hire

Whether you write your instructions as a Python string, paste them into a node configuration, or declare them in a spec, the principles are the same. The instruction set is the single most important factor in most agents’ reliability.

Think of it as writing an SOP for a new employee on their first day. You wouldn’t hand a new hire a one-sentence description and expect them to handle every edge case correctly. You’d give them numbered steps, decision criteria, examples of tricky situations, and clear escalation paths.

The system prompt in a prototype is often a single sentence. This works for a demo, but it collapses under the weight of real-world complexity.

In a production environment, your prompts should mirror your company’s standard operating procedures, decision trees, or runbooks. If a human expert already has a manual for this task, that manual is your best starting point.

Here’s the difference between a prototype prompt and a production prompt for the same classifier:

Prototype:

Classify a product listing. Return the category, subcategory,

whether it's restricted, your confidence score (0-1), and any content flags.Production:

You are a product classifier for an e-commerce marketplace.

## Your task

Classify incoming product listings into categories and check for policy violations.

## Steps

1. Read the product title and description.

2. If a SKU is present, call lookup_product_history to check for prior classifications.

If a prior classification exists with confidence > 0.9, return it unchanged unless the description has materially changed.

3. Determine the primary category from: electronics, clothing, home, sporting, other.

4. Determine the most specific subcategory you can (e.g., "wireless headphones" not just "audio").

5. Check for policy violations:

- Weapons or weapon accessories: RESTRICTED

- Counterfeit goods (mentions "replica", "inspired by", or brand names with

suspiciously low prices): RESTRICTED

- Prohibited substances: RESTRICTED

- Health claims without disclaimer: FLAG but not restricted

6. Set confidence score:

- 0.9-1.0: Clear category, unambiguous description

- 0.7-0.89: Likely correct but description is vague or could fit multiple categories

- Below 0.7: Uncertain. Call flag_for_human_review with the reason for uncertainty.

7. If is_restricted is true, always also call flag_for_human_review with urgency "high".

## Edge cases

- Vintage or collectible items: Classify by the item's original category, not "other".

A vintage Sony Walkman is electronics, not collectibles.

- Bundles: Classify by the primary item in the bundle.

- Handmade items: Use the category of comparable manufactured goods.

- If the description is in a language other than English, do your best to classify it and add a flag: "non_english_listing".The production prompt succeeds because it breaks the task into discrete, numbered steps. It eliminates ambiguity by defining specific thresholds for tool usage and confidence scoring.

By spelling out edge cases, you prevent the model from having to guess when it encounters, say, a vintage item or a product bundle. This level of detail may feel like overkill during the initial build, but it saves significant time during the debugging phase.

A few patterns that consistently improve prompt quality:

Numbered steps over prose. Models follow numbered instructions more reliably than paragraph-form descriptions. Each step becomes a checkpoint the model can work through sequentially.

Explicit thresholds. “Flag low-confidence results” is vague. “Call flag_for_human_review when confidence is below 0.7” is actionable. Wherever you can replace a judgment call with a number, lean toward doing it.

Negative examples for edge cases. Telling the model what not to do is sometimes more effective than telling it what to do. “A vintage Sony Walkman is electronics, not collectibles” prevents a common misclassification before it happens.

Escalation paths. Every production prompt should include a “when in doubt” instruction. If the model can’t confidently complete the task, it should have a clear fallback: flag for review, ask for clarification, or return a lower-confidence result with an explanation.

The agent loop

At the heart of every agent is a cycle: observe, reason, act, repeat.

The agent receives input and a goal. It reasons about what to do next. If it decides to use a tool, it calls that tool, observes the result, and feeds that result back into its reasoning. This cycle continues until the agent has enough information to produce a final answer, determine that its task is accomplished, or a safety boundary stops it.

Three things matter in every agent loop implementation:

Iteration limits are financial and safety guardrails. Without a cap on iterations, an agent that encounters an ambiguous result or a circular tool dependency can enter an infinite loop. Every iteration burns tokens and increases your bill without producing a result. Tune the limit based on the task: a document classifier rarely needs more than three turns. A research agent that’s calling multiple APIs might need ten.

Context history is how the agent maintains state. Each time a tool is called or a step completes, the result gets added to the agent's working context. This accumulating state is what lets the agent remember what it's already tried and adjust its strategy if helpful. But there's a catch: if the loop runs too long, the context window fills up, or the model gets distracted by irrelevant earlier results. Managing context growth is a real engineering concern in any long-running loop.

Start with a single agent. It’s tempting to jump to multi-agent architectures early. But for most production workloads, a single-agent loop is more than sufficient. Multi-agent systems introduce complexity in state management and hand-offs. You should only move to a multi-agent setup when you have reached a specific complexity ceiling that a single loop cannot resolve.

Multi-agent patterns

A single-agent loop handles most production tasks well. But it has a ceiling: context dilution. This happens when the volume of tools, instructions, history, and edge cases exceeds the model’s ability to maintain focus. You’ll see it as degraded tool-selection accuracy, weaker reasoning, or hallucinations that weren’t there before.

When you hit that ceiling, you split into multiple agents, each with a narrower scope. Two patterns dominate.

The manager pattern. A central coordinator agent analyzes the incoming request and delegates it to a specialist. Each specialist has its own focused set of tools and instructions. A billing specialist only sees billing tools. A compliance specialist only sees compliance instructions. By reducing the state space each agent manages, you get more reliable behavior from the underlying model. This pattern works well for broad systems like enterprise support platforms where the request type determines which domain expertise is needed.

The handoff pattern (pipeline). Work flows in a linear sequence between agents with distinct responsibilities. For instance, Agent A might complete extraction and then pass the result to Agent B for validation, which then passes it to Agent C for final classification. Each agent has a single job and a narrow context. This is the right choice for predictable workflows where the steps are known in advance and don’t change based on content.

When to stay single-agent vs. go multi-agent: If your single agent handles its tools accurately and produces consistent results, don’t split it. If you’re seeing it confuse which tool to call, or if it starts ignoring instructions that it followed reliably when the context was smaller, those are empirical signals that you’ve hit the dilution threshold. The right time to add a second agent is when you have data showing the first one can’t keep up.

Every additional agent introduces latency and new failure boundaries. If the coordinator in a manager pattern delegates incorrectly, the entire request is lost. Keep the architecture as simple as the task allows.

Tools

Tools are how agents interact with the outside world. An agent reads a tool description, decides whether to call it, and passes structured parameters. The tool executes and returns results the agent can reason about.

Most production agents eventually need to reach outside their context: look something up, write a result somewhere, notify someone, or trigger a downstream process.

There’s a tendency to treat tools as an afterthought: get the prompt right, then bolt on some API calls. That’s backwards. Tool design shapes agent behavior as much as your instructions do. The model decides what to do based on two things: what you told it in the system prompt (or spec), and what tools are available. If your tools are poorly described, poorly scoped, or poorly structured, the agent will make bad decisions regardless of how good your prompt is.

Tools fall into three categories with different risk profiles. Data tools are read-only operations (database queries, API lookups, vector searches) that give the agent context it doesn’t have. Action tools are state-changing operations (sending emails, updating records, triggering deployments) that affect real systems. Orchestration tools hand off sub-tasks to specialized agents or trigger background workflows. Your guardrail strategy should match the risk: action tools that can send emails or update records need more protection than a read-only database lookup.

How many tools is too many?

This is changing constantly, but in our experience most models start to struggle with tool selection somewhere around 15-20 tools.

If you need more than 15 tools, that’s usually a signal that you need to split your agent into multiple specialized agents (see the multi-agent patterns above). An agent that handles billing with five focused tools will outperform one that handles everything with thirty.

A useful exercise: for each tool, ask whether you’d be comfortable removing it entirely. If the agent’s core task still works without a tool, that tool might belong in a different agent or a separate workflow.

Tool design principles

Good tool design comes down to three things:

Clear descriptions that tell the model when to use the tool, not just what it does. Write your tool descriptions as if you’re explaining to a new teammate which situations call for which tool. Include the conditions under which the tool should be used, and just as importantly, when it shouldn’t. “Do NOT use this for bulk operations” is the kind of negative instruction that can prevent expensive mistakes.

Typed parameters with constraints. Every parameter should have a type, a description, and validation rules. If a parameter expects a date, specify the format (ISO 8601, not “a date string”). If a parameter must be one of a fixed set of values, use an enum. The more specific your parameter definitions, the fewer malformed tool calls you’ll debug.

This applies to return values too. Your tool should return structured data the model can reason about, including in error cases. A tool that returns {"error": "Rate limit exceeded, retry after 30 seconds"} gives the model something to work with. A tool that throws an unhandled exception gives the model nothing.

Scope each tool to one job. A tool called manage_user that can create, update, delete, and list users is four tools pretending to be one. The model has to figure out which operation you mean from context, and it’ll guess wrong often enough to cause problems.

Scoping tool permissions

Not every tool should have the same level of trust. A read-only database lookup is low risk. A tool that sends an email to a customer is medium risk. A tool that deletes records or processes refunds is high risk.

Build your permission model around this distinction:

Read-only tools can generally run without additional guardrails. If the worst case is returning incorrect data, the model will usually catch it and try again.

Write tools should validate their inputs before executing. Check that the target exists, that the values are within expected ranges, and that the operation makes sense in context. Log every call.

Destructive or irreversible tools should require human-in-the-loop confirmation. Your agent should never be one confused inference away from deleting production data. If an action can’t be undone, make the agent prove it should happen before it does.

A common mistake is giving an agent tools it doesn’t need “just in case.” Every tool you add is a tool the model might misuse. If your product classification agent doesn’t need to send emails, don’t give it an email tool. The attack surface of your agent is the union of all its tools. Keep it as small as your use case allows.

Knowledge and RAG

Not every agent needs retrieval-augmented generation. The decision comes down to scope and stability.

Use instructions when your agent’s scope is narrow and the relevant context is stable. A product classifier that needs to know ten categories, a set of policy rules, and some edge cases can fit all of that in a well-written prompt. Adding a retrieval layer here adds latency, chunking complexity, and a new failure surface without meaningful benefit.

Use RAG when the domain knowledge exceeds what fits in a prompt, changes frequently, or spans many documents. If your agent needs to reference a 200-page compliance manual, or if the policies it enforces get updated weekly, you can’t keep that in the instructions alone. RAG lets the agent pull in only the context it needs for a given request.

The common mistake is reaching for RAG too early. Teams add vector databases and embedding pipelines because it feels like the “production” thing to do, and then spend weeks debugging retrieval quality issues that wouldn’t exist if they’d just put the relevant context in the prompt. Start with instructions. Add RAG when you hit the limits: the prompt is too long, the context changes too often, or the agent needs to search across a corpus that’s too large to include directly.

When you do implement RAG, the quality of your retrieval matters more than the quality of your model. Bad retrieval with a great model produces worse results than good retrieval with a decent model. Invest in chunking strategy, embedding quality, and relevance scoring before you upgrade the LLM.

If you’re building RAG yourself, that means standing up embedding pipelines, vector databases, and retrieval logic. Third-party platforms can simplify this significantly. If you go the managed-platform route, Logic includes a knowledge library that lets you attach documents and data sources to agents without building the retrieval infrastructure yourself.

Guardrails and safety

Guardrails aren’t optional for production agents. A chatbot that hallucinates gives you a wrong answer, but an agent that hallucinates might send a real email, delete real data, or charge a real credit card.

Effective guardrails operate at three interception points: before the model reasons, after it responds, and around each tool it can call.

Input guardrails

Filter before the agent reasons about a request. Three layers are common:

Relevance classifiers reject off-topic inputs before they consume LLM tokens. If your agent handles refund requests, it shouldn’t process questions about the weather. A lightweight classifier (or even keyword rules) can handle this cheaply.

Prompt injection detection catches attempts to override your agent’s instructions through user input. This is an active area of research with no perfect solution, but layered defenses (input scanning, instruction hierarchy, output monitoring) reduce the attack surface significantly.

PII filtering strips or masks sensitive data before it reaches the model. If your agent doesn’t need social security numbers to do its job, don’t let them into its input.

Beyond these, standard structural validation applies: check that required fields are present, that values are within expected ranges, and that the input isn’t suspiciously long or malformed. This is the same kind of input validation you’d do for any API endpoint.

Output guardrails

Schema enforcement (via Pydantic, JSON Schema, or platform-level validation) ensures the output matches a defined type. But valid structure doesn’t guarantee correct content. A model can return a perfectly formatted JSON object that’s factually or logically wrong.

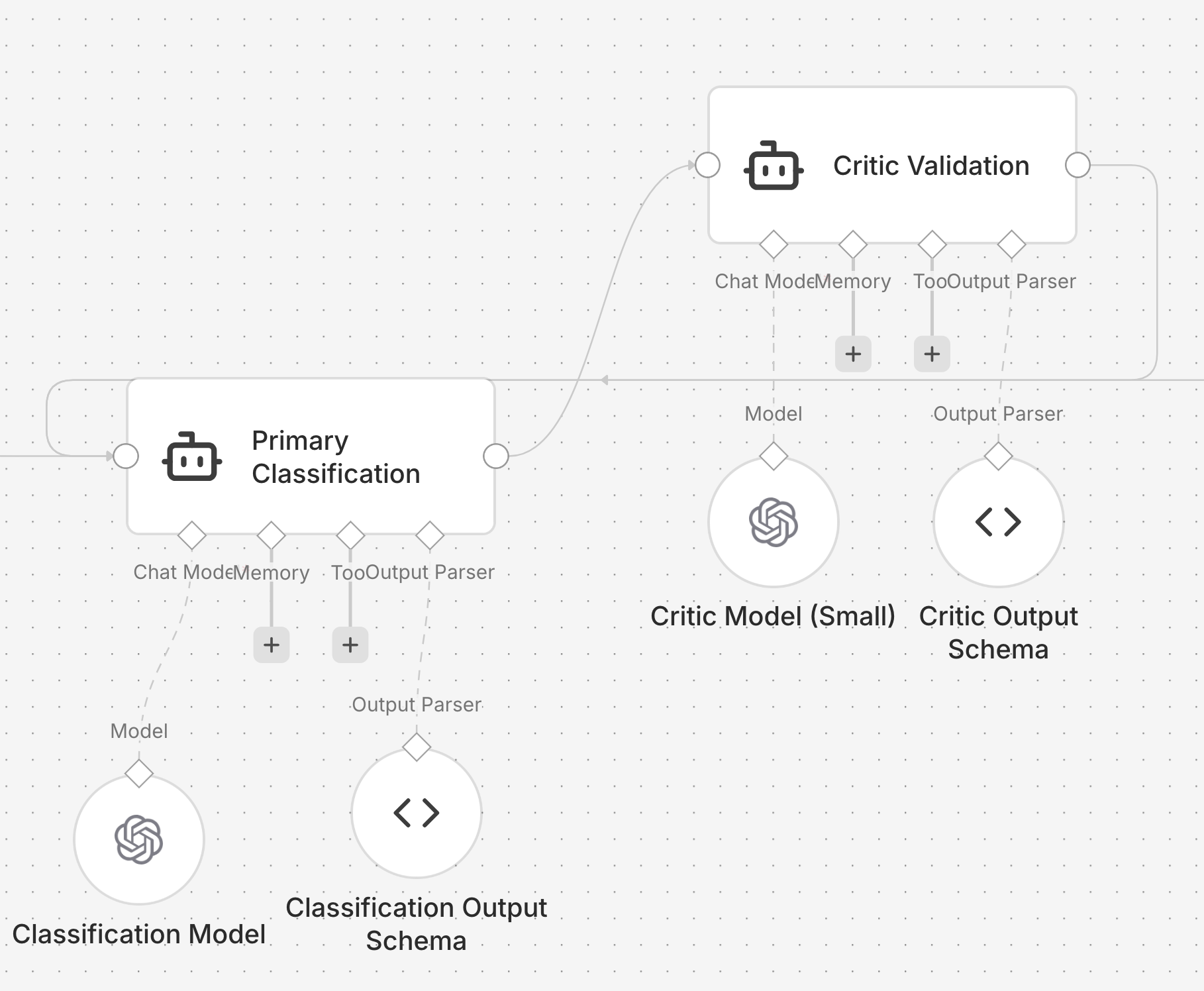

Hard-coding semantic validation checks for every possible error is a losing battle. The professional solution is the critic pattern: use a secondary agent (often a smaller, cheaper model) to review the primary agent’s output against a set of quality criteria. The critic checks for logical consistency, flags suspicious patterns, and can reject outputs that pass structural validation but fail semantic review.

For example, a critic for a product classifier might check: Is the confidence score high but the category set to “Other”? Is an item marked as restricted but the flags don’t explain why? Is the subcategory a logical subset of the primary category? These are checks that are hard to express as code rules but easy to express as natural language criteria for a second model.

The critic can also serve as a natural language audit trail, which is valuable in regulated domains.

Tool-level guardrails

Tool-level safety enforces the principle of least privilege. It ensures the agent cannot execute unauthorized actions, regardless of the instructions it received. This is where you enforce rate limits, approval requirements for destructive actions, and scope restrictions.

A product classifier shouldn’t be able to delete products, even if someone manages to inject that instruction. The allowlist of available tools is your hard boundary. Anything not on the list is blocked regardless of how convincingly the model argues for it.

When to escalate to humans

Your agent will get things wrong, especially early on. Human intervention gives you a way to catch those failures and improve the agent’s performance without burning user trust. The goal is a graceful handoff: when the agent can’t complete a task, it transfers control to a human rather than guessing its way into a worse outcome.

What that handoff looks like depends on your use case. A customer service agent escalates to a human support rep. A coding agent surfaces the problem and hands control back to the developer. A document processing agent flags the item for manual review. The shape is different, but the principle is the same: the agent should know its own limits and act on them.

Two triggers should always warrant escalation:

Exceeding failure thresholds. Set limits on retries and repeated failures. If the agent can’t understand a customer’s intent after three attempts, or if it keeps hitting errors on a particular task, escalate. Define these limits before you deploy, not after a user has already sat through five failed attempts.

High-risk actions. Actions that are sensitive, irreversible, or carry real financial consequences should require human approval until you’ve built confidence in the agent’s reliability. Canceling orders, issuing large refunds, modifying account permissions: these shouldn’t be one confused inference away from happening. As your agent proves itself over time, you can selectively loosen the reins.

Build the escalation path before you need it. An agent that fails silently is worse than one that asks for help.

The building blocks above are universal. Every agent needs some combination of instructions, a loop, tools, and guardrails regardless of how you build it. What changes is how you implement them. The next three sections walk through the same product classifier built three different ways: writing the code yourself, assembling it in a visual workflow tool, and defining it as a spec. Each approach makes different trade-offs around control, speed, and maintenance burden.

A. Code-driven (imperative)

In the imperative approach, you as developer are responsible for the entire orchestration lifecycle. This includes managing state, handling model input and output, and implementing the necessary logic for error recovery.

Most engineers begin with this method because it maps directly to standard software development patterns. You use familiar languages like Python or TypeScript and maintain granular control over the execution flow.

The code-driven path splits into two practical starting points. DIY on raw API calls is where most teams begin: you call the OpenAI, Anthropic, or Google API directly, parse the response, and build everything else yourself. It teaches you the underlying primitives, but you take on the cost of building retries, validation, logging, and testing on your own. Frameworks sit one layer up. They provide opinionated abstractions for prompts, tool calling, and state management. Both are flavors of imperative orchestration: you write the orchestration logic and you ship it as application code.

The example below uses raw API calls so the contract stays visible. Frameworks are covered later in this section.

The following implementation demonstrates a basic product classifier using the OpenAI SDK and Pydantic for schema enforcement.

import logging

from enum import Enum

from typing import Optional

from openai import OpenAI

from pydantic import BaseModel, Field

SYSTEM_PROMPT = (

"Classify this product listing. Return the category, subcategory, "

"if it's restricted (boolean), confidence score (0-1), and any content flags."

)

class Category(str, Enum):

ELECTRONICS = "electronics"

CLOTHING = "clothing"

HOME = "home"

SPORTING = "sporting"

OTHER = "other"

class Classification(BaseModel):

category: Category

subcategory: str = Field(description="The specific niche within the category")

is_restricted: bool

confidence: float = Field(ge=0, le=1)

flags: list[str]

def classify_product(

title: str,

description: str,

max_retries: int = 3,

model = 'gpt-5.4',

client: OpenAI = OpenAI(), # For dependency injection

) -> Optional[Classification]:

"""

Orchestrates the classification of a product listing with retry logic.

"""

for attempt in range(max_retries):

try:

response = client.beta.chat.completions.parse(

model=model,

response_format=Classification,

messages=[

{"role": "system", "content": SYSTEM_PROMPT},

{"role": "user", "content": f"Title:{title}\\\\\nDescription:{description}"}

],

)

return response.choices[0].message.parsed

except Exception as err:

logging.error(f"Attempt{attempt + 1} failed:{err}")

if attempt == max_retries - 1:

# In a real system, you would emit a metric here

raise err

return NoneThis implementation is functional and concise. In roughly 30 lines, it addresses two of our six properties: reliability (via Pydantic contracts) and basic resilience (via a retry loop).

However, this is a solitary component, not a production system. To bridge the gap to a production-ready agent, several non-trivial engineering challenges remain. When you hardcode orchestration logic in this manner, you assume the burden of building and maintaining the infrastructure for observability, versioning, and evaluation yourself.

Model selection: start with capability

In the code example above, we specified gpt-5.4. However, at the prototyping stage, the specific provider is secondary to the logic of the agent. The priority is to establish a baseline of correct behavior using a frontier-class model.

A common pitfall is to spend cycles benchmarking models before your agent is even functional. While optimization is a valid engineering goal, you cannot effectively optimize a system without data. Benchmarking without a working agent is a speculative exercise rather than an empirical one.

The development sequence

The most efficient path to production follows a specific order of operations:

- Establish the baseline: Build the agent using a high-capability model to ensure your instructions and schemas are sound.

- Define the evals: Create a set of test cases based on real or synthetic data.

- Optimize for the triangle: Once you have a working baseline, run your evals against smaller, faster, or cheaper models.

Tiered routing

As you move toward production, it will usually make sense to adopt a tiered routing pattern. In this architecture, a lightweight classifier evaluates the complexity of an incoming request. Simple tasks are routed to a fast, cost-effective model, while high-complexity reasoning is reserved for more capable frontier models.

This approach allows you to maximize quality while minimizing latency and cost. However, this is an optimization for later. For now, the goal is functional correctness.

Design for change

Even in the earliest prototype, do not scatter model names throughout your codebase. Centralize your model selection in a configuration file or a global constant. This minor architectural discipline ensures that when it is time to swap models or implement routing logic, you are not performing a global find-and-replace across your entire repository.

Instructions

The production prompt from the building blocks section gets implemented as a Python string assigned to a constant. Here’s the practical difference in the code-driven approach: the prototype prompt and the production prompt are both just strings, but the production version does substantially more work.

The prototype prompt we started with:

SYSTEM_PROMPT = (

"Classify this product listing. Return the category, subcategory, "

"if it's restricted (boolean), confidence score (0-1), and any content flags."

)Gets replaced by the full production prompt (see the building blocks section for the complete text). In a code-driven system, you’d typically store this in a constants file or load it from a configuration system so it’s easy to find, update, and version.

Tools

The building blocks section above covered why tool design matters, how to categorize tools, and how to scope permissions. Here’s what that looks like in practice.

A tool is defined by its name, a set of parameters, and a description. This description is not just for documentation. It’s also the primary signal the agent uses to decide when to call it.

Here are two tool definitions for our product classifier.

Tool: lookup_product_history

Description: "Look up previous classifications for a product by SKU.

Use this when the product has been classified before and you want

to check for consistency."

Parameters:

sku (string, required): The product SKU to look up

Returns: { found, previous_category, last_classified } or { error }

Tool: flag_for_human_review

Description: "Flag a product for manual review by the compliance team.

Use this when confidence is below 0.7 or the product might violate

marketplace policies."

Parameters:

sku (string, required)

reason (string, required): Why this product needs human review

urgency (string, one of: "low", "medium", "high")

Returns: { success, ticket_id } or { error }

Each tool implementation handles its own errors and returns structured

results the model can reason about, including in failure cases.Two specific details make these definitions effective in a production setting.

First, the descriptions include clear heuristics. Phrases like “Use this when confidence is below 0.7” provide the model with a quantitative trigger. Without these instructions, a model may hesitate to use a tool or use it at inappropriate times.

Second, the return values are structured to handle failure. If a database query fails, the tool should return a structured error object rather than throwing an exception that crashes the execution loop. When the model receives an error object, it can reason about the failure. It might choose to retry, attempt an alternative strategy, or escalate the issue to a human.

If your tools fail silently, the agent may either stall or attempt to hallucinate a successful result to satisfy the prompt.

The agent loop

The observe-reason-act cycle from the building blocks section gets implemented as a for loop with tool dispatch:

import json

def run_agent(task: str, max_iterations: int = 10) -> dict:

messages = [

{"role": "system", "content": SYSTEM_PROMPT},

{"role": "user", "content": task}

]

for i in range(max_iterations):

# The model decides the next step based on the conversation history

response = client.chat.completions.create(

model=MODEL,

messages=messages,

tools=TOOLS,

)

msg = response.choices[0].message

# Execution phase: if the model requests a tool, we run it

if msg.tool_calls:

messages.append(msg)

for tool_call in msg.tool_calls:

fn_name = tool_call.function.name

fn_args = json.loads(tool_call.function.arguments)

# Observation phase: execute the tool and capture the result

result = execute_tool(fn_name, fn_args)

messages.append({

"role": "tool",

"tool_call_id": tool_call.id,

"content": json.dumps(result)

})

# Continue the loop to let the model process the new information

continue

# Exit phase: if no tools are called, the task is complete

return {

"status": "complete",

"response": msg.content,

"iterations": i + 1

}

return {

"status": "max_iterations_reached",

"iterations": max_iterations

}

def execute_tool(name: str, args: dict) -> dict:

tool_map = {

"lookup_product_history": lookup_product_history,

"flag_for_human_review": flag_for_human_review,

}

fn = tool_map.get(name)

if not fn:

return {"error": f"Unknown tool:{name}"}

try:

return fn(**args)

except Exception as e:

return {"error": str(e)}The max_iterations cap, context history management, and single-agent-first principle discussed in the building blocks section are all visible here. The messages list grows with each tool call, and the loop terminates either when the model stops requesting tools or when the iteration limit is reached.

Multi-agent orchestration

When a single-agent loop hits the context dilution threshold discussed earlier, the manager pattern looks like this in code:

from pydantic import BaseModel

from typing import Literal

class DelegationPlan(BaseModel):

"""

Schema for the manager to decide which agent to invoke

and what specific instructions to give them.

"""

specialist: Literal["classifier", "researcher", "reviewer"]

subtask: str

def manager_agent(task: str) -> dict:

# The manager decides which specialist to invoke

plan = client.chat.completions.create(

model=MODEL,

messages=[

{"role": "system", "content": (

"You are a coordinator. Given a task, decide which specialist "

"to delegate to: 'classifier', 'researcher', or 'reviewer'. "

"Return the specialist name and the task to hand off."

)},

{"role": "user", "content": task}

],

response_format=DelegationPlan, # Pydantic model with specialist + subtask

)

delegation = plan.choices[0].message.parsed

return run_specialist(delegation.specialist, delegation.subtask)By isolating domains, each specialist only manages its own tools and instructions. This reduction in state space significantly increases the reliability of the underlying model.

Guardrails

The three interception points from the building blocks section (input, output, tool-level) each get their own implementation.

Input validation:

Input validation occurs before the request is sent to the model. This layer ensures the data adheres to structural constraints and filters for known adversarial patterns, like prompt injection attempts. This is fairly standard software validation.

def validate_input(title: str, description: str) -> tuple[bool, str]:

if not title or len(title) > 500:

return False, "Title must be between 1 and 500 characters"

if not description or len(description) > 10000:

return False, "Description must be between 1 and 10000 characters"

# Basic heuristic check for prompt injection. In production, use a guardrail model

suspicious_patterns = ["ignore previous instructions", "system:", "you are now"]

for pattern in suspicious_patterns:

if pattern.lower() in description.lower():

return False, "Input contains suspicious content"

return True, "ok"Output validation with a critic:

While schema enforcement (via Pydantic) ensures the output matches a defined type, it does not guarantee semantic correctness. A model can return a perfectly formatted JSON object that is still factually or logically incorrect.

Hard-coding semantic validation checks is a losing battle. Attempting to write manual logic to determine if a product description truly matches a specific category leads to a brittle codebase that cannot scale with the nuances of real-world data.

The professional solution is to implement a model-based evaluation pattern, using a secondary agent to act as a critic.

CRITIC_PROMPT = (

"You are a quality control auditor. Review the following product classification. "

"Check for logical consistency. A classification is INVALID if: "

"1. The confidence is high ( >0.9 ) but the category is 'Other'. "

"2. The item is 'Restricted' but the flags do not explain why. "

"3. The subcategory is not a logical subset of the category."

)

def validate_output_with_critic(listing: str, result: Classification) -> tuple[bool, str]:

# We use a smaller, faster model for the critic role to maintain efficiency

response = client.beta.chat.completions.parse(

model="gpt-5-mini",

messages=[

{"role": "system", "content": CRITIC_PROMPT},

{"role": "user", "content": f"Listing:{listing}\\\\\nResult:{result.json()}"}

],

response_format=ValidationResult # Schema: is_valid: bool, reason: str

)

audit = response.choices[0].message.parsed

return audit.is_valid, audit.reasonUsing a smaller model for the critic keeps cost and latency low. The critic can also double as a natural language audit trail, which is valuable in regulated domains.

Tool-level safety:

Tool-level safety enforces the principle of least privilege. It ensures the agent cannot execute unauthorized actions, regardless of the instructions received from the model or an external user.

This is where you enforce things like rate limits, approval requirements for destructive actions, and scope restrictions. A product classifier shouldn’t be able to delete products, even if someone manages to inject that instruction.

ALLOWED_TOOLS = {"lookup_product_history", "flag_for_human_review"}

def execute_tool_safe(name: str, args: dict) -> dict:

if name not in ALLOWED_TOOLS:

return {"error": f"Tool '{name}' is not permitted for this agent"}

# Additional per-tool checks

if name == "flag_for_human_review":

if args.get("urgency") == "high":

log.warning(f"High-urgency review flag:{args.get('reason')}")

return execute_tool(name, args)In an imperative system, these guardrails are mandatory. Without them, the agent is vulnerable to prompt injection, where a clever input can override your system instructions. By implementing these checks in code, you move the security boundary from the probabilistic world of the LLM to the deterministic world of your application logic.

A product classifier, for instance, should have no mechanical path to deleting records or accessing unauthorized databases, even if the model is explicitly instructed to do so by a malicious input.

The production gap

At this stage, you have a functional agent. It manages model selection, tool execution, and validation logic. However, there is a significant distance between a functional script and a production-grade service. When measured against the six properties of a production agent, several structural deficits remain.

- Versioning and rollbacks: Because your prompt is a hardcoded string, it is tied to the lifecycle of your application code. You cannot roll back a behavioral change without reverting a git commit and redeploying the entire service. Comparing the performance of two different prompt versions requires building a custom harness.

- Systematic evaluation: A script that works on hand-tested examples is not verified for production. A reliable pipeline requires running hundreds of test cases against specific prompt versions to catch regressions. Without this, you are effectively testing in production.

- Traceability and logging: When a failure occurs, you need to see the full execution trace. This includes the specific system prompt that was active, the tool call sequence, and the intermediate reasoning steps. Capturing this data requires building structured logging for every decision point in the loop.

- Architectural flexibility: Hardcoding a single model provider creates a dependency that is difficult to break. Implementing fallbacks, dynamic routing, or cost-based model swapping becomes a separate, complex workstream rather than a configuration change.

- Deployment velocity: If the people who understand the domain, like the product managers or subject matter experts, want to iterate on the agent behavior, they are currently blocked. Every minor adjustment requires an engineering ticket.

The code required to build the infrastructure around an agent (testing, versioning, observability, and deployment) is often orders of magnitude more complex than the agent itself.

Orchestration frameworks

Frameworks aim to standardize the boilerplate of agent development. They provide abstractions for model providers, tool calling conventions, and state management. The value proposition is that you can focus on the business logic while the framework handles the underlying plumbing.

Each framework takes a different design approach:

- LangChain: The largest collection of integrations and the most extensive set of pre-built connectors. It offers high-level abstractions for almost every part of the LLM lifecycle. It also introduces significant complexity through its multi-layered architecture.

- LlamaIndex: Optimized for data-intensive tasks. It provides sophisticated primitives for indexing, retrieval, and RAG (Retrieval-Augmented Generation).

- CrewAI: Specifically designed for multi-agent coordination. It simplifies the process of defining specialists and managing their interactions.

- PydanticAI: A more recent, type-safe approach. It prioritizes developer experience by staying closer to native Python patterns and direct API calls.

- Haystack: An enterprise-oriented framework focused on production-grade RAG and search pipelines. It provides retrievers, document stores, and modular pipeline components, with a longer history in NLP than the newer agent-first frameworks.

Abstractions always have trade-offs

While frameworks accelerate the initial build, they introduce a layer of indirection that can complicate production operations. When an agent fails, you are often forced to debug through several layers of third-party code.

This leads to a common failure mode: the “framework tarpit.” Teams adopt a framework to save time, but eventually spend more time fighting the framework’s opinions and limitations than they would have spent writing the direct orchestration logic.

Frameworks tend to be most effective in two situations:

- You need specific pre-built connectors, like LlamaIndex's document loaders or database integrations.

- Your team is new to LLM APIs and benefits from the structure a framework provides.

Direct implementation is generally preferable when you need:

- Rigor: You need to understand and control every failure mode at the API level without hidden middleware.

- Simplicity: The agent’s logic is straightforward enough that a framework adds more weight than value.

- Extra flexibility: The framework’s built-in assumptions about memory or prompt formatting conflict with your specific performance or security needs.

If you’re beginning a new project and prefer to start with code, the most pragmatic path is to start with direct API calls. This ensures you understand how the components interact. If you later encounter a level of complexity that justifies a framework, you will be making that choice based on technical requirements rather than a search for a shortcut.

For many agents that follow standard patterns, the managed-platform approach discussed below often provides a faster route to both prototype and production.

When code-driven is the right call

The decision to build a code-driven agent should be based on the novelty and complexity of the task. If your requirements align with established patterns (such as extraction, classification, or standard RAG) the overhead of imperative orchestration is rarely justified. However, two specific scenarios benefit from the flexibility of code.

Integration with non-standard systems

Code-driven agents are essential when you require fine-grained control over interactions with proprietary or legacy systems. If your agent must navigate complex authentication flows, coordinate with non-standard protocols, or manage state across fragmented internal APIs that no platform could reasonably anticipate, manual orchestration is usually the only way to get reliability.

Novel architectures and “greenfield” experiments

If you are developing a use case that does not yet have an established design pattern, the flexibility of code is worth the infrastructure cost. Platforms excel at optimizing known workflows, but code allows you to experiment with unique reasoning loops, bespoke memory structures, or custom feedback mechanisms that have not yet been standardized.

Evaluating engineering allocation

To determine if your current approach is sustainable, audit where your team spends its time. In most professional engineering environments, effort should be directed toward refining the agent’s actual behavior and domain expertise.

If most of your resources are diverted toward building deployment pipelines, maintaining testing harnesses, or managing prompts, you are no longer building an agent; you are building an AI operations platform. Unless that platform is your core product, this is likely an inefficient allocation of engineering capital. The goal is to redirect that effort away from the infrastructure and toward the part of the system that creates competitive value: the agent’s logic.

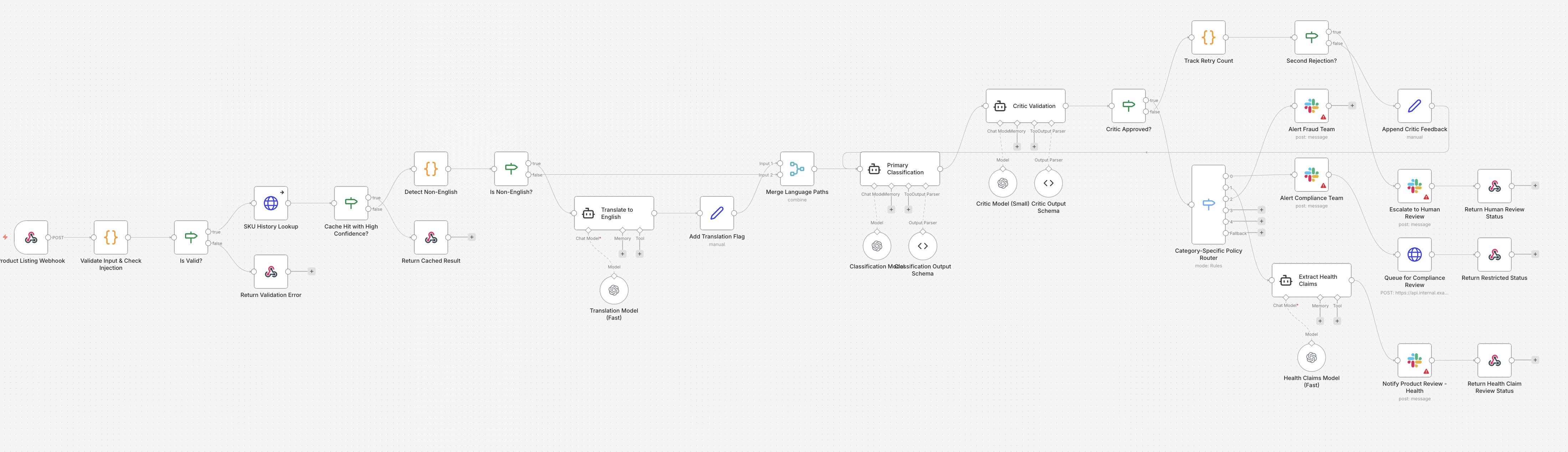

B. Visual workflow (graph-based)

In a visual workflow, the primary abstraction is the Directed Acyclic Graph or DAG. Instead of defining logic through imperative control flow in a Python or TypeScript file, you arrange functional nodes on a canvas and draw edges to dictate the data path. Each node serves as a discrete unit of work: an API trigger, a prompt template, an LLM call, or a conditional router.

Tools like n8n, LangGraph and Zapier characterize this approach. The core value proposition is the ability to see the mental model of the system at a glance without parsing a codebase.

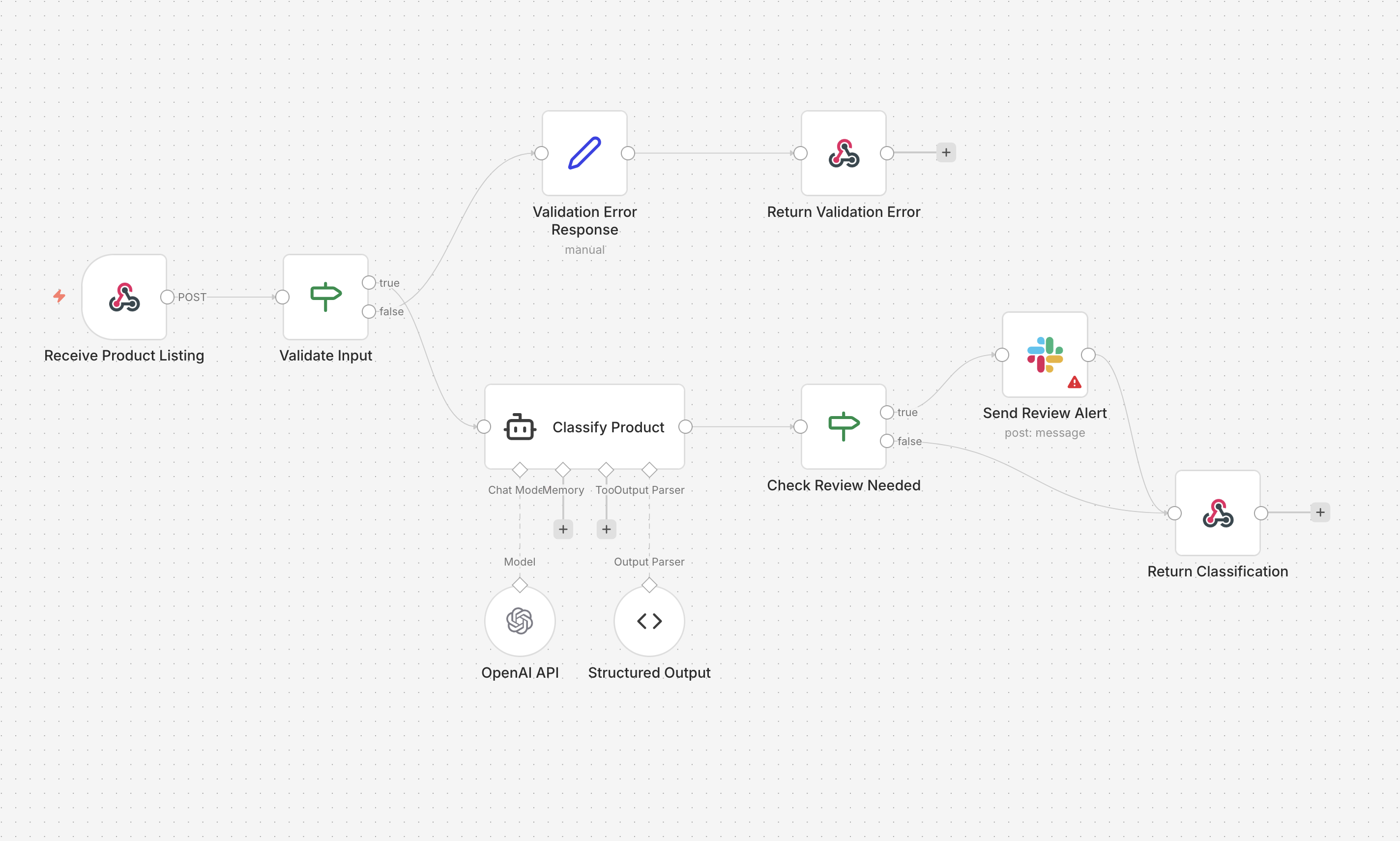

When we map the product listing classifier to a visual workflow, the logic becomes self-documenting. Here's what the same agent looks like built in n8n:

- Ingestion: A Webhook node receives the raw product listing via POST.

- Input Validation: An IF node checks that required fields are present and within expected bounds. If validation fails, the flow branches to an error response. If it passes, it continues.

- Classification: An AI Agent node, backed by OpenAI and a structured output parser, classifies the listing into a category, subcategory, confidence score, and content flags.

- Review Router: A second IF node checks whether the item needs human review (restricted item or low confidence). If it does, the flow branches to send a Slack alert before returning the result. If it doesn't, the classification is returned directly.

The whole thing is eight nodes. You can physically trace the fork where a standard listing passes through cleanly while a flagged one triggers a Slack notification on its way out. A technical Product Manager can audit this logic in seconds, making it a living spec that bridges the gap between engineering and policy teams.

That’s the real strength of visual workflow tools.

If your agent fits this shape, visual tools are a strong choice. The rest of this section covers how to build production-quality agents with them, where they excel, and where you’ll need to work around their limitations.

Model independence

Visual tools offer an advantage in per-agent model granularity. In n8n, this is managed via a connected LLM node from the AI Agent node. In Zapier, it is a model selector inside an Agent step.

You configure an LLM node by clicking into it and selecting a provider and model from a dropdown menu, along with parameters like temperature. In the product listing classifier above, a single OpenAI model handles the classification. The graph shows you that OpenAI is the provider, but the specific model version is a setting inside the node, not something you can read from the zoomed-out canvas. You'd need to click into it to know whether it's running GPT-5.4 or GPT-5-mini.

Because each AI Agent node manages its own model independently, you could split the workflow into two stages with different models: a fast, cost-effective model like Gemini 3 Flash for initial category sorting, and a high-reasoning model like GPT-5.4 or Claude Opus 4.6 for policy violation checks. The provider is visible at a glance; the exact model and its parameters are one click deeper.

The primary downside of this convenience is evaluation debt. While swapping a model takes only two clicks, visual tools rarely provide the native infrastructure to detect, say, a 4 percent drop in classification accuracy. This gap shows up in three ways:

- Misleading spot-checks: Running a few sample inputs through the canvas is not a substitute for a statistically significant test.

- Eval gap: Without an external evaluation suite, you are flying blind with every model update.

- Regression risk: A model that is better at general reasoning may perform worse on your specific edge cases for restricted items.

In a production setting, the ease of swapping models in a GUI must be balanced by a rigorous, externalized testing harness. If you cannot measure the impact of a model change across your entire golden set of data, you have traded reliability for a minor gain in developer speed.

Tools and connectors

Connectors are the primary utility of visual workflow tools. Pre-built nodes handle the boilerplate of REST APIs, Postgres queries, and webhook ingestion without requiring manual implementation of authentication or retry logic.

Zapier supports more than 8,000 integrations, while n8n offers over 500. For a product listing classifier, this would simplify the ingestion of data from sources like Shopify and the subsequent export of results to a compliance dashboard or a database.

Custom logic implementation

When you encounter logic that does not fit a pre-built connector, you must use a dedicated code node. n8n supports JavaScript and Python in these blocks, while Zapier offers a more restricted environment.

These nodes are not full-fledged execution environments. They are sandboxed script blocks with significant operational constraints:

- Execution timeouts: Most platforms enforce strict limits, often between 10 and 30 seconds. This can be a bottleneck for complex data transformations or multi-step validation logic.

- Dependency management: You are restricted to the libraries pre-installed by the platform. You cannot easily add niche packages required for specific data processing tasks.

- Lack of persistence: These environments usually lack a persistent filesystem and have limited memory, making them unsuitable for heavy data manipulation.

If your classifier requires non-standard library support or handles large payloads, you will eventually hit the ceiling of what these embedded scripts can provide. At that point, the visual clarity of the graph is undermined by the complexity hidden inside fragmented snippets of code.

Context fragmentation and instruction management

In visual tools, system prompts are encapsulated within individual LLM nodes. For a basic implementation, this is straightforward: you configure the Prompt or System Message field within a single node. The architecture is transparent and easy to audit.

As the product listing classifier grows in complexity, however, this approach introduces significant cognitive load. A production-grade agent will need to account for marketplace-specific regulations, vintage item edge cases, and distinct protocols for restricted categories. In a code-driven or managed-platform architecture, this logic resides in a centralized configuration or source file that can be read and versioned as a single unit.

In a visual workflow, these instructions inevitably become fragmented. The primary classification prompt lives in the initial LLM node. Rules for restricted items are buried in a separate node on a downstream branch. Logic for handling specific edge cases might be split between a router configuration and a specialized review node.

The discovery problem

This fragmentation complicates the process of understanding the agent’s total knowledge base. A technical lead attempting to verify the system’s compliance with new policy updates cannot perform a simple global search or read a single document. Instead, they must open every LLM node in the graph, extract the prompt text, and reconstruct the agent’s global state.

This decentralized approach to instruction management creates several operational risks:

- Inconsistent logic: A change to a policy rule might be updated in the primary classifier node but overlooked in a secondary validation node, leading to divergent behavior within the same workflow.

- Onboarding friction: New agent maintainers must perform a manual discovery process across the canvas to understand what the agent knows, rather than reviewing a centralized behavioral specification.

- Auditability: Verifying the exact context provided to the model during a specific execution requires tracing the path through multiple nodes, each with its own local instruction set, making it difficult to pinpoint where a reasoning failure originated.

While visual tools make the data path clear, they often obscure the instructional intent that governs that path.

Orchestration patterns and the complexity explosion

Orchestration is the primary function of a visual builder and the area where its structural constraints are most visible. While these tools excel at simple pipelines, they often struggle with the non-linear logic required for professional-grade agents.

Data flow

Visual tools are optimized for sequential execution. A request enters, passes through a series of discrete nodes, and exits as a processed result. The product listing classifier begins as this type of linear flow: an ingestion node followed by a classification node and a final output. This is the ideal use case for a GUI, as the visual representation is functionally identical to the mental model of the task.

Conditional branching (implemented via Switch or Path nodes) remains effective for low-complexity routing. If the classifier needs to fork logic for three broad categories, the canvas remains readable. However, as requirements grow to include a dozen specific marketplace policies, the canvas begins to experience sprawl. Tracing a specific execution path through a web of overlapping edges requires constant zooming and panning, which makes it challenging to reason about the system as a whole.

Iteration

Iteration is a fundamental friction point for directed acyclic graph (DAG) interfaces. In an imperative script, a retry loop or an iterative refinement pattern is a few lines of code.

In a visual canvas, implementing a “critic” pattern (where the agent refines its classification based on feedback) requires wiring an output back to a preceding input. This creates a visual cycle that breaks the linearity of the graph.

This introduces several operational challenges:

- State visibility: It is difficult to determine which iteration of a loop you are currently inspecting in a debugger.

- Termination logic: Ensuring a loop terminates correctly is often more complex in a GUI than in code, where

breakconditions are explicit and easily unit-tested. - State accumulation: Managing how data changes across multiple passes, such as appending new reasoning steps to a history, often requires manual variable management that is prone to error.

Multi-agent coordination

Multi-agent patterns in visual tools generally manifest in two ways: nested workflows (where a node triggers a sub-process) or monolithic graphs partitioned into functional zones.