Make vs n8n: Complete Comparison for Workflow Automation

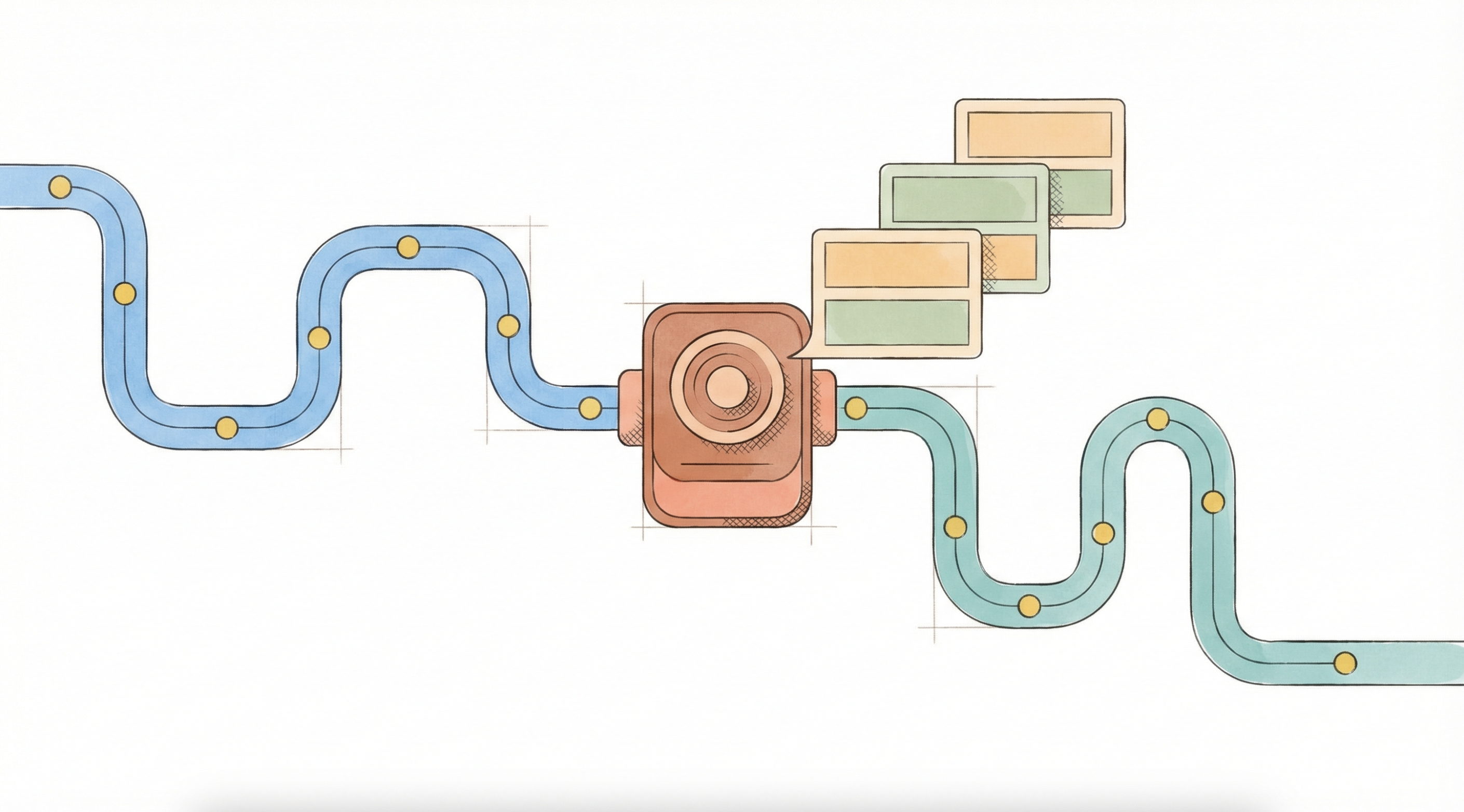

Make and n8n take different approaches to workflow automation. Make offers a visual interface with 3,000+ pre-built modules so you can connect SaaS tools with minimal setup. n8n offers open-source flexibility with self-hosting options and deeper customization for teams that need more control over their infrastructure. This comparison breaks down interface usability, hosting, integrations, and flexibility so you can evaluate both on the factors that matter to your team.

One gap neither platform addresses: AI reasoning. Make and n8n excel at moving data between systems, but neither is built to handle the inference, classification, and judgment calls that production AI workloads require.

Side-by-Side Feature Comparison

Here's how Make and n8n stack up on the factors that matter most:

| Feature | Make | n8n |
| --- | --- | --- |
| Ease of use | Drag-and-drop editor with 3,000+ templates | Visual builder with JSON fields and optional code; steeper learning curve |
| Deployment | Cloud-only: sign up and start building, no servers to manage | Cloud-hosted or self-hosted; self-hosting gives you data control but requires server management |
| Integrations | 3,000+ ready-made modules connect to the SaaS tools you already use | Hundreds of built-in connectors, plus community and custom nodes that work with any API |
| Flexibility | Good fit for standard business flows; hits limits when you need custom logic | Built-in JavaScript, advanced branching, and AI Agent nodes handle complex, evolving processes |
| Support | Docs, videos, and active community forums | Open-source community covers most questions but assumes technical knowledge |

Both platforms require technical expertise to manage at scale. Make's visual interface feels more approachable at first, but complex workflows will still need dedicated automation specialists. n8n's flexibility comes with even steeper technical requirements, so you'll want technical resources on hand from the start.

Make: Visual Automation for Connected SaaS Stacks

Make is built around a drag-and-drop canvas with 3,000+ pre-built modules. If your team's priority is connecting existing SaaS tools without writing code, Make's setup time is genuinely short. That said, configuring data transformations between steps and managing complex flows still requires someone with workflow expertise.

Ease of use

The interface is approachable by design: guided prompts walk you through OAuth connections, error messages identify which module stalled, and an extensive template library means you're rarely starting from scratch. Teams new to workflow automation will find the learning curve manageable compared to most alternatives.

Deployment

Make runs exclusively in its managed cloud. There are no servers to provision, patch, or monitor, and updates happen without any intervention from your team. If your legal or compliance requirements dictate that customer data stays on private infrastructure, Make's only workaround is an on-premise agent available on its Enterprise plan.

Integrations

The 3,000+ module library covers Google Workspace, Shopify, and hundreds of niche SaaS platforms. Multi-step flows, such as routing a Shopify sale into an Airtable record and then triggering a notification, are assembled by chaining modules together, which makes common SaaS-to-SaaS connections fast to configure.
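Make configures this mapping in each module's field panel rather than in code, but the transformation it performs between steps amounts to something like the Python sketch below. The Shopify-style input fields (`id`, `email`, `total_price`, `line_items`) and the Airtable column names are illustrative assumptions; match them to your own store and base.

```python
from typing import Any


def shopify_order_to_airtable(order: dict[str, Any]) -> dict[str, Any]:
    """Map a Shopify-style order payload to an Airtable-style record body.

    Field names on both sides are illustrative assumptions, not a fixed schema.
    """
    line_items = order.get("line_items", [])
    return {
        "fields": {
            "Order ID": str(order["id"]),
            "Customer Email": order.get("email", ""),
            # Shopify sends money amounts as strings; convert for a numeric column.
            "Total": float(order.get("total_price", "0")),
            # Sum quantities across every line item in the order.
            "Item Count": sum(item.get("quantity", 0) for item in line_items),
        }
    }


order = {
    "id": 1001,
    "email": "buyer@example.com",
    "total_price": "59.90",
    "line_items": [{"quantity": 2}, {"quantity": 1}],
}
print(shopify_order_to_airtable(order))
```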

Flexibility

Make fits teams whose workflows map cleanly to available modules. When complexity grows, the platform's constraints become apparent: heavy data transformations burn through the operation-based quota quickly, real scripting requires an Enterprise plan, and workflows with significant branching logic will need dedicated automation specialists to build and maintain.
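To see why transformation-heavy scenarios consume quota, here is a rough estimate under a simplified, assumed counting model: one operation for the trigger on each run, plus one operation per module for each bundle that passes through. Make's actual billing rules may differ, so treat the numbers as illustrative and check its current pricing documentation.

```python
def estimate_operations(runs_per_month: int, bundles_per_run: int,
                        modules_per_bundle: int, trigger_ops_per_run: int = 1) -> int:
    """Rough monthly operation count under a simplified, assumed model:
    the trigger costs one operation per run, and every module costs one
    operation for each bundle that flows through it."""
    per_run = trigger_ops_per_run + bundles_per_run * modules_per_bundle
    return runs_per_month * per_run


# Hypothetical scenario: polls hourly (~720 runs/month) and pulls 20 rows per run.
lean = estimate_operations(720, 20, 3)   # map, filter, write
heavy = estimate_operations(720, 20, 8)  # the same flow plus five transformation steps
print(lean, heavy)  # → 43920 115920
```

Under this model, adding five transformation steps more than doubles monthly usage, because every extra module is multiplied by every bundle on every run.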

n8n: Flexible Automation for Teams with Technical Resources

n8n uses a visual builder, but surfaces considerably more technical control than Make. Open any node and JSON fields appear alongside optional JavaScript inputs. Working with API keys, data pinning, and function nodes is expected rather than exceptional, which means n8n rewards teams with engineering resources and creates friction for those without them.

Ease of use

The learning curve is steeper than Make's by design. Teams running n8n as a production tool typically need at least one dedicated automation specialist. If you're evaluating n8n expecting a minimal-setup experience, expect that assumption to break down quickly once workflows move beyond simple linear triggers.

Deployment

n8n's deployment options span a spectrum. The cloud-hosted option handles infrastructure for you. The self-hosted open-source edition gives you full data sovereignty, which matters for teams with strict compliance requirements, but it also makes you fully responsible for server provisioning, backups, updates, and security. If your legal team needs customer data to stay within a private network, n8n's self-hosting path is a real option rather than an Enterprise-tier add-on.

Integrations

n8n's integration catalog is smaller, at roughly 1,000 connectors including community contributions, but it trades catalog size for flexibility. Any service with an API can be connected through the HTTP Request node, and teams can build custom nodes when no off-the-shelf connector exists. That capability assumes comfort with manual API configuration and JSON handling.

Flexibility

This is where n8n separates itself. Custom JavaScript runs directly inside a Code Node, so data transformation, internal API calls, and complex conditional logic stay within the canvas without requiring a separate service. Debugging and error handling go further as well: data pinning supports step-by-step testing, and workflows can retry failed steps automatically or hand off to fallback workflows when upstream APIs fail. For workflows that need to evolve with business requirements rather than stay fixed, that depth matters.
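In n8n, retries and fallbacks are configured through node and workflow settings rather than written by hand. The Python sketch below shows only the general pattern behind them, exponential backoff followed by a fallback path; the function name and parameters are hypothetical and do not reflect n8n's implementation.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(primary: Callable[[], T], fallback: Callable[[], T],
                    max_tries: int = 3, base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    """Try `primary` up to `max_tries` times with exponential backoff,
    then hand off to `fallback` if every attempt raised."""
    for attempt in range(max_tries):
        try:
            return primary()
        except Exception:
            # Wait before the next attempt; the delay doubles each time (0.5s, then 1s, ...).
            if attempt < max_tries - 1:
                sleep(base_delay * 2 ** attempt)
    return fallback()


# Hypothetical upstream API that fails twice before succeeding.
attempts = {"n": 0}


def flaky_upstream() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise ConnectionError("upstream unavailable")
    return "primary result"


result = call_with_retry(flaky_upstream, lambda: "fallback result", sleep=lambda s: None)
print(result)  # → primary result
```

Injecting `sleep` keeps the sketch testable without real delays; in practice you would keep the default `time.sleep`.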


How Logic Adds Intelligence to Make and n8n

Make and n8n are workflow orchestrators. They handle triggers, data routing, and system connections reliably, but neither is built to run AI agents with the infrastructure production requires. The demo-to-production gap is real: typed APIs, auto-generated tests, version control, multi-model routing, and execution logging don't come included.

That's the gap Logic fills. Logic is an AI agent infrastructure platform. You write a natural language spec describing what an agent should do, and Logic generates a production-ready API in approximately 45 seconds, complete with typed endpoints, auto-generated tests, version control, and multi-model routing. When requirements change, you update the spec; the agent updates instantly without touching the API contract or redeploying integrations.

Where this matters in a Make or n8n workflow: your automation handles data movement, and your Logic agent handles AI reasoning on that data. Content moderation, document classification, fraud signal evaluation, and compliance checks are judgment calls that can't be resolved with conditional branches. Your n8n or Make workflow calls the Logic API, receives a structured output, and continues orchestrating from there.
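Continuing from a structured output usually means branching on its fields. The sketch below assumes a hypothetical response schema with `decision`, `confidence`, and `reasons` fields and a hypothetical 0.8 review threshold; an actual agent's output depends on the spec you write.

```python
import json


def route_decision(response_body: str, review_threshold: float = 0.8) -> str:
    """Pick the next workflow branch from a structured agent response.

    Assumes a hypothetical schema:
    {"decision": "approve" | "reject", "confidence": float, "reasons": [str]}
    """
    result = json.loads(response_body)
    # Low-confidence judgments go to a person instead of an automatic branch.
    if result["confidence"] < review_threshold:
        return "manual_review"
    return "approve_branch" if result["decision"] == "approve" else "reject_branch"


body = '{"decision": "reject", "confidence": 0.93, "reasons": ["mismatched billing address"]}'
print(route_decision(body))  # → reject_branch
```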

DroneSense demonstrates what this looks like in production. Using Logic for AI document processing, their team reduced processing time from 30+ minutes to 2 minutes per document, with no custom ML pipelines required, and refocused the ops team on mission-critical work. The AI reasoning layer was the bottleneck; offloading it to Logic resolved it.

The alternative is building that AI layer yourself: prompt management, testing infrastructure, versioning, model selection, error handling, and structured output parsing. Most engineering teams can build it; the question is whether they should own it or offload it to a platform built specifically for LLM infrastructure, the same way AWS handles compute or Stripe handles payments. Logic takes on that infrastructure layer while teams retain full control over their business logic.

Choosing the Right Workflow Platform

Make offers more integrations and community resources. n8n offers better deployment flexibility and customization depth. The choice comes down to your technical capacity and what your workflows actually require.

If your priority is connecting existing SaaS tools quickly without writing code, Make gets you there faster. The module library and template catalog accelerate initial setup, and the managed cloud removes infrastructure overhead entirely.

If data sovereignty, self-hosting, or complex branching logic matter more than speed of setup, n8n fits better, though you'll need engineering resources to build and maintain workflows at scale.

If you're undecided, spinning up a proof-of-concept in both platforms will make the difference concrete: Make's speed is apparent immediately, and so is n8n's depth.

Both platforms handle system connections and data routing well. Where they share a ceiling is AI reasoning: conditional logic can approximate simple rules, but classification, extraction, and judgment calls that require inference need a different layer entirely. If your workflows include AI decision-making, the infrastructure underneath that reasoning matters as much as the orchestration around it.

Logic handles the agent layer: write a spec, get a production API with typed schemas, auto-generated tests, and version control. Your Make or n8n workflow calls Logic for the reasoning, receives structured outputs, and continues from there. Engineering teams that evaluate building this infrastructure themselves often find the timeline longer than scoped once prompt management, versioning, and error handling enter the picture.

Engineering teams building AI agents without managed infrastructure face the same compounding overhead: testing, versioning, error handling, and model routing before a single job runs in production. Logic handles that layer so your team ships agents instead of infrastructure. Start building with Logic.

Frequently Asked Questions

Can Make or n8n workflows call a Logic agent directly?

Yes. Logic agents are deployed as standard REST APIs with typed endpoints. Any workflow platform that can make an HTTP request, including Make and n8n, can call a Logic agent and pass the structured response downstream. No custom connector is required, so teams can add Logic to an existing workflow without rebuilding the orchestration layer around it.

What kinds of decisions is Logic suited for in an automated workflow?

Logic handles AI reasoning tasks that conditional branches can't: content moderation, document classification, compliance evaluation, fraud signal scoring, and any judgment call that requires weighing multiple inputs against nuanced criteria. If the decision requires inference rather than a lookup, Logic is the right layer. Workflow tools handle the data routing before and after; Logic handles the evaluation in between.

How does Logic handle changes to agent behavior without breaking existing workflows?

When a Logic agent's spec is updated, the platform validates the change against the existing API contract and runs regression tests before deploying. The typed API schema stays intact, so Make or n8n integrations don't break when the underlying agent logic evolves. Teams can update business rules, evaluation criteria, or processing instructions without touching the integration layer.
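From the workflow's side, a stable contract means downstream steps can check only the fields they depend on. The sketch below is a consumer-side check, not Logic's own validation, for a hypothetical moderation agent that returns `label` and `confidence`; fields added to the response later are ignored rather than treated as errors.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ModerationResult:
    """Hypothetical output contract for a content-moderation agent."""
    label: str
    confidence: float


def parse_result(body: str) -> ModerationResult:
    """Extract only the fields this workflow needs; fail loudly if they drift."""
    data = json.loads(body)
    label, confidence = data.get("label"), data.get("confidence")
    if not isinstance(label, str):
        raise ValueError("contract violation: 'label' must be a string")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(confidence, (int, float)) or isinstance(confidence, bool):
        raise ValueError("contract violation: 'confidence' must be a number")
    return ModerationResult(label=label, confidence=float(confidence))


# An extra field added on the agent side does not break this consumer.
print(parse_result('{"label": "safe", "confidence": 0.97, "added_later": true}'))
```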

What does it take to integrate Logic with an existing n8n or Make workflow?

Logic deploys as a REST API, so integration follows the same pattern as any external API call in either platform. Teams configure an HTTP Request node in n8n or an HTTP module in Make, pass in the typed inputs the agent expects, and handle the structured JSON response downstream. Most teams have a working proof-of-concept within a single session, and Logic's typed endpoints mean the response format is predictable from the first call.
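The request an HTTP node sends can be sketched with Python's standard library. The endpoint URL, bearer-token header, and input field names below are hypothetical placeholders, not Logic's documented API; use the URL, authentication scheme, and schema your agent actually exposes.

```python
import json
import urllib.request

# Hypothetical endpoint and key: substitute the values your agent provides.
AGENT_URL = "https://api.example.com/v1/agents/document-classifier/run"
API_KEY = "YOUR_API_KEY"


def build_agent_request(document_text: str, source: str) -> urllib.request.Request:
    """Build the JSON POST an HTTP node would send (input field names are hypothetical)."""
    payload = {"document_text": document_text, "source": source}
    return urllib.request.Request(
        AGENT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {API_KEY}"},
        method="POST",
    )


def call_agent(req: urllib.request.Request) -> dict:
    """Send the request and decode the structured JSON response."""
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


req = build_agent_request("Invoice 4411 from Acme Corp", "email")
print(req.get_method(), req.full_url)
# call_agent(req) would perform the actual network call against a live endpoint.
```

Keeping request construction separate from the network call makes the payload easy to inspect before pointing it at a live endpoint.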

How is Logic different from adding an AI node directly inside n8n or Make?

Native AI nodes in n8n and Make provide raw model access. Logic provides the production infrastructure around the model: auto-generated tests, version control, execution logging, multi-model routing, and structured outputs. The difference lies in everything that surrounds the model call, which raw API access leaves to the engineering team to build, including test coverage, versioning, and visibility into every job that runs.