Zapier vs Make: Which Automation Fits Non-Technical Teams?

Your operations team needs to stop doing things manually, and the two names that surface in every automation conversation are Zapier and Make. Both promise to connect your apps and eliminate repetitive work without engineering involvement. For a seed-to-Series A startup where engineering bandwidth is already stretched thin, picking the right platform means your ops team gets unblocked without creating a new project for your engineers.

The decision looks straightforward until you dig into the details. Zapier and Make differ in learning curve, pricing mechanics, and how they handle complexity as workflows grow. Logic also enters the picture earlier than most ops teams expect: Zapier and Make handle routing and triggers, while Logic handles the AI reasoning layer when workflows need judgment, extraction, scoring, or moderation. Knowing where each platform excels, and where both stop, is the difference between automation that scales and automation that stalls at the first edge case.

What Zapier Does Well

Zapier is the fastest path from “we should automate this” to a working workflow. With 8,000+ connected applications, it covers nearly any SaaS tool your team already uses. The interface is deliberately simple: pick a trigger, add actions, and the workflow runs.

For non-technical teams, that simplicity is the product. Zapier’s AI Copilot lets users describe what they want in plain English (“summarize new leads in Slack every morning”), and the platform drafts the workflow, connects accounts, maps data, and tests steps automatically. Zapier’s ChatGPT/OpenAI integrations do require connecting an API key during setup. Initial automations typically go live within a few hours.

Pricing mechanics matter here. Zapier charges per task, where a task is any action after the trigger. Filters, Paths, and Formatter steps are free. A five-step workflow running 200 times per month consumes 1,000 tasks if every step is an action, but far fewer if most steps are filters or conditional rules. The Professional plan starts at $19.99/month billed annually ($29.99 monthly) for 750 tasks; the Team plan at $69/month billed annually adds SSO and shared folders.
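The task arithmetic above is easy to sanity-check in a few lines. This is a minimal Python sketch. It assumes, as described above, that the trigger is never billed, that each action step counts as one task, and that Filter, Paths, and Formatter steps are free. The step labels and the `zapier_tasks` helper are hypothetical and are not part of any Zapier API. The sketch also ignores one effect: a filter that stops a run also prevents the later actions from executing, which would lower the count further.

```python
# Hypothetical step labels; in this sketch only "action" steps are billed as tasks.
FREE_STEPS = {"filter", "paths", "formatter"}


def zapier_tasks(steps: list[str], runs_per_month: int) -> int:
    """Estimate monthly tasks: billable action steps per run times runs.

    The trigger is not listed in `steps` because it never counts as a task.
    """
    billable = 0
    for step in steps:
        if step in FREE_STEPS:
            continue  # Filter, Paths, and Formatter steps are free
        if step != "action":
            raise ValueError(f"unknown step type: {step}")
        billable += 1
    return billable * runs_per_month


all_actions = zapier_tasks(["action"] * 5, 200)
filter_heavy = zapier_tasks(["filter", "formatter", "action", "paths", "action"], 200)
print(all_actions, filter_heavy)  # 1000 400
```

Both workflows have five steps, but the filter-heavy one bills only its two actions. That difference is why condition-heavy Zaps stay cheap.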

What Zapier leaves to you:

Complex branching rules have a real learning curve, and workflows with many conditional paths require splitting into separate Zaps

Lacks native loop or iteration support; processing each item in a list requires workarounds

Error handling is limited to basic notifications, with little control over retries

Per-task pricing can spike unexpectedly; Zapier automatically continues running workflows beyond plan limits by switching to pay-per-task billing at a premium over your base task rate
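The following rough Python estimator shows how overage billing can make a bill spike. The `overage_multiplier` parameter is an assumption that stands in for the premium described above, so check Zapier’s current pricing for the actual rate. The plan figures are the Professional plan numbers quoted earlier.

```python
def monthly_zapier_bill(tasks_used: int, plan_price: float, plan_tasks: int,
                        overage_multiplier: float) -> float:
    """Rough monthly cost when pay-per-task billing covers usage past the plan limit.

    overage_multiplier is an ASSUMPTION: how much more each extra task costs than
    the plan's effective per-task rate.
    """
    base_rate = plan_price / plan_tasks            # effective per-task rate inside the plan
    extra_tasks = max(0, tasks_used - plan_tasks)  # tasks billed pay-per-task
    return plan_price + extra_tasks * base_rate * overage_multiplier


# Professional plan figures from above; 1.25 is a hypothetical multiplier.
print(round(monthly_zapier_bill(1_000, 19.99, 750, 1.25), 2))  # 28.32
```

Every task past the limit is billed at the premium rate, so a volume spike shows up entirely in the overage term rather than in the base plan price.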

Zapier handles the 80% of business automation that looks like “when X happens in App A, do Y in App B.” CRM sync, notification routing, lead capture, and approval workflows are its strengths because they are straightforward multi-step workflows that it executes reliably. Zapier works best when the team values speed, simplicity, and broad app coverage over workflow depth.

What Make Does Well

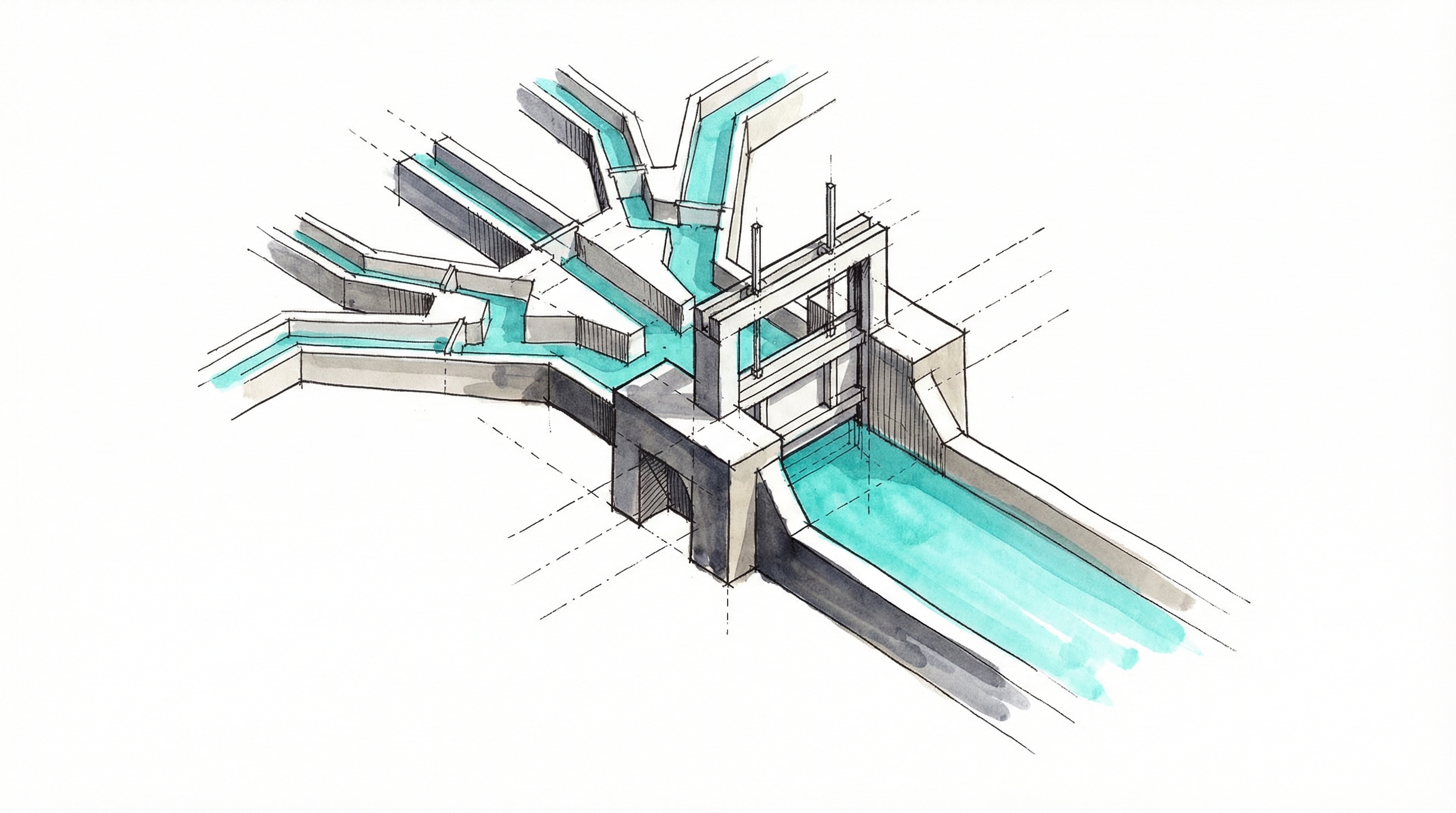

Make trades Zapier’s simplicity for power. Its visual scenario builder lays out the entire workflow on a canvas, with built-in routers, error handlers, and flow-control tools all visible at once. Zapier’s more linear editor does not offer this view directly. For teams whose workflows require complex conditional rules, Make handles them in a single scenario where Zapier would need multiple Zaps.

The integration library is smaller (3,000+ apps versus Zapier’s 8,000+), but Make compensates with deeper control over data transformation and workflow architecture. If your ops team needs to iterate over arrays, handle errors with custom logic, or branch workflows into multiple parallel paths, Make supports all three out of the box.
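Make expresses iteration, routing, and error handling as modules on the canvas rather than as code, but the control flow is easy to mirror. The sketch below is a hypothetical Python model of an iterator → router → error handler → aggregator chain. `run_scenario` and the order-line fields are invented for illustration and are not Make’s API.

```python
from collections import defaultdict


def run_scenario(order_lines: list[dict]) -> dict[str, list[str]]:
    """Model one scenario run: iterate an array, route each item, aggregate results."""
    routed: dict[str, list[str]] = defaultdict(list)
    for line in order_lines:  # iterator: each array element becomes its own bundle
        try:
            sku, amount = line["sku"], line["amount"]
            if amount < 0:
                raise ValueError("negative amount")
            # router: send each bundle down one of two filtered paths
            route = "priority" if amount >= 1000 else "standard"
            routed[route].append(sku)
        except (KeyError, ValueError):
            # error handler: park bad items instead of failing the whole run
            routed["errors"].append(line.get("sku", "<missing sku>"))
    return dict(routed)  # aggregator: collapse bundles back into one result


print(run_scenario([{"sku": "A", "amount": 1500}, {"sku": "B", "amount": 20}]))
# {'priority': ['A'], 'standard': ['B']}
```

In Make, the same shape would typically be built from an Iterator, a Router with filtered routes, an error handler route, and an Array aggregator.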

Pricing is Make’s strongest advantage. Make charges per credit, and each module execution (trigger, router, transformation, or action) generally counts as one credit. The Core plan starts at $9/month billed annually ($10.59 monthly) for 10,000 credits. For context: a six-step customer onboarding workflow (form → CRM → email → project tool → Slack → spreadsheet) running 200 times per month costs 1,200 credits on Make, well within the Core plan. The same workflow on Zapier would consume about 1,000 tasks. The trigger is free, but the five actions are not, so usage already exceeds the Professional plan’s 750 included tasks. Zapier’s free Filters, Paths, and Formatter steps can narrow that gap, but only in workflows that actually use them.

The per-unit cost difference is significant. Zapier Professional works out to roughly $0.027 per task ($19.99 for 750 tasks, annual billing). Make Core runs at approximately $0.0009 per credit ($9 for 10,000 credits), a roughly 30x difference in raw unit economics. The gap narrows because Make counts more steps per workflow, including the trigger, but Make remains substantially cheaper at comparable volumes.
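The figures in the two paragraphs above can be reproduced directly. This Python sketch hard-codes the plan prices and allowances quoted in this article (annual billing) and the six-step onboarding example. Verify those figures against current pricing pages before relying on the output.

```python
# Plan figures as quoted in this article (annual billing); they may change.
ZAPIER_PRO_PRICE, ZAPIER_PRO_TASKS = 19.99, 750
MAKE_CORE_PRICE, MAKE_CORE_CREDITS = 9.00, 10_000

RUNS_PER_MONTH = 200
ZAPIER_TASKS_PER_RUN = 5  # the form trigger is free; five downstream actions are tasks
MAKE_CREDITS_PER_RUN = 6  # every module, including the trigger, counts as a credit

zapier_monthly = ZAPIER_TASKS_PER_RUN * RUNS_PER_MONTH  # 1,000 tasks
make_monthly = MAKE_CREDITS_PER_RUN * RUNS_PER_MONTH    # 1,200 credits

zapier_unit = ZAPIER_PRO_PRICE / ZAPIER_PRO_TASKS  # about $0.0267 per task
make_unit = MAKE_CORE_PRICE / MAKE_CORE_CREDITS    # $0.0009 per credit

print(f"Zapier: {zapier_monthly} tasks vs {ZAPIER_PRO_TASKS} included")
print(f"Make:   {make_monthly} credits vs {MAKE_CORE_CREDITS} included")
print(f"Raw unit gap: {zapier_unit / make_unit:.1f}x")  # 29.6x
per_run_gap = (ZAPIER_TASKS_PER_RUN * zapier_unit) / (MAKE_CREDITS_PER_RUN * make_unit)
print(f"Per-run gap:  {per_run_gap:.1f}x")  # 24.7x
```

The per-run line shows the narrowing described above. Once Make’s trigger is counted as a credit, the gap for this workflow shrinks from roughly 30x to roughly 25x.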

What Make leaves to you:

The learning curve is real: reviews and comparisons frequently describe Make as harder than Zapier for beginners and non-technical users

Error messages are cryptic; a “422 response with payload” tells a non-technical user little that is actionable

Lacks live chat support, which is especially painful during onboarding when errors are most frequent

Credits deplete faster than expected when scenarios use high-frequency polling; on paid plans, a scenario polling every 5 minutes consumes credits on every check, even when there is no new data to process
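The polling cost is simple arithmetic. This is a minimal Python sketch that assumes each scheduled check costs one credit even when it finds nothing, as described above. It also assumes a 30-day month.

```python
def polling_credits(interval_minutes: int, days: int = 30) -> int:
    """Credits spent on trigger checks alone, assuming one credit per poll."""
    checks_per_day = (24 * 60) // interval_minutes
    return checks_per_day * days


print(polling_credits(5))   # 8640  (288 checks/day)
print(polling_credits(15))  # 2880  (96 checks/day)
```

At a 5-minute interval, idle checks alone use 8,640 of the Core plan’s 10,000 credits. Where an app offers an instant (webhook-based) trigger, using it instead of polling avoids those idle checks.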

Make delivers better economics and more workflow power, but the time cost is real. At least one team member usually needs to invest in learning the platform before it pays off. Make is the better fit when workflows are more complex, volume is higher, and one owner can absorb the setup and debugging overhead.

{{ LOGIC_WORKFLOW: enrich-and-score-leads | Enrich and score sales leads }}

Head-to-Head: The Dimensions That Matter

Ease of use: Zapier wins clearly. Reviews generally describe Zapier as faster for simple workflows and Make as having a steeper learning curve for non-technical users. Zapier’s interface assumes little technical background; Make’s assumes familiarity with data structures and iterators.

Cost at scale: Make wins decisively. A startup growing from 1,000 to 50,000 automation runs over a year generally spends substantially less on Make than on Zapier, though the exact total depends on workflow complexity and how each platform counts credits or tasks. The gap widens as volume grows.

Complex workflows: Make wins. Routers, loops, and native error handling give Make the edge for any workflow with more than basic if-then rules.

Integration breadth: Zapier wins with 8,000+ apps versus Make’s 3,000+. If your stack includes niche tools, check Zapier’s library first.

Support and debugging: Zapier wins for non-technical users. Auto Replay, Task History, and live chat on the Team plan provide a safety net. Make’s visual flow debugging is powerful but assumes the team can interpret technical error messages.

Free tier: Make offers 1,000 credits per month, with up to two active scenarios and a 15-minute minimum polling interval. Zapier offers 100 tasks per month and is limited to two-step workflows. Make’s free tier is more useful for validation: it provides 10x the monthly allowance and includes multi-step workflows.

Where Both Platforms Hit the Same Wall

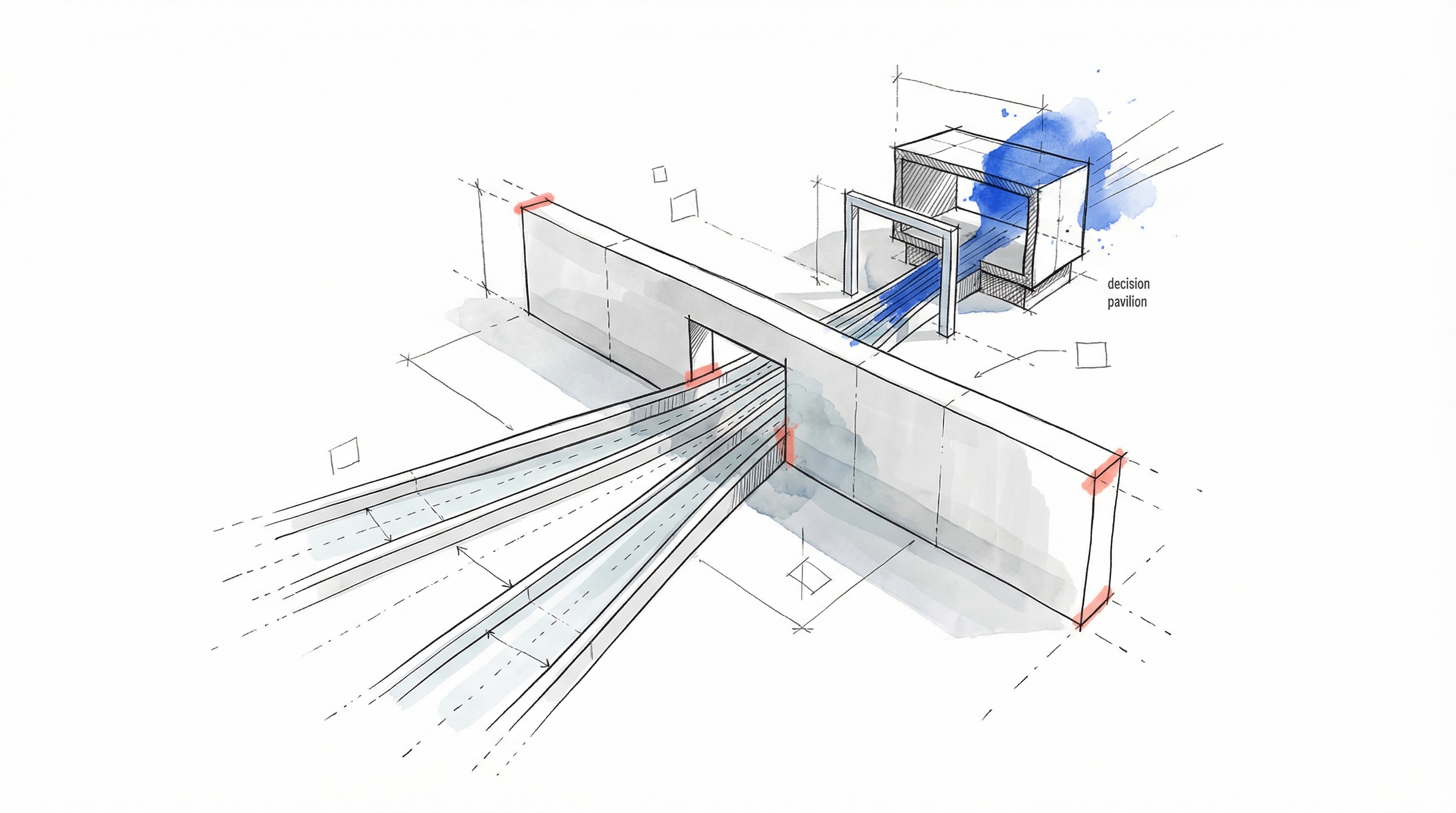

Zapier and Make both started as deterministic data routing tools: when this happens, do that. They work well for CRM sync, notification routing, lead management, and approval workflows. But the moment an automation needs agent infrastructure, both platforms share the same limitations.

Neither platform provides prompt versioning, typed schema enforcement across a production workflow, or testing infrastructure for LLM-powered workflows. Model APIs do offer structured outputs, but that covers only one piece. Logic’s value is handling typed schemas, validation, testing, version control, and execution logging together as one production infrastructure stack.
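The sketch below shows what typed schema enforcement means in practice: it validates a model’s JSON reply against a typed schema before anything downstream uses it. The `LeadScore` fields, the allowed tiers, and the 0 to 100 score range are hypothetical. The sketch uses only Python’s standard library and does not represent Logic’s API.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class LeadScore:
    """Hypothetical output schema for a lead-scoring step."""
    score: int
    tier: str
    reason: str


ALLOWED_TIERS = {"hot", "warm", "cold"}


def parse_lead_score(raw: str) -> LeadScore:
    """Parse and validate a model reply; raise ValueError instead of passing bad data on."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = {"score", "tier", "reason"} - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    score, tier, reason = data["score"], data["tier"], data["reason"]
    # bool is a subclass of int, so reject it explicitly
    if not isinstance(score, int) or isinstance(score, bool) or not 0 <= score <= 100:
        raise ValueError(f"score must be an int from 0 to 100, got {score!r}")
    if not isinstance(tier, str) or tier not in ALLOWED_TIERS:
        raise ValueError(f"tier must be one of {sorted(ALLOWED_TIERS)}, got {tier!r}")
    if not isinstance(reason, str) or not reason.strip():
        raise ValueError("reason must be a non-empty string")
    return LeadScore(score=score, tier=tier, reason=reason)
```

Rejecting a malformed reply at this boundary is what lets a downstream Zapier or Make step trust the fields it maps.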

The pattern is consistent: both platforms bolt AI features onto architectures designed for moving data between apps, not for running AI agents.

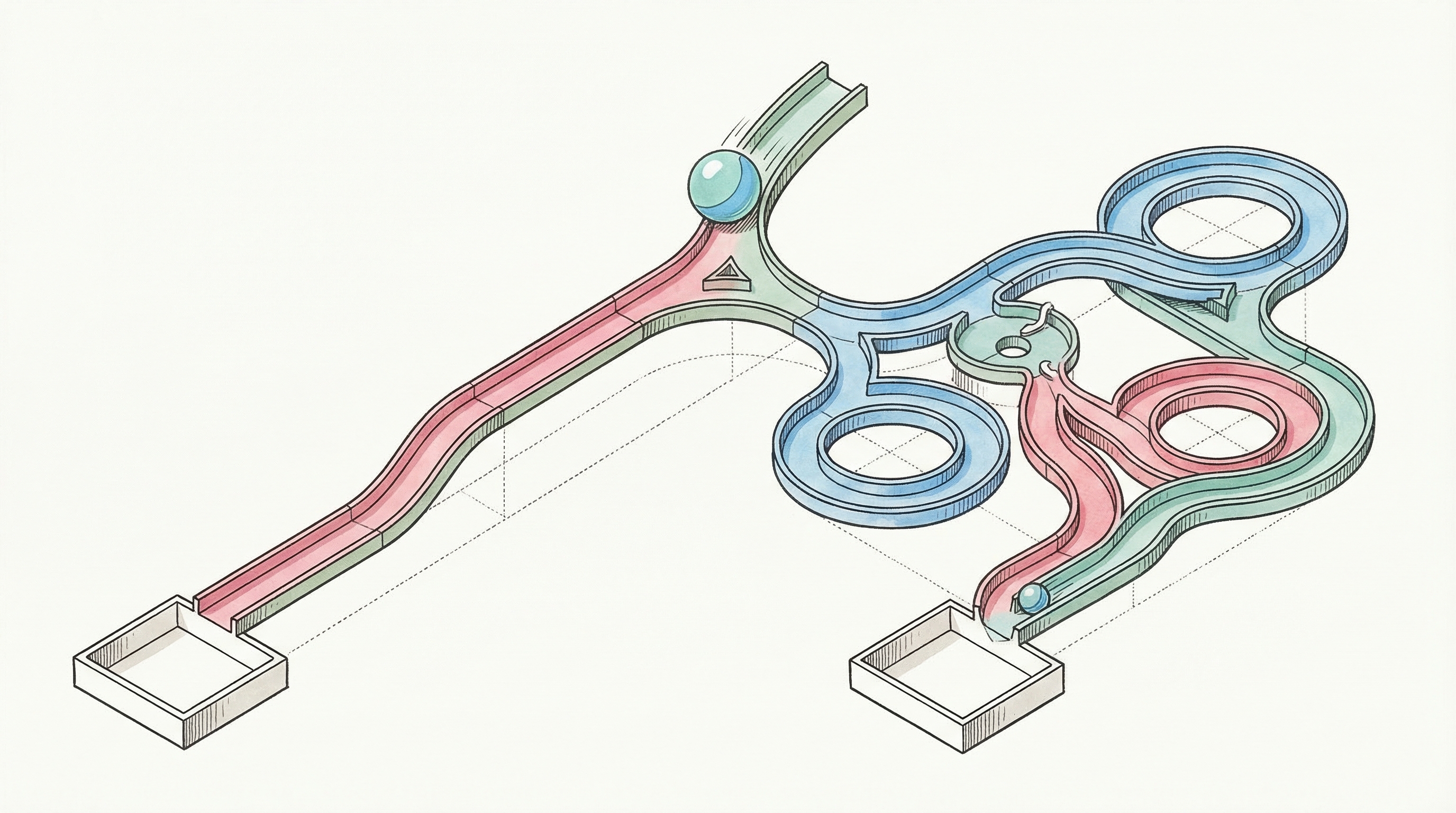

Logic fills a different role entirely: it complements Zapier and Make rather than replacing them. Zapier and Make handle data routing and triggers; Logic handles the reasoning. Teams write a natural language spec describing what an agent should do, and Logic generates a production-ready agent. When an agent is created, 25+ processes execute automatically: research, validation, schema generation, test creation, and model routing optimization across GPT, Claude, and Gemini.

The practical workflow is straightforward: Zapier or Make triggers a process (a new form submission, incoming email, or updated CRM record), then calls a Logic agent via API for the decision-making step (classification, extraction, scoring, or content moderation), and routes the result back through the workflow. Data movement stays in the workflow tool; intelligence lives in the agent.
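Here is a minimal sketch of that hand-off. It assumes the workflow tool forwards each new record to a small handler, for example through a webhook step. `call_agent` stands in for the HTTP request to a Logic agent. This article does not describe the real endpoint, authentication, or payload shape, so they are left out, and the queue names are hypothetical.

```python
from typing import Callable

# Stand-in for the HTTP call to a Logic agent; the real request shape is not shown here.
AgentCall = Callable[[dict], dict]

# Hypothetical mapping from agent categories to downstream queues.
DESTINATIONS = {"billing": "billing_queue", "bug": "engineering_queue"}


def handle_record(record: dict, call_agent: AgentCall) -> dict:
    """Route one incoming record: the agent decides, the workflow tool moves the data."""
    try:
        decision = call_agent({"text": record["body"]})  # the reasoning step
        category = decision["category"]
    except Exception as exc:  # timeouts, bad payloads, missing fields
        return {"id": record.get("id"), "route": "manual_review", "error": str(exc)}
    # Unknown categories go to a general queue instead of being dropped.
    return {"id": record.get("id"), "route": DESTINATIONS.get(category, "general_queue")}


# Usage with a stub in place of the real agent call:
def stub_agent(payload: dict) -> dict:
    return {"category": "billing" if "invoice" in payload["text"].lower() else "other"}


print(handle_record({"id": 1, "body": "Invoice total is wrong"}, stub_agent))
# {'id': 1, 'route': 'billing_queue'}
```

Injecting `call_agent` keeps the handler testable with a stub, and the `manual_review` route gives failures a place to land.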

DroneSense uses this kind of separation for document processing: what took 30+ minutes per document manually now takes 2 minutes through a Logic agent, a 93% reduction, with no custom ML pipelines or model training required. The ops team refocused on mission-critical work once manual document review was off their plate.

The real alternative to Logic for these AI-powered steps is custom development, which means building testing harnesses, versioning systems, error handling, and model routing. What starts as a short project often stretches well beyond initial estimates. Logic handles that infrastructure so engineers stay focused on core product work. Engineers also keep control: domain experts can update rules safely through versioned, testable changes, within guardrails that engineering defines.

Decision Framework: When to Use Each

Choose Zapier when:

Your team is entirely non-technical with no capacity for a learning investment

Speed to first automation matters more than long-term cost optimization

Workflows are straightforward: trigger, filter, act, notify

You need apps from Zapier’s 8,000+ library that Make does not support

Support responsiveness matters; the Team plan includes priority support

Workflows are filter-heavy, since Zapier’s free conditional steps keep task counts low

Choose Make when:

At least one team member can make an upfront learning investment

Workflows require complex branching, loops, or conditional rules

Automation volume is growing, and cost economics are becoming decisive

Budget optimization is a priority; Make’s per-credit economics are substantially cheaper than Zapier’s per-task pricing at comparable volumes

You need a more generous free tier for validation before committing spend

Run both when:

Your automation needs span both categories. Use Make for complex, high-volume workflows and Zapier for quick integrations with apps that only Zapier supports. During early-stage validation, some teams run both on free tiers to keep costs low.

Add Logic agents when:

Workflows require AI-powered decisions: classification, extraction, scoring, moderation

LLM outputs need typed schemas and validation, not prompt-and-hope

You need version control, auto-generated tests, and execution logging for AI steps

The AI component must be production-reliable

The Recommendation

For most seed-to-Series A startups with non-technical operations teams, start with Zapier if your team has zero technical comfort, or Make if even one person can handle the learning curve. Make’s economics are better at scale, but Zapier’s simplicity reduces the risk that automations stall because nobody can debug them.

Neither platform is the right place to build AI-powered workflows. When automation needs graduate from “move data between apps” to “make intelligent decisions about that data,” add a purpose-built tool for the reasoning layer. Logic is a production AI platform that helps engineering teams ship AI applications without building LLM infrastructure, including the typed APIs, auto-generated tests, version control, and multi-model routing that LLM-powered decision steps require. The platform processes 250,000+ jobs monthly with 99.999% uptime over the last 90 days. Start building with Logic and let Zapier or Make handle the routing while Logic handles the reasoning.

Frequently Asked Questions

How should teams govern automation access as usage expands across departments?

Teams usually need lightweight governance before automation spreads widely. A practical model includes one workflow owner per process, shared naming conventions, restricted edit permissions, and a monthly review of failed runs or unused scenarios. For Logic-powered steps, engineering should retain approval over schema changes while allowing domain experts to update business rules. That structure reduces accidental breakage and keeps automations understandable as more teams rely on them.

What metrics should teams monitor after launch to judge automation health?

Teams should track failure rate, time to resolution, monthly task or credit consumption, and the percentage of runs requiring manual correction. For Logic-based decision steps, additional useful metrics include schema validation pass rate, test results across versions, and execution logs tied to edge cases. Monitoring these signals reveals whether a workflow is saving time consistently or quietly shifting work into debugging, exception handling, and cleanup.
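Here is a minimal Python sketch of those launch metrics. It assumes you can export run history with a failure flag, a manual-correction flag, units consumed, and resolution time. The `Run` record shape is hypothetical and is not either platform’s export format.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Run:
    """One exported workflow run (hypothetical shape, not a platform export format)."""
    failed: bool
    manually_corrected: bool
    units_used: int                             # tasks (Zapier) or credits (Make)
    minutes_to_resolve: Optional[float] = None  # set only for failures that were fixed


def health_report(runs: list[Run]) -> dict:
    """Summarize failure rate, manual-correction rate, consumption, and resolution time."""
    if not runs:
        raise ValueError("no runs to report on")
    total = len(runs)
    resolved = [r.minutes_to_resolve for r in runs
                if r.failed and r.minutes_to_resolve is not None]
    return {
        "failure_rate": sum(r.failed for r in runs) / total,
        "manual_correction_rate": sum(r.manually_corrected for r in runs) / total,
        "units_used": sum(r.units_used for r in runs),
        "median_minutes_to_resolve": median(resolved) if resolved else None,
    }
```

If the manual-correction rate climbs while the failure rate stays flat, that is a sign the workflow is shifting work into cleanup rather than saving time.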

How should teams plan fallback handling when an automation or AI step fails?

Teams should define fallback paths before launch, not after a failed run affects customers or internal operations. Common safeguards include retry rules, Slack or email alerts, a manual review queue, and a clear owner for each workflow. For Logic steps, typed outputs and execution logging make escalation easier because downstream systems can detect invalid responses quickly. The goal is predictable recovery when something breaks, not perfect uptime.
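Here is a minimal sketch of a retry rule combined with a fallback path. It retries with exponential backoff and, after the final attempt, fires an alert and queues the item for manual review. `alert` and `enqueue_manual` are hypothetical hooks; in practice they might post to Slack or write to a review queue. Passing `sleep` as a parameter is a design choice that lets tests run without real delays.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_fallback(
    step: Callable[[], T],
    *,
    alert: Callable[[str], None],
    enqueue_manual: Callable[[str], None],
    retries: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[T]:
    """Retry a step with exponential backoff; after the last failure, alert and queue it."""
    if retries < 1:
        raise ValueError("retries must be at least 1")
    for attempt in range(1, retries + 1):
        try:
            return step()
        except Exception as exc:  # any failure triggers a retry or the fallback path
            if attempt == retries:
                message = f"step failed after {retries} attempts: {exc}"
                alert(message)           # e.g. a Slack or email notification hook
                enqueue_manual(message)  # hand the item to a human review queue
                return None
            sleep(base_delay * 2 ** (attempt - 1))  # waits 1s, 2s, 4s, ... by default
    return None  # unreachable; keeps type checkers satisfied
```

With the defaults, a step that fails twice and then succeeds waits one second and then two seconds, and raises no alert.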

How can teams evaluate whether a workflow is a good candidate for Logic first?

A strong first candidate usually has repetitive judgment, messy inputs, and a clear success definition. Examples include document extraction, lead scoring, moderation, or email classification where deterministic routing alone is not enough. Teams should favor a step with measurable outcomes, limited downstream risk, and existing historical examples for validation. That combination makes it easier to compare manual performance against Logic and decide whether broader rollout is justified.
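Here is a minimal sketch of that comparison. It assumes you have historical examples with an agreed-correct label, the decision a person made at the time, and the agent’s output on the same input. The function names are hypothetical, and plain accuracy is only one possible yardstick.

```python
def accuracy(predictions: list[str], labels: list[str]) -> float:
    """Share of predictions that match the agreed-correct labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("need equal-length, non-empty lists")
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def compare_on_history(labels: list[str], manual: list[str], agent: list[str]) -> dict:
    """Score manual and agent decisions on the same historical examples."""
    return {
        "manual_accuracy": accuracy(manual, labels),
        "agent_accuracy": accuracy(agent, labels),
        # Indices where the agent was wrong: the edge cases worth reviewing first.
        "agent_misses": [i for i, (a, y) in enumerate(zip(agent, labels)) if a != y],
    }
```

Reviewing the `agent_misses` cases before a broader rollout shows whether the errors fall on low-risk inputs or on the ones that matter.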

What is the best way to document automations so handoffs do not create operational risk?

Teams benefit from simple documentation attached to every workflow: business purpose, trigger source, downstream systems touched, owner, backup owner, failure alerts, and last review date. For Make, screenshots of routers and branch logic help future maintainers understand scenario structure quickly. For Zapier, step-by-step descriptions and task assumptions reduce confusion. If Logic is involved, storing the agent version and expected output schema alongside the workflow makes troubleshooting faster.
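The documentation fields above can double as a lightweight checklist. This Python sketch encodes them in a hypothetical `WorkflowDoc` record and flags any gaps. The 90-day review threshold is an assumption, not a recommendation from either vendor.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Optional

AGENT_FIELDS = ("agent_version", "output_schema")


@dataclass
class WorkflowDoc:
    """Per-workflow documentation; the agent fields apply only if Logic is involved."""
    business_purpose: str
    trigger_source: str
    downstream_systems: list[str]
    owner: str
    backup_owner: str
    failure_alerts: str
    last_reviewed: date
    agent_version: Optional[str] = None
    output_schema: Optional[str] = None


def doc_gaps(doc: WorkflowDoc, uses_logic: bool, today: date,
             max_age_days: int = 90) -> list[str]:
    """List empty required fields, plus a flag if the last review is too old."""
    gaps = [f.name for f in fields(doc)
            if f.name not in AGENT_FIELDS and not getattr(doc, f.name)]
    if uses_logic:
        gaps += [name for name in AGENT_FIELDS if not getattr(doc, name)]
    if (today - doc.last_reviewed).days > max_age_days:  # hypothetical threshold
        gaps.append("review overdue")
    return gaps
```

Running a check like this during the monthly review keeps handoff documentation from silently going stale.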