LangGraph vs LangChain: Choosing the Right AI Workflow

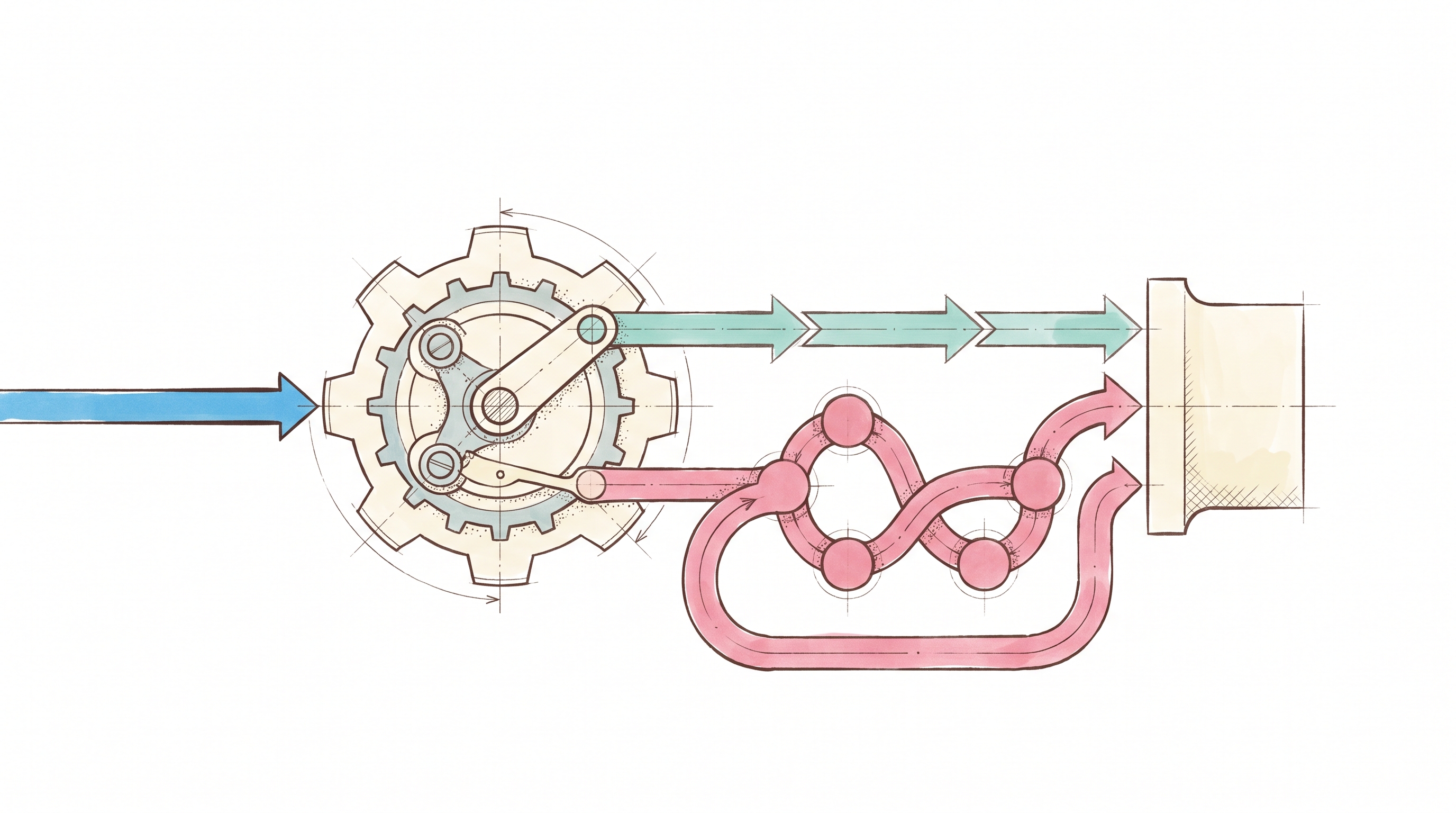

LangChain and LangGraph occupy distinct layers of the same ecosystem. LangChain provides model abstractions and agent construction through its create_agent primitive, while LangGraph adds a graph execution engine that supports cycles for stateful agent workflows. LangChain 1.0 (October 2025) moved the legacy agent APIs (initialize_agent, deprecated since v0.1.0, and AgentExecutor) into the separate langchain-classic package and positioned create_agent, which is built on LangGraph, as the primary path forward. LangGraph is recommended for complex workflows that need fine-grained control; LangChain remains the faster starting point for common patterns. For a startup engineering team evaluating the two, the core question is whether the graph-based orchestration model is worth the infrastructure investment for a given workload.

That infrastructure investment is where most evaluations fall short. Both tools handle orchestration primitives, but neither ships with the production stack teams actually need: testing harnesses, version control, deployment pipelines, multi-model routing, or API contract protection around production integrations. LangChain's blog has described deployment infrastructure for LangGraph applications as complex and highlighted LangSmith Deployment (formerly LangGraph Platform) as a solution for those operational challenges. For a startup with three to five engineers, the evaluation extends beyond which orchestration model fits the workflow. It also covers whether the team should own the infrastructure surrounding it or offload that layer to a managed platform.

LangChain: Model Abstraction and Linear Orchestration

LangChain is an open-source framework for building agents and LLM-powered applications, with reusable components and integrations. The sections below cover where it delivers value, where it creates friction, and when it fits.

What It Does Well

LangChain's init_chat_model() function provides a unified interface across OpenAI, Anthropic, Google, and other providers, so teams can switch models by changing a model identifier rather than rewriting application code. Tool binding via llm.bind_tools(tools) attaches tool definitions to the model, but when the model is called directly (outside create_agent), teams still implement the tool-call/response loop themselves. Structured output supports both provider-native and tool-calling strategies.
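To make that manual loop concrete, here is a minimal plain-Python sketch of what an application runs around a tool-bound model. It does not use LangChain: `fake_model` is a hypothetical stand-in for a model with tools bound, and the dict-based message shapes are simplified assumptions rather than LangChain's message classes.

```python
from typing import Any, Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[..., Any]] = {
    "add": lambda a, b: a + b,
}

def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a tool-bound chat model (hypothetical, not a LangChain API).

    Requests one tool call on the first turn, then answers using the tool result.
    """
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"role": "assistant", "content": "",
                "tool_calls": [{"id": "call_1", "name": "add", "args": {"a": 2, "b": 3}}]}
    return {"role": "assistant", "content": f"The sum is {tool_results[-1]['content']}",
            "tool_calls": []}

def run_tool_loop(user_input: str, max_turns: int = 5) -> str:
    messages = [{"role": "user", "content": user_input}]
    for _ in range(max_turns):
        reply = fake_model(messages)
        messages.append(reply)
        if not reply["tool_calls"]:
            return reply["content"]  # no tool calls left: this is the final answer
        # Execute each requested tool and feed its result back to the model.
        for call in reply["tool_calls"]:
            result = TOOLS[call["name"]](**call["args"])
            messages.append({"role": "tool", "tool_call_id": call["id"],
                             "content": str(result)})
    raise RuntimeError("tool loop did not terminate within max_turns")

print(run_tool_loop("What is 2 + 3?"))  # The sum is 5
```

The `max_turns` cap guards against a model that keeps requesting tools. Running this loop is much of what create_agent does for you.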

For linear workflows (prompt, LLM call, optional tool call, output), the pipe operator in LCEL (LangChain Expression Language) composes steps sequentially, a pattern that maps to Unix pipes and functional composition. A basic agent takes fewer than 10 lines of code. Teams new to LLM development benefit from the scaffolding and abstractions, and the active community means most common patterns have documented implementations.
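Pipe-style composition can be illustrated with a small plain-Python sketch. The `Step` class is a toy, not LCEL's Runnable (its `invoke` method only mirrors the LCEL naming), and the lambdas are hypothetical stand-ins for a prompt template, a model call, and an output parser.

```python
from typing import Any, Callable

class Step:
    """Toy pipeable step (illustrative only, not LCEL's Runnable)."""

    def __init__(self, fn: Callable[[Any], Any]):
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        # a | b yields a new step that feeds a's output into b.
        return Step(lambda x: other.fn(self.fn(x)))

    def invoke(self, x: Any) -> Any:
        return self.fn(x)

# Hypothetical stages: format a prompt, "call" a model, parse the output.
format_prompt = Step(lambda topic: f"Summarize: {topic}")
fake_llm = Step(lambda prompt: prompt.upper())  # placeholder for a model call
parse_output = Step(lambda text: text.strip())

chain = format_prompt | fake_llm | parse_output
print(chain.invoke("graphs"))  # SUMMARIZE: GRAPHS
```

Each `|` fixes the next step in advance, so data flows strictly left to right. Nothing in this model can route back to an earlier step, which is the limitation LangGraph was built to address.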

What It Leaves to You

LangChain's abstractions become the problem once requirements exceed linear flows. Octomind, a commercial AI testing product, used LangChain in production for over 12 months before removing it entirely, saying that it added code complexity and that its high-level abstractions became limiting.

Microsoft's Industry Solutions Engineering team documented similar friction: LangChain's abstractions made it difficult to adjust default agent prompts, modify tool signatures, or integrate custom data validation. Their assessment: "The heavily abstracted structure of LangChain can make it hard to try new complex ideas, often leading to messy workarounds."

Beyond the abstraction issues, the core LangChain library does not include native version control for agent logic, although the wider LangChain ecosystem offers deployment infrastructure through LangSmith Deployment, testing support, and a unified API across providers. Pre-1.0 releases also introduced breaking changes between minor versions (0.1, 0.2, 0.3), with API deprecations and namespace restructuring that imposed real migration costs on production teams.

When to Use It

LangChain fits when workflows are linear and predictable: prompt formatting, model calls with structured outputs, and simple tool integrations. It works well for rapid prototyping where time-to-demo matters more than production robustness, and for teams that primarily need multi-provider LLM abstraction without complex orchestration.

LangGraph: Stateful Graph-Based Agent Orchestration

LangGraph was built to solve a specific architectural limitation in LangChain's linear model. The sections below break down its architecture, its production gaps, and when the complexity pays off.

What It Does Well

LangChain's chains modeled workflows as directed acyclic graphs, with no mechanism for cycles. The LangChain team acknowledged this directly in its LangGraph launch post: "so far we've lacked a method for easily introducing cycles into these chains." LangGraph provides a graph execution engine built on three primitives: state (a shared, typed schema), nodes (Python functions that read state and return updates to it), and edges (connections that determine execution order; conditional edges call a routing function to choose the next node).
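The three primitives fit in a few lines of plain Python. This toy engine is not LangGraph's StateGraph API, and `State`, `write`, and `review` are hypothetical. As in LangGraph, though, each node returns a partial update that is merged into shared state, and a conditional edge can send execution back to an earlier node, forming a cycle.

```python
from typing import Callable, TypedDict

class State(TypedDict):
    draft: str
    attempts: int

def write(state: State) -> dict:
    # Node: returns a partial update that gets merged into shared state.
    return {"draft": state["draft"] + "!", "attempts": state["attempts"] + 1}

def review(state: State) -> str:
    # Conditional edge: loop back to "write" until the draft passes the check.
    return "done" if state["draft"].count("!") >= 3 else "write"

NODES: dict[str, Callable[[State], dict]] = {"write": write}
EDGES: dict[str, Callable[[State], str]] = {"write": review}

def run(state: State, start: str = "write", max_steps: int = 10) -> State:
    node = start
    for _ in range(max_steps):
        if node == "done":
            return state
        state = {**state, **NODES[node](state)}  # merge the node's update
        node = EDGES[node](state)                # edge decides what runs next
    raise RuntimeError("step limit reached")

print(run({"draft": "hello", "attempts": 0}))  # {'draft': 'hello!!!', 'attempts': 3}
```

The `max_steps` guard matters: once cycles are allowed, a routing function that never reaches `done` would otherwise loop forever. LangGraph applies a similar recursion limit to graph runs.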

This architecture adds capabilities that linear chains cannot express. Agents can retry and self-correct based on output quality. Execution can pause for human approval and resume without restarting. State persists across sessions through a checkpointer system supporting SQLite for development and Postgres for production. Multi-agent architectures compose hierarchically, with parent graphs coordinating child subgraphs.
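Pausing for approval and resuming later comes down to persisting state at the pause point and reloading it on resume. The sketch below is plain Python with a hypothetical in-memory `Checkpointer` that serializes state to JSON, standing in for a SQLite or Postgres checkpointer; LangGraph's own interrupt and checkpointer APIs differ.

```python
import json
from typing import Optional

class Checkpointer:
    """Hypothetical checkpointer: serialized state keyed by thread id."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}  # a database table in a real system

    def save(self, thread_id: str, snapshot: dict) -> None:
        self._store[thread_id] = json.dumps(snapshot)

    def load(self, thread_id: str) -> Optional[dict]:
        raw = self._store.get(thread_id)
        return json.loads(raw) if raw is not None else None

def run_until_approval(cp: Checkpointer, thread_id: str, order_id: str) -> str:
    # Draft an action, then persist and stop instead of executing it.
    state = {"order_id": order_id, "proposed": f"refund order for {order_id}",
             "next": "await_approval"}
    cp.save(thread_id, state)
    return "paused"

def resume(cp: Checkpointer, thread_id: str, approved: bool) -> dict:
    # Reload the checkpoint and continue from where execution stopped.
    state = cp.load(thread_id)
    if state is None or state["next"] != "await_approval":
        raise ValueError("nothing to resume for this thread")
    state["result"] = state["proposed"] if approved else "rejected by reviewer"
    state["next"] = "end"
    cp.save(thread_id, state)
    return state

cp = Checkpointer()
print(run_until_approval(cp, "thread-1", "A-42"))  # paused
final = resume(cp, "thread-1", approved=True)
print(final["result"])  # refund order for A-42
```

With a durable backend in place of the dict, `resume` could run in a different process, or days later, as long as it reads the same store.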

LangGraph's execution model, inspired by Google's Pregel system, processes work in discrete super-steps with message passing along edges. Because edges can return execution to earlier nodes, cycles are a first-class construct. Time-travel debugging lets teams replay execution from any prior state checkpoint, a capability architecturally unavailable in LCEL chains.
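A Pregel-style super-step loop with per-step snapshots can be sketched in plain Python. This illustrates the execution model rather than LangGraph's implementation: the `increment` and `check` nodes are hypothetical, nodes communicate only through messages delivered at the next super-step, and the saved inbox snapshots play the role of checkpoints that a replay can restart from.

```python
from typing import Callable

Inboxes = dict[str, list[int]]
Node = Callable[[list[int]], Inboxes]

def increment(msgs: list[int]) -> Inboxes:
    # Adds 1 to each incoming value and sends it to "check".
    return {"check": [m + 1 for m in msgs]}

def check(msgs: list[int]) -> Inboxes:
    # Sends values below 3 back to "increment" (a cycle); larger values stop.
    return {"increment": [m for m in msgs if m < 3]}

NODES: dict[str, Node] = {"increment": increment, "check": check}

def run(inboxes: Inboxes, history: list[Inboxes]) -> None:
    """Run super-steps until no messages remain, snapshotting inboxes each step."""
    while any(inboxes.values()):
        history.append({k: list(v) for k, v in inboxes.items()})  # checkpoint
        outboxes: Inboxes = {name: [] for name in NODES}
        # Every active node runs against the previous step's messages only.
        for name, msgs in inboxes.items():
            if msgs:
                for target, out in NODES[name](msgs).items():
                    outboxes[target].extend(out)
        # Messages become visible only at the next super-step (the barrier).
        inboxes = outboxes

history: list[Inboxes] = []
run({"increment": [0], "check": []}, history)
print(len(history))  # 6

# Time travel: restart from the checkpoint taken before the third super-step.
replayed: list[Inboxes] = []
run({k: list(v) for k, v in history[2].items()}, replayed)
print(replayed == history[2:])  # True
```

Replaying from a snapshot reproduces the same later steps here because the nodes are deterministic; with real model calls, a replay may diverge from the original run.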

An important point on dependencies: LangGraph does not require LangChain. LangChain components appear in the documentation examples as convenient integrations, but they are optional.

What It Leaves to You

LangGraph's official documentation is explicit about its scope: it is "very low-level, and focused entirely on agent orchestration" and "does not abstract prompts or architecture." The four capabilities it claims are durable execution, human-in-the-loop, persistent memory, and debugging with LangSmith. Everything else is outside stated scope.

The production gaps are significant. LangGraph includes no built-in test runner for graph-level assertions, no unit or integration test harness for tool behavior, and no structured test contract for node inputs and outputs. State schema versioning has no native mechanism; a GitHub issue confirms that LangGraph provides no built-in way to detect incompatible changes in persisted state. Structured node error handling remains an open feature request. Routing between models can be built with conditional edges, the same mechanism behind prebuilt helpers like tools_condition, but LangGraph provides no built-in cost-based routing.
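Without a built-in contract for node inputs and outputs, teams typically write checks like the following themselves. This is a hypothetical plain-Python sketch: `TicketState`, `classify`, and `assert_node_contract` are illustrative names, and the keyword rule stands in for a model call so the check runs deterministically under pytest or plain Python.

```python
from typing import Any, Callable, TypedDict, get_type_hints

class TicketState(TypedDict, total=False):
    text: str
    category: str
    confidence: float

def classify(state: TicketState) -> dict[str, Any]:
    # Hypothetical node under test: a keyword rule standing in for an LLM call.
    is_billing = "invoice" in state["text"].lower()
    return {"category": "billing" if is_billing else "general",
            "confidence": 0.9 if is_billing else 0.5}

def assert_node_contract(node: Callable[[dict], dict], state: dict,
                         schema: type, must_write: set[str]) -> dict:
    """Check that a node writes only declared keys, with the declared types."""
    hints = get_type_hints(schema)
    update = node(state)
    unknown = set(update) - set(hints)
    assert not unknown, f"node wrote undeclared keys: {unknown}"
    missing = must_write - set(update)
    assert not missing, f"node did not write required keys: {missing}"
    for key, value in update.items():
        # isinstance only works for plain class annotations like str or float.
        assert isinstance(value, hints[key]), f"{key}: expected {hints[key].__name__}"
    return update

update = assert_node_contract(classify, {"text": "Invoice #12 is wrong"},
                              TicketState, {"category", "confidence"})
print(update)  # {'category': 'billing', 'confidence': 0.9}
```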

Adding a new agent intent or updating workflow rules often requires restructuring the state schema. The learning curve is steeper than LangChain's; even LangChain's own "Three Years of LangChain" post acknowledged that LangGraph "did have a higher learning curve."
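One way teams cope with these schema changes is to stamp persisted state with a version and upgrade old snapshots on load. A minimal sketch, assuming checkpoints are plain dicts; the field names, `CURRENT_VERSION`, and both migrations are hypothetical.

```python
from typing import Any, Callable

CURRENT_VERSION = 3

def v1_to_v2(state: dict[str, Any]) -> dict[str, Any]:
    # Hypothetical v2 renamed "query" to "user_input".
    state = dict(state)
    state["user_input"] = state.pop("query")
    return state

def v2_to_v3(state: dict[str, Any]) -> dict[str, Any]:
    # Hypothetical v3 added an "intent" field for a newly supported intent.
    return {**state, "intent": state.get("intent", "unknown")}

MIGRATIONS: dict[int, Callable[[dict[str, Any]], dict[str, Any]]] = {
    1: v1_to_v2,
    2: v2_to_v3,
}

def load_state(snapshot: dict[str, Any]) -> dict[str, Any]:
    """Upgrade a persisted snapshot to CURRENT_VERSION, or fail loudly."""
    version = snapshot.get("schema_version", 1)  # unstamped snapshots are v1
    if version > CURRENT_VERSION:
        raise ValueError(f"snapshot v{version} is newer than code v{CURRENT_VERSION}")
    state = {k: v for k, v in snapshot.items() if k != "schema_version"}
    while version < CURRENT_VERSION:
        if version not in MIGRATIONS:
            raise ValueError(f"no migration from v{version}")
        state = MIGRATIONS[version](state)
        version += 1
    return {**state, "schema_version": version}

print(load_state({"query": "reset password"}))
# {'user_input': 'reset password', 'intent': 'unknown', 'schema_version': 3}
```

Refusing snapshots newer than the running code is deliberate: it stops an older deployment from silently misreading state written by a newer one.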

Production deployment without LangSmith means building observability, prompt versioning, and evaluation infrastructure independently.

When to Use It

LangGraph earns its complexity when workflows require cycles where agents must retry or self-correct, conditional routing determined by model decisions, human-in-the-loop approval mid-execution, persistent state that survives process restarts, or multi-agent coordination with defined handoff protocols. If the workflow is a straightforward classify-extract-respond pipeline, LangGraph's graph abstractions add setup overhead without corresponding capability gain.

Logic: Spec-Driven Agents with Production Infrastructure

Logic operates as a production agent infrastructure platform. Teams write natural language specs describing what an agent should do, and Logic transforms those specs into production-ready agents with the infrastructure already included. The sections below cover what ships out of the box, where Logic's scope ends, and when offloading infrastructure makes sense.

What It Does Well

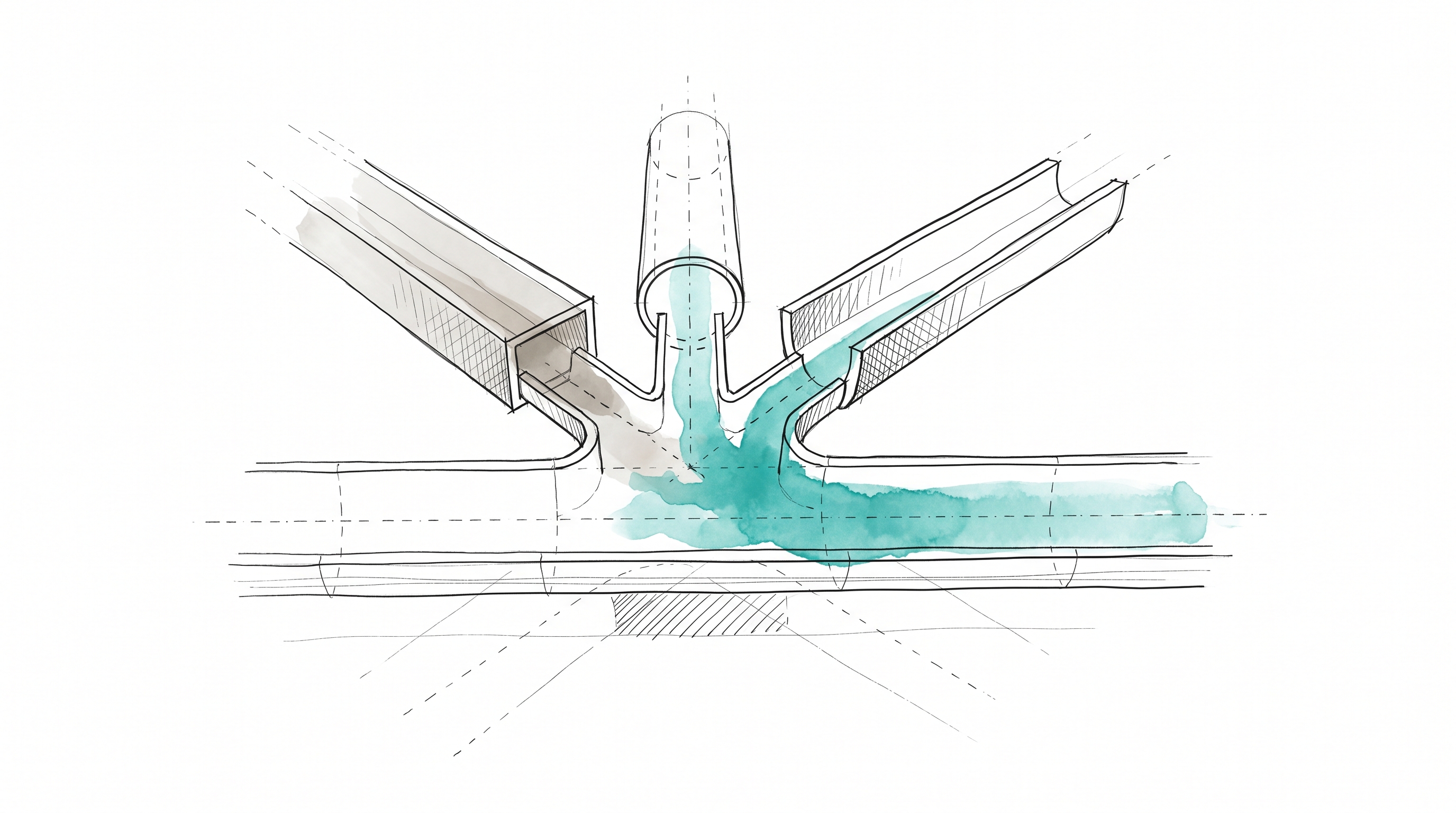

Engineers describe what they want an agent to do; Logic determines how to accomplish it and generates the surrounding infrastructure: typed APIs, auto-generated tests, version control, observability, and model routing. When engineers create an agent, 25+ processes execute automatically, including research, validation, schema generation, test creation, and model routing optimization. The result is production-ready infrastructure in minutes instead of weeks.

The typed API layer auto-generates JSON schemas from the agent spec with strict input/output validation on every request. Spec changes update agent behavior without touching API schemas; integrations stay stable because the contract stays consistent. Schema-breaking changes require explicit engineering approval before taking effect, so domain experts can update business rules without accidentally breaking downstream systems.
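Gating schema-breaking changes can be illustrated with a small compatibility check over output schemas. This is a hypothetical sketch over a tiny JSON Schema subset (`properties`, `type`, `required`), not Logic's implementation: removing a field, changing its type, or dropping it from `required` is flagged, while adding a field is not.

```python
from typing import Any

def breaking_changes(old: dict[str, Any], new: dict[str, Any]) -> list[str]:
    """Flag output-schema changes that could break existing consumers."""
    problems: list[str] = []
    old_props = old.get("properties", {})
    new_props = new.get("properties", {})
    for name, spec in old_props.items():
        if name not in new_props:
            problems.append(f"removed field '{name}'")
        elif new_props[name].get("type") != spec.get("type"):
            problems.append(
                f"type of '{name}' changed {spec.get('type')} -> {new_props[name].get('type')}"
            )
    # A field consumers could rely on became optional (checked only if it still exists).
    for name in sorted(set(old.get("required", [])) - set(new.get("required", []))):
        if name in new_props:
            problems.append(f"'{name}' is no longer guaranteed (dropped from required)")
    return problems

old = {"type": "object",
       "properties": {"category": {"type": "string"}, "score": {"type": "number"}},
       "required": ["category", "score"]}
new = {"type": "object",
       "properties": {"category": {"type": "string"}, "score": {"type": "string"},
                      "reason": {"type": "string"}},  # added field: not breaking
       "required": ["category"]}

for problem in breaking_changes(old, new):
    print(problem)
```

A deployment gate could then block any change that produces a non-empty list until an engineer approves it.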

Every agent generates a test suite automatically: 10 scenario-based synthetic tests covering typical use cases and edge cases, including multi-dimensional scenarios with conflicting inputs, ambiguous contexts, and boundary conditions. Each test receives a Pass, Fail, or Uncertain status. Teams can add custom test cases or promote any historical execution into a permanent test case with one click.

Version control tracks every spec change with immutable versions, one-click rollback, and diff comparison. Intelligent model orchestration routes requests across OpenAI, Anthropic, Google, and Perplexity based on task type, complexity, and cost, so engineers don't have to select models manually; teams that prefer a fixed choice can still pin an agent to a specific model.
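Immutable versions with rollback and diff are a general pattern, sketched minimally in plain Python below. This is not Logic's internals: `SpecHistory` and `SpecVersion` are hypothetical, and rollback is modeled as re-publishing an old spec as a new version so history is never rewritten.

```python
import difflib
from dataclasses import dataclass

@dataclass(frozen=True)
class SpecVersion:
    number: int
    text: str

class SpecHistory:
    """Append-only spec history; rollback adds a new version, never edits old ones."""

    def __init__(self) -> None:
        self._versions: list[SpecVersion] = []

    def publish(self, text: str) -> SpecVersion:
        version = SpecVersion(len(self._versions) + 1, text)
        self._versions.append(version)
        return version

    def get(self, number: int) -> SpecVersion:
        if not 1 <= number <= len(self._versions):
            raise ValueError(f"no version {number}")
        return self._versions[number - 1]

    @property
    def current(self) -> SpecVersion:
        return self._versions[-1]

    def rollback(self, number: int) -> SpecVersion:
        # The old text becomes the newest version; earlier versions stay intact.
        return self.publish(self.get(number).text)

    def diff(self, a: int, b: int) -> str:
        old, new = self.get(a).text, self.get(b).text
        return "\n".join(difflib.unified_diff(
            old.splitlines(), new.splitlines(),
            fromfile=f"v{a}", tofile=f"v{b}", lineterm=""))

history = SpecHistory()
history.publish("Classify tickets.\nFlag refunds over $500.")
history.publish("Classify tickets.\nFlag refunds over $100.")
print(history.diff(1, 2))
history.rollback(1)
print(history.current.number)  # 3
```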

DroneSense used Logic to reduce document processing time from 30+ minutes to 2 minutes per document, a 93% reduction, with no custom ML pipelines.

What It Leaves to You

Logic is declarative: teams define what, not how. Engineers who need explicit control over execution graphs, custom state machines, or node-level orchestration won't find those primitives here. Complex workflows requiring cycles, human-in-the-loop approval gates, or multi-agent handoff protocols are outside Logic's current scope; multi-step agent workflows are on the roadmap but not yet available. Logic also does not support custom model fine-tuning, self-hosted deployment, or real-time streaming responses.

When to Use It

Logic fits when the engineering problem is shipping AI agents to production without building infrastructure. Classification, extraction, routing, scoring, moderation, content generation: these patterns deploy as production APIs with full infrastructure included. For teams where AI capabilities enable something else (document extraction feeding workflows, content moderation protecting marketplaces, invoice processing accelerating operations), Logic handles the undifferentiated infrastructure so engineers can focus on core product work.

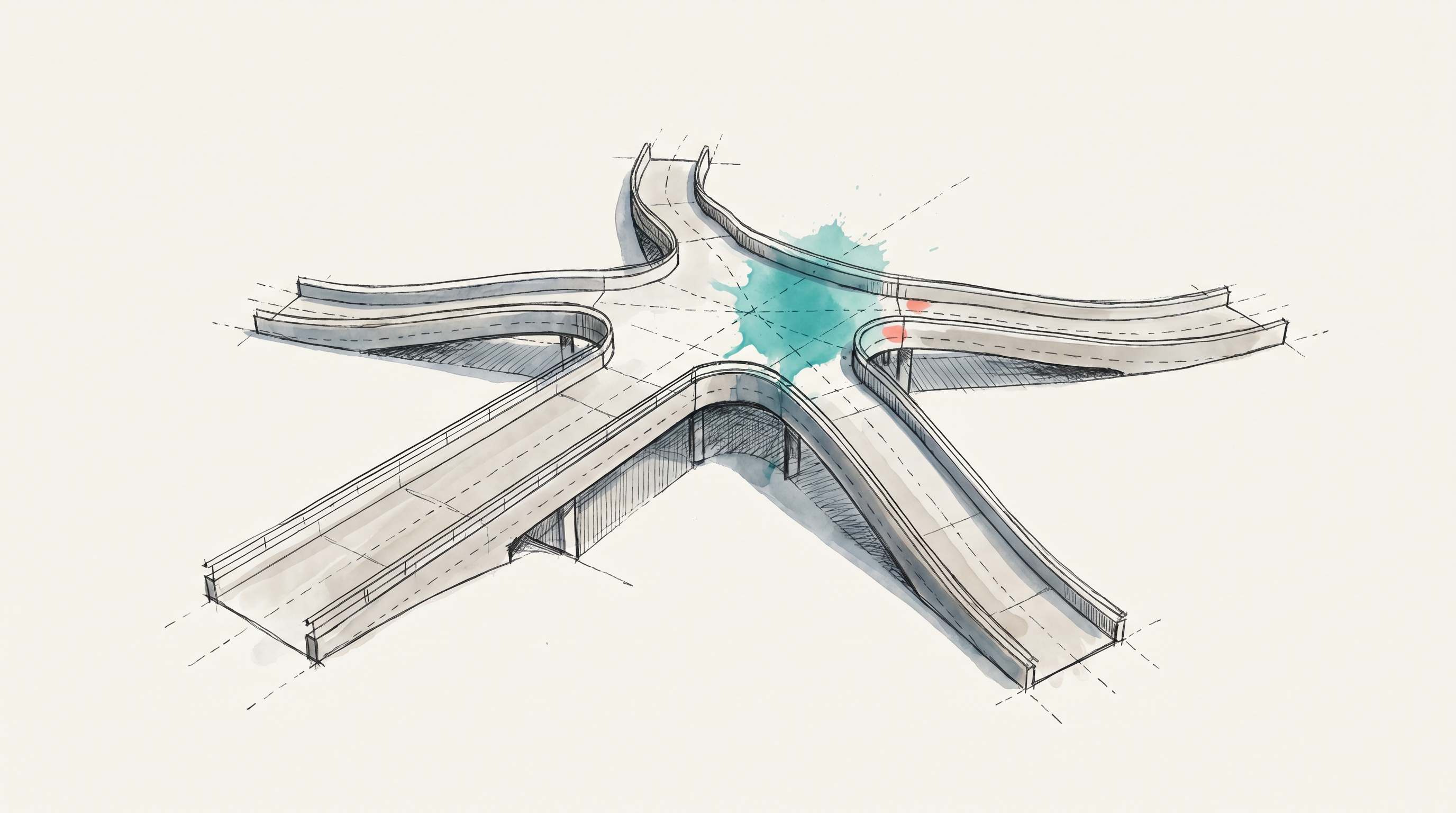

Decision Framework: When to Use Each

The LangGraph vs LangChain question has a straightforward answer from the vendor itself: LangGraph is increasingly positioned as the path for more advanced agent workflows. The harder question is whether to adopt the LangGraph ecosystem, build custom orchestration, or offload infrastructure entirely.

Choose LangChain alone when workflows are linear, predictable sequences: format a prompt, call a model, parse the output. It also fits prototypes and demos where speed matters more than production robustness, and simple integrations that mainly need multi-provider abstraction.

Choose LangGraph when the workflow structurally requires cycles, conditional branching on LLM output, human-in-the-loop approvals, or persistent state across sessions. The team should have engineers experienced with distributed systems concepts who can manage the graph execution model, state schema discipline, and the production infrastructure LangGraph leaves out.

Choose Logic when shipping agents to production matters more than controlling orchestration internals. Logic ships the production infrastructure (testing, versioning, API contracts, model routing) out of the box. Both LangChain and LangGraph leave that infrastructure to teams. After engineers deploy agents, domain experts can update rules if a team chooses to let them; every change is versioned and testable with guardrails the team defines.

Choose custom/direct APIs when the team has strong Python and async engineering skills, workflow patterns are novel enough that framework abstractions add more friction than value, and there is appetite to invest in building and maintaining orchestration, testing, and deployment infrastructure long-term.

The Recommendation

For most startup engineering teams evaluating LangGraph vs LangChain, the honest assessment is that neither tool solves the production infrastructure problem on its own. LangChain's linear model hits a ceiling quickly. LangGraph's graph model is architecturally sound for complex stateful workflows, but ships without testing, versioning, deployment, or model routing, and pulls teams toward LangSmith's commercial layer to fill the gaps. Both paths leave engineers building infrastructure instead of product features.

If the workflow requires cyclic execution, human-in-the-loop gates, or multi-agent systems with persistent state, LangGraph is the right orchestration layer, and the infrastructure investment is justified. For the broader category of AI agent workloads (classification, extraction, scoring, moderation, routing), Logic gets agents to production in minutes with the infrastructure stack included. Garmentory used Logic to scale content moderation from 1,000 to 5,000+ products daily. Review time dropped from 7 days to 48 seconds, and error rates fell from 24% to 2%.

The build-vs-offload decision mirrors choices engineers make every day: run a Postgres instance or use a managed database, build payment processing or integrate Stripe. When AI is a means to an end rather than the end itself, owning the infrastructure competes with features that directly differentiate the product. Logic processes 250,000+ jobs monthly with 99.999% uptime, backed by SOC 2 Type II certification. Start building with Logic.

Frequently Asked Questions

What infrastructure do engineering teams need beyond LangGraph's orchestration layer?

LangGraph provides graph orchestration, state management, and checkpointing. Production teams still need testing harnesses, CI/CD pipelines, state schema migration tooling, multi-model routing, and error-handling middleware around external integrations. LangSmith adds observability and evaluation. The key takeaway for implementation planning is that orchestration alone does not cover the production stack required to ship reliably or protect API contracts during ongoing iteration.
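Error-handling middleware around external integrations often starts with retries and exponential backoff. A minimal sketch, assuming the integration is wrapped as a zero-argument callable and that transient failures raise a hypothetical `TransientError`; the `sleep` parameter is injectable so tests run instantly.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, rate limits, 5xx)."""

def with_retries(call: Callable[[], T], attempts: int = 4, base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `call` on TransientError with exponential backoff plus jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            delay = base_delay * 2 ** attempt  # 0.5s, 1s, 2s, ...
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads retries out
    raise RuntimeError("unreachable")

# Usage: a flaky integration that fails twice, then succeeds.
calls = {"count": 0}

def flaky_lookup() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("upstream timeout")
    return "ok"

delays: list[float] = []
print(with_retries(flaky_lookup, sleep=delays.append))  # ok
```

Non-transient errors, such as validation failures, propagate immediately, since retrying them would only repeat the failure.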

Can organizations move from LangGraph-based systems to Logic incrementally?

Logic agents deploy as standard REST APIs, so they can sit alongside existing LangGraph-based systems without a full migration. A practical rollout starts with workloads where LangGraph's orchestration complexity adds more burden than value. This approach lets organizations keep graph-based systems where cycles and persistent state matter, while moving simpler production workloads to Logic one agent at a time.
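Running two systems side by side during an incremental move follows the strangler fig pattern: route one workload at a time to the new backend and leave everything else where it is. A plain-Python sketch; `existing_graph`, `logic_agent`, and the workload names are hypothetical stubs, and in practice `logic_agent` would make an HTTP request to whatever REST endpoint the deployed agent exposes.

```python
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], dict[str, Any]]

def existing_graph(payload: dict[str, Any]) -> dict[str, Any]:
    # Stub for invoking the existing LangGraph-based system.
    return {"backend": "langgraph", "input": payload}

def logic_agent(payload: dict[str, Any]) -> dict[str, Any]:
    # Stub for an HTTP call to a deployed agent endpoint (hypothetical).
    return {"backend": "logic", "input": payload}

# Move one workload at a time; anything unlisted stays on the current system.
MIGRATED: dict[str, Handler] = {"classify_ticket": logic_agent}

def dispatch(workload: str, payload: dict[str, Any]) -> dict[str, Any]:
    handler = MIGRATED.get(workload, existing_graph)
    return handler(payload)

print(dispatch("classify_ticket", {"text": "Invoice is wrong"})["backend"])  # logic
print(dispatch("refund_approval", {"order": "A-42"})["backend"])  # langgraph
```

Moving a workload back is a one-line change to `MIGRATED`, which keeps each step of the migration reversible.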

Does Logic support the cyclic execution patterns LangGraph enables?

Logic currently handles single-step agent workloads such as classification, extraction, scoring, moderation, and similar patterns. Multi-step agent workflows are on the roadmap but not yet available. Workflows requiring cycles, human-in-the-loop approval gates, or complex state machines are better served by LangGraph or custom orchestration. Logic is the better fit when the main requirement is production infrastructure around well-bounded agent behavior.

How does Logic handle model selection compared with LangChain's provider abstraction?

LangChain provides a unified interface for swapping between providers manually. Logic uses model routing across OpenAI, Anthropic, Google, and Perplexity based on task type, complexity, and cost, so engineers do not manage model selection directly. For organizations that need strict model pinning, Logic also provides a Model Override API that locks a specific agent to a specific model while keeping the rest of the production infrastructure unchanged.
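The difference between routing and pinning can be sketched generically. This is not Logic's router or its Model Override API; the catalog entries, prices, and 1-to-5 complexity scale are made-up placeholders. The router picks the cheapest model rated for the task's complexity, and a pin, when set, bypasses routing entirely.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # placeholder numbers, not real prices
    max_complexity: int        # highest task complexity this model is rated for

# Hypothetical catalog; names and figures are illustrative only.
CATALOG = [
    ModelOption("small-fast", 0.1, 2),
    ModelOption("mid-general", 0.5, 4),
    ModelOption("large-reasoning", 3.0, 5),
]

def choose_model(complexity: int, pinned: Optional[str] = None) -> str:
    """Pick the cheapest model rated for the task, unless a pin overrides routing."""
    if pinned is not None:
        if pinned not in {m.name for m in CATALOG}:
            raise ValueError(f"unknown model {pinned!r}")
        return pinned
    capable = [m for m in CATALOG if m.max_complexity >= complexity]
    if not capable:
        raise ValueError(f"no model rated for complexity {complexity}")
    return min(capable, key=lambda m: m.cost_per_1k_tokens).name

for complexity in (1, 3, 5):
    print(complexity, choose_model(complexity))
print(choose_model(1, pinned="large-reasoning"))  # large-reasoning
```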