AutoGen vs LangChain vs CrewAI: Comparing Across Tools (2026)

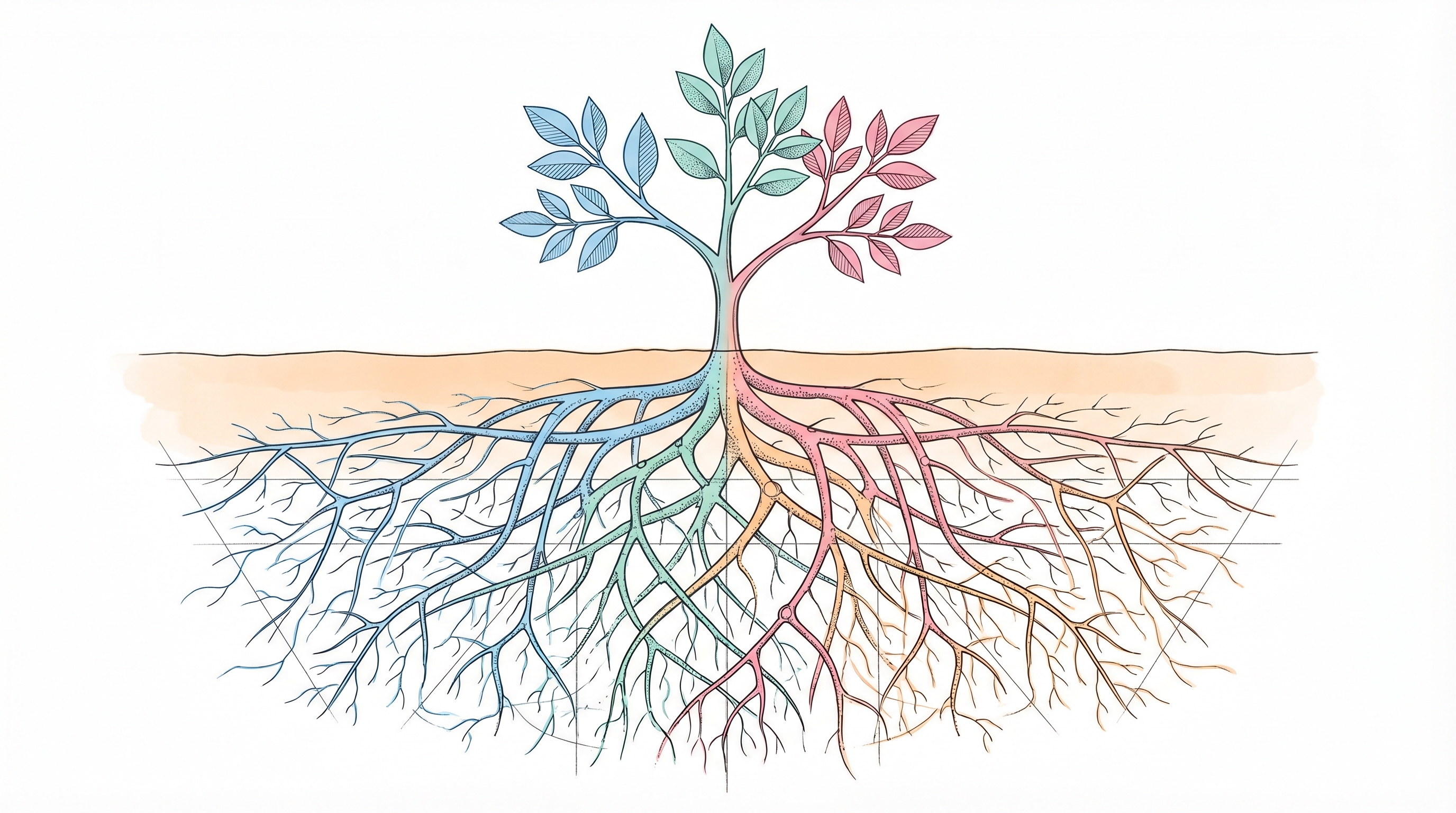

Every AI agent framework promises fast prototyping and multi-agent orchestration. The API call to an LLM is usually the easy part to wire up. Picking between AutoGen, LangChain with LangGraph, and CrewAI feels like the hard decision, but the real challenge sits one layer deeper: what happens after the prototype works.

The gap between "demo that impresses in a meeting" and "agent that handles production traffic reliably" is where framework choice actually matters. Testing, versioning, deployment pipelines, error handling, model routing, and execution visibility: none of these three frameworks include that infrastructure as a complete production layer. This comparison breaks down what each tool does well, what it leaves to engineering, and when a different approach makes more sense entirely.

AutoGen: Conversational Agents in Maintenance Mode

Microsoft's AutoGen uses a conversational group chat metaphor for multi-agent coordination. Agents communicate via asynchronous messages, taking turns in patterns like round-robin, model-driven selection, or directed graph flows. The v0.4 release was a ground-up rewrite adopting an actor model architecture, with three independently installable layers: autogen-core (foundations), autogen-agentchat (high-level API), and autogen-ext (extensions like Docker code execution and MCP support).

What it does well: Native code execution is AutoGen's strongest differentiator. The DockerCommandLineCodeExecutor provides sandboxed execution of model-generated code. That makes it a natural fit for research tasks and code generation workflows. The conversational model maps intuitively to scenarios where agents need open-ended collaboration. AutoGen also supports multi-language interoperability between Python and .NET agents.

What it leaves to you: AutoGen provides built-in state persistence and observability infrastructure, but it does not advertise a built-in testing harness, deployment guide, or prompt version control in its official documentation. A developer who ran AutoGen agents in production described the infrastructure gap on a public forum: staying alive for hours, recovering from crashes without losing context, exposing secure APIs, scaling under load, and persisting state across redeploys all required manual wiring with Redis, PostgreSQL, and Kubernetes. Filed GitHub issues (including #1311 and #1824) document agents getting stuck in conversation loops and tools being called excessively, both of which can become cost control problems without explicit termination conditions.
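The loop and cost problems described above usually come down to a missing stop condition. The following is a minimal sketch of that idea in plain Python, not AutoGen's API: `run_round_robin`, `RunResult`, and the two toy agents are hypothetical names used only for illustration, and real agents would call an LLM instead of returning canned strings.

```python
from dataclasses import dataclass
from itertools import cycle
from typing import Callable, List

# Hypothetical stand-in for an agent: takes the transcript, returns a reply.
Agent = Callable[[List[str]], str]


@dataclass
class RunResult:
    transcript: List[str]
    stop_reason: str


def run_round_robin(agents: List[Agent], task: str, max_turns: int = 6,
                    stop_phrase: str = "TERMINATE") -> RunResult:
    """Rotate through agents until one signals completion or the turn cap hits."""
    transcript = [task]
    speakers = cycle(agents)
    for _ in range(max_turns):
        reply = next(speakers)(transcript)
        transcript.append(reply)
        if stop_phrase in reply:
            return RunResult(transcript, "stop_phrase")
    # The cap bounds cost even if the agents never agree they are finished.
    return RunResult(transcript, "max_turns")


def writer(transcript: List[str]) -> str:
    return f"draft v{len(transcript)}"


def critic(transcript: List[str]) -> str:
    return "needs work"  # never approves, so only the cap can end the run


result = run_round_robin([writer, critic], "Summarize the report", max_turns=4)
print(result.stop_reason, len(result.transcript))  # prints: max_turns 5
```

Here the critic never approves, so the turn cap is what ends the run. In a real system the same cap would bound token spend, and a tool-call budget could be enforced the same way.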

The strategic concern: AutoGen is in maintenance mode. It has a stable API and will continue to receive critical bug fixes and security patches, but Microsoft now positions teams toward its successor, the Microsoft Agent Framework, which integrates with Azure AI Foundry as a recommended backend. One developer summarized the pattern as perpetual migrations. Teams adopting AutoGen for new production systems should plan for a framework migration before their system reaches maturity.

LangChain and LangGraph: Maximum Control, Maximum Complexity

The LangChain ecosystem operates in distinct layers. LangGraph is the low-level orchestration runtime built on a stateful directed graph model. LangChain provides higher-level agent abstractions on top. LangGraph 1.0 shipped in October 2025 as the first stable major release in this framework category, and it can be used standalone without LangChain.

What it does well: LangGraph gives engineers the most fine-grained control of any framework in this comparison. Its graph execution model supports parallel fan-out and explicit state management through reducers, with execution described as inspired by Pregel's message-passing model. Error recovery in LangGraph is modeled within the graph using nodes, conditional edges, retry policies, and state inspection, and these flows can be tested through the graph's behavior. Built-in persistence means agents can resume after failures. Human-in-the-loop is native via interrupts that pause execution, allow state inspection and modification, then resume. Token costs stay predictable because each LLM call is a discrete, identifiable node in the graph.
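To make the graph vocabulary concrete, here is a small sketch of a stateful graph with reducers, a conditional edge, and a bounded retry. It is written in plain Python and does not use LangGraph's actual API: `merge`, `REDUCERS`, `route_after_fetch`, and the node names are all assumptions made for illustration.

```python
import operator
from typing import Any, Callable, Dict

State = Dict[str, Any]

# Reducers decide how a node's partial update merges into shared state.
REDUCERS: Dict[str, Callable[[Any, Any], Any]] = {
    "log": operator.add,       # append-style: lists are concatenated
    "attempts": operator.add,  # counter-style: integers are summed
}


def merge(state: State, update: State) -> State:
    merged = dict(state)
    for key, value in update.items():
        reducer = REDUCERS.get(key)
        merged[key] = reducer(merged[key], value) if reducer and key in merged else value
    return merged


def fetch(state: State) -> State:
    # Simulated flaky step: fails on the first attempt, succeeds on the second.
    ok = state["attempts"] >= 1
    return {"attempts": 1, "ok": ok, "log": ["fetch ok" if ok else "fetch failed"]}


def summarize(state: State) -> State:
    return {"log": ["summarized"]}


def route_after_fetch(state: State) -> str:
    # Conditional edge: retry until success or the retry budget is spent.
    if state["ok"]:
        return "summarize"
    return "fetch" if state["attempts"] < 3 else "END"


NODES = {"fetch": fetch, "summarize": summarize}
EDGES = {"fetch": route_after_fetch, "summarize": lambda state: "END"}


def run(entry: str, state: State) -> State:
    node = entry
    while node != "END":
        state = merge(state, NODES[node](state))
        node = EDGES[node](state)
    return state


final = run("fetch", {"attempts": 0, "ok": False, "log": []})
print(final["log"])  # prints: ['fetch failed', 'fetch ok', 'summarized']
```

Because the retry lives in the routing function rather than inside the node, the failure path is visible in the state log and can be asserted on in a test, which is the property the paragraph above describes.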

What it leaves to you: Observability is commonly provided via LangSmith, a separate commercial product, though LangChain and LangGraph also document built-in and third-party observability options. Deployment infrastructure requires either the commercial LangGraph Platform or custom work. Testing harnesses, prompt version control, provider routing, and authorization controls are entirely the team's responsibility. LangGraph also has a steeper learning curve, requiring users to understand concepts such as state reducers and the Command/Send primitives on top of LangChain concepts. A conditional workflow that takes minutes to describe can require significant LangGraph configuration.

Production teams have documented specific pain points. Octomind used LangChain for over 12 months before removing it entirely, reporting that rigid abstractions complicated their codebase and hindered productivity. LangChain's own founder acknowledged in the public discussion of that migration that the initial version "absolutely abstracted away too much." Developers report debugging through multiple abstraction layers to resolve a single API failure. LangChain's request handling has also surfaced undocumented default timeouts that caused production requests to fail without clear error signals.

CrewAI: Intuitive Prototyping, Unproven in Production

CrewAI uses a role-based "crew" paradigm where agents are defined with a role, goal, and backstory, then organized into crews that execute tasks sequentially or via a hierarchical manager agent. CrewAI OSS v1.0 shipped on October 20, 2025, and while LangChain is no longer a hard dependency, CrewAI was originally built on top of LangChain and maintains interoperability with it. Its Flows layer adds event-driven orchestration with typed state management for more complex workflows.

What it does well: CrewAI has the lowest onboarding friction of the three frameworks. The "team of specialists" metaphor maps to how many engineering teams already think about dividing work. YAML-first configuration keeps agent and task definitions readable. The Flows architecture, with @start() and @listen() decorators, provides clean event-driven control for workflows that go beyond simple sequential execution. Structured outputs via Pydantic models are supported at the task level.
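The event-driven style behind decorators like @start() and @listen() can be illustrated with a toy runner. This is a sketch of the general pattern only, not CrewAI's implementation or API: the `MiniFlow` class, its `start` and `listen` methods, and the three step functions are hypothetical.

```python
from typing import Any, Callable, Dict, List, Optional

Step = Callable[[Dict[str, Any], Any], Any]


class MiniFlow:
    """Toy event-driven runner (hypothetical, for illustration only)."""

    def __init__(self) -> None:
        self._start: Optional[Step] = None
        self._listeners: Dict[str, List[Step]] = {}
        self.state: Dict[str, Any] = {}

    def start(self, fn: Step) -> Step:
        self._start = fn
        return fn

    def listen(self, upstream: Step) -> Callable[[Step], Step]:
        def register(fn: Step) -> Step:
            self._listeners.setdefault(upstream.__name__, []).append(fn)
            return fn
        return register

    def kickoff(self) -> Any:
        if self._start is None:
            raise RuntimeError("no start step registered")
        queue = [(self._start, None)]
        result = None
        while queue:
            step, payload = queue.pop(0)
            result = step(self.state, payload)
            # Each finished step triggers whatever was registered to listen to it.
            for listener in self._listeners.get(step.__name__, []):
                queue.append((listener, result))
        return result


flow = MiniFlow()


@flow.start
def classify(state, _payload):
    state["category"] = "invoice"
    return state["category"]


@flow.listen(classify)
def extract(state, category):
    state["fields"] = ["total", "due_date"] if category == "invoice" else []
    return state["fields"]


@flow.listen(extract)
def report(state, fields):
    return f"{state['category']}: {len(fields)} fields"


print(flow.kickoff())  # prints: invoice: 2 fields
```

Each step declares what it reacts to instead of being called in a fixed sequence, so adding a branch means registering another listener rather than rewriting the pipeline.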

What it leaves to you: At the OSS tier, CrewAI documents observability tools and deployment preparation. RBAC is available through CrewAI AMP or enterprise plans; audit trails and a dedicated OSS testing infrastructure are not explicitly documented. Developers report crew executions becoming slow due to abstraction overhead and role-playing prompts processed through full reasoning loops. The backstory field is mandatory for every agent, and CrewAI's prompting model can lead to higher token usage per step than equivalent workflows in LangGraph. Virtual environments can grow to several hundred megabytes due to dependency weight. That adds deployment friction.

More concerning are the silent failure modes. A filed GitHub issue (#3154) documents agents producing structurally valid output traces without actually invoking tools: the agent appears to complete work while inventing results. Developers have also reported agents in some frameworks calling tools repeatedly when no clear orchestration control stops them. Community threads have questioned who is actually running CrewAI in production. A growing number of public case studies points to real-world and enterprise deployments, but CrewAI's production track record is still maturing compared to longer-established frameworks.
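One way to catch an agent that reports tool use it never performed is to record real invocations outside the model and compare them with what the trace claims. The sketch below is framework-agnostic plain Python: `AuditedTools`, `unverified_claims`, and the example tools are hypothetical names, and the claimed trace is made up for the example.

```python
from typing import Any, Callable, Dict, List


class AuditedTools:
    """Wraps tool functions and records every real invocation."""

    def __init__(self, tools: Dict[str, Callable[..., Any]]) -> None:
        self._tools = tools
        self.calls: List[str] = []

    def call(self, name: str, *args: Any, **kwargs: Any) -> Any:
        result = self._tools[name](*args, **kwargs)
        self.calls.append(name)  # logged only after the tool actually ran
        return result


def unverified_claims(claimed: List[str], audited: AuditedTools) -> List[str]:
    """Return tool names the agent says it used but that never actually ran."""
    remaining = list(audited.calls)
    missing = []
    for name in claimed:
        if name in remaining:
            remaining.remove(name)
        else:
            missing.append(name)
    return missing


tools = AuditedTools({
    "search": lambda query: f"results for {query}",
    "fetch_price": lambda sku: 19.99,
})
tools.call("search", "blue jacket")

# Hypothetical agent trace: it claims two tool calls, but only one happened.
claimed_by_agent = ["search", "fetch_price"]
print(unverified_claims(claimed_by_agent, tools))  # prints: ['fetch_price']
```

A non-empty result is a signal to fail the run or retry it rather than pass the invented output downstream.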

Logic: Spec-Driven Agents with Production Infrastructure Included

Logic takes a fundamentally different approach. Instead of providing orchestration primitives that an engineering team assembles into a production system, Logic transforms a natural language spec into a production-ready agent with typed API endpoints, auto-generated tests, version control, model routing, and execution logging included. Teams describe what the agent should do; Logic determines how to accomplish it and ships production infrastructure in minutes instead of weeks.

What it does well: The spec-driven approach removes the infrastructure layer that AutoGen, LangGraph, and CrewAI largely leave to engineering. API contracts are auto-generated as JSON schemas with strict input/output validation and backward compatibility by default: spec updates change agent behavior without touching the API schema. Logic generates 10 test scenarios per agent automatically. These scenarios cover edge cases with realistic data combinations, conflicting inputs, and boundary conditions. Each test receives Pass, Fail, or Uncertain status, and any historical execution can be promoted to a permanent test case. Version control is immutable: every version is frozen once created, with instant rollback and change comparison. Logic routes agent requests across OpenAI, Anthropic, Google, and Perplexity based on task type, complexity, and cost.
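Immutable versioning with instant rollback is a general pattern that is easy to show in miniature. The sketch below is an assumption about how such a history could be modeled, not Logic's implementation: `SpecVersion`, `VersionHistory`, and the example specs are hypothetical.

```python
from dataclasses import FrozenInstanceError, dataclass
from typing import List


@dataclass(frozen=True)
class SpecVersion:
    number: int
    spec: str


class VersionHistory:
    """Append-only history: publishing adds a version, rollback moves a pointer."""

    def __init__(self, initial_spec: str) -> None:
        self._versions: List[SpecVersion] = [SpecVersion(1, initial_spec)]
        self._active = 1

    @property
    def active(self) -> SpecVersion:
        return self._versions[self._active - 1]

    def publish(self, spec: str) -> SpecVersion:
        version = SpecVersion(len(self._versions) + 1, spec)
        self._versions.append(version)
        self._active = version.number
        return version

    def rollback(self, number: int) -> SpecVersion:
        if not 1 <= number <= len(self._versions):
            raise ValueError(f"unknown version {number}")
        self._active = number  # nothing is deleted, so rollback is instant
        return self.active


history = VersionHistory("Flag listings with missing sizes.")
v2 = history.publish("Flag listings with missing sizes or prices.")

try:
    v2.spec = "edited in place"
except FrozenInstanceError:
    print("versions cannot be edited after creation")

history.rollback(1)
print(history.active.number)  # prints: 1
```

Because rollback only moves the active pointer, version 2 stays available for comparison or for re-promotion later.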

What it leaves to you: Logic focuses on production-ready single-agent use cases today, and publicly verified support for more complex multi-step orchestration is limited. Teams needing native code execution, complex graph-based state machines, or deep customization of orchestration patterns will find Logic's declarative model more constrained than LangGraph's graph primitives. Logic routes models automatically, which is a strength for most teams but a constraint for those who need granular per-task model selection beyond what the Model Override API provides.

Production evidence: Garmentory used Logic for internal operations and ecommerce content moderation. Processing capacity shifted from 1,000 products daily to 5,000+, review time dropped from 7 days to 48 seconds, and error rate fell from 24% to 2%. The system handles 190,000+ monthly executions across 250,000+ total products processed, while contractor headcount moved from 4 to 0 and the marketplace price floor dropped from $50 to $15. That is a concrete production outcome, not a prototype.
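For readers who want the ratios behind those figures, the arithmetic is short. The inputs below are taken from the paragraph above; the variable names are illustrative, and the throughput figure is a lower bound because the source reports "5,000+".

```python
SECONDS_PER_DAY = 24 * 60 * 60

throughput_gain = 5_000 / 1_000               # lower bound: source says "5,000+"
review_speedup = (7 * SECONDS_PER_DAY) / 48   # 7 days versus 48 seconds
error_drop_points = 24 - 2                    # percentage points
error_drop_relative = (24 - 2) / 24           # share of the original error rate

print(f"throughput: at least {throughput_gain:.0f}x")
print(f"review time: {review_speedup:,.0f}x faster")
print(f"error rate: {error_drop_points} points lower ({error_drop_relative:.1%} relative)")
```

That works out to at least 5x throughput, a 12,600x reduction in review time, and an error rate 22 percentage points lower, or about 91.7% in relative terms.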

The real alternative to Logic is building that production infrastructure internally. That means testing harnesses, deployment pipelines, version control, error handling, and multi-provider routing on top of whichever framework a team chooses. Logic handles those production concerns so engineers focus on application logic. Logic serves both customer-facing product features and internal operations. After engineers deploy agents, domain experts can update rules if teams choose to let them: every change is versioned and testable with guardrails the engineering team defines, and failed tests flag regressions without blocking deployment.

Decision Framework: When to Use Each

The right choice depends on what a team is optimizing for.

Choose AutoGen when the workflow centers on code generation and execution with sandboxed Docker environments. Microsoft also provides a migration guide from AutoGen to the Microsoft Agent Framework, so migration considerations should be assessed before adopting it for new production systems.

Choose LangGraph when fine-grained control over execution flow matters most, workflows mix deterministic and agentic steps with complex state management, the team has graph programming experience, and engineers have capacity to build observability, testing, versioning, and deployment infrastructure independently.

Choose CrewAI when fast multi-agent prototypes are the priority, workflows map cleanly to sequential role-based handoffs, and the organization is willing to invest in the commercial AMP platform for production governance. It is best treated as a prototyping tool until production evidence matures further.

Choose Logic when the goal is to ship agents to production without building LLM infrastructure. Logic fits teams where AI capabilities enable the product rather than being the product itself: document extraction, content moderation, classification, scoring, and similar patterns where the spec-driven approach gets a team to production far faster than building the stack internally.

The Infrastructure Question Underneath the Framework Question

AutoGen, LangGraph, and CrewAI share the same production gap: no built-in testing, no prompt versioning, no deployment pipelines, no multi-provider routing, and no complete production infrastructure layer that ties validation, operations, and rollout together. The framework comparison matters less than the infrastructure decision. A team has three options: budget engineering time to build that layer on top of a framework, pay for a commercial platform like LangGraph Platform or CrewAI AMP, or build directly on LLM provider APIs and skip framework complexity entirely.

For most startups where engineering bandwidth is the constraint and AI is a means to an end, building LLM infrastructure competes directly with building the product. Logic's spec-driven agents handle that infrastructure so teams ship what customers pay for. When an agent is created, 25+ processes execute automatically: research, validation, schema generation, test creation, and model routing optimization. That is the work engineers skip.

If the need is deep control over custom orchestration patterns, LangGraph gives engineers the tools to build exactly what they need. If the need is a quick prototype to validate a multi-agent concept, CrewAI gets teams there fastest. But if the goal is production agents with reliable infrastructure underneath, start building with Logic.

Frequently Asked Questions

What infrastructure gaps should teams expect across AutoGen, LangChain/LangGraph, and CrewAI?

None of the open-source runtimes ships a full production layer by default. Testing harnesses, prompt version control, deployment pipelines, provider routing, and authorization controls are all the team's responsibility. Observability may exist through products such as LangSmith or CrewAI AMP, and some built-in or third-party options also exist, but integration, operations, and rollout work still need to be planned separately.

How should teams get started with Logic for a production use case?

Start with a narrow use case: document extraction, content moderation, classification, or scoring. Logic fits best when the goal is to ship quickly without building testing, versioning, and model routing infrastructure internally. Describe the agent in a natural language spec, then use the generated typed API, auto-generated tests, version history, and execution logging to validate behavior before expanding into broader workflows.

When should teams avoid AutoGen for new production systems?

Long-term framework stability matters here. AutoGen still supports conversational multi-agent workflows well, especially sandboxed Docker-based code execution, but Microsoft is positioning teams toward the Microsoft Agent Framework. That creates migration risk. If a team already plans to invest heavily in production infrastructure, it should also account for likely migration work before the system reaches maturity.

How should teams evaluate Logic for multi-agent or highly customized workflows?

Logic is strongest for production-ready single-agent use cases today, where the application needs typed APIs, automated tests, version control, execution logging, and model routing without building those systems internally. Teams needing native code execution, complex graph state machines, or deep orchestration control may find LangGraph or AutoGen better aligned. The tradeoff is speed to production and infrastructure coverage versus flexibility in custom orchestration patterns.