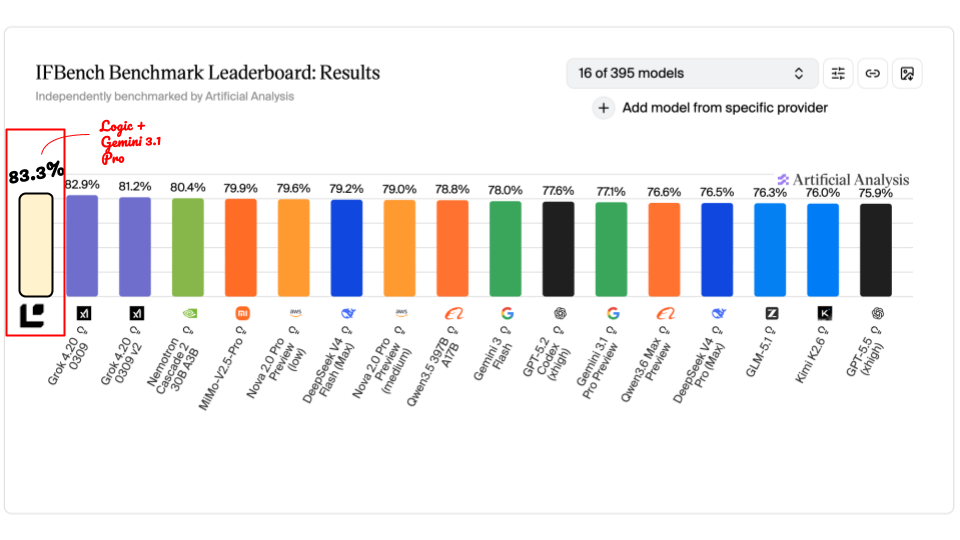

Logic scores 83.3% on IFBench, beating every model on the public leaderboard

Instruction-following benchmarks are usually treated as model comparisons. Better model, better score.

Our IFBench run suggests that is only part of the story.

We tested Gemini 3.1 Pro Preview on Allen AI's IFBench in two ways: once as a direct model call, and once wrapped in a Logic agent generated from a task spec. The model was the same. The benchmark was the same. The prompts were the same.

The direct run scored 77.1%. The Logic run scored 83.3%.

That 6-point lift was enough to move the same base model above every result listed on the Artificial Analysis IFBench leaderboard as of April 2026.

What IFBench measures

IFBench was released by Allen AI and published at NeurIPS 2025. It’s a set of 294 tasks where each prompt includes one or more precise, verifiable constraints on the response. The constraints are things a human reviewer could check with a ruler, a word count, or a regular expression. Here is one prompt from the dataset, verbatim:

Create stairs by incrementally indenting each new line. Respond with three sentences, all containing the same number of characters but using all different words. What if Arya could disguise others as herself?

Every prompt in the benchmark looks something like this, with orthogonal constraints layered on top of a creative task and verified in a single pass.

IFBench was built with novel constraint types that models haven't been trained on, so it measures whether a system can follow a rule the first time it sees one rather than pattern-matching against something it has seen before.

Scoring is deterministic. A Python verifier checks each response against each constraint. There is no partial credit: either every constraint in the prompt is satisfied or the response fails. There is no model acting as judge, and no retries are allowed.
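The all-or-nothing rule is simple enough to write down. The sketch below is an illustration of the scoring described above, not code from the IFBench repository; `Check`, `prompt_passes`, and `benchmark_score` are hypothetical names.

```python
from typing import Callable, Sequence

# A constraint check takes the response text and returns pass or fail.
Check = Callable[[str], bool]


def prompt_passes(response: str, checks: Sequence[Check]) -> bool:
    """A response passes only if every constraint check passes."""
    return all(check(response) for check in checks)


def benchmark_score(results: Sequence[bool]) -> float:
    """Percentage of prompts whose response satisfied every constraint."""
    if not results:
        raise ValueError("no results to score")
    return 100.0 * sum(results) / len(results)
```

Under this rule, a response that satisfies two of three constraints scores the same as one that satisfies none.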

Where the 6-point edge comes from

Two things help Logic’s score here.

The first is the Logic harness. Every Logic agent sits inside a framework that does more than pass a prompt through to a language model. The harness validates input, enforces a structured output schema, routes the call, enforces the contract the spec describes, and more. All of this happens automatically for every spec you write.
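As a rough picture of what "more than pass a prompt through" can mean, here is a generic sketch of a wrapper that validates input and enforces an output schema around a model call. It is not Logic's API or implementation: `run_with_harness`, `AgentOutput`, the `call_model` callable, and the one-field schema are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class AgentOutput:
    """Assumed output schema: a single string field."""
    response: str


def run_with_harness(prompt: Any, call_model: Callable[[str], Any]) -> AgentOutput:
    # Validate the input before any model call is made.
    if not isinstance(prompt, str) or not prompt.strip():
        raise ValueError("prompt must be a non-empty string")

    raw = call_model(prompt)

    # Enforce the output schema: exactly one string field named "response".
    if not isinstance(raw, dict) or set(raw) != {"response"}:
        raise ValueError("model output does not match the expected schema")
    if not isinstance(raw["response"], str):
        raise ValueError("'response' must be a string")

    return AgentOutput(response=raw["response"])
```

The point of the sketch is that malformed input or output is caught by the wrapper rather than passed on to the caller.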

The second is the spec itself. With Logic, the spec is the artifact that powers your agent. Everything else (schemas, tests, tool routing, retry behavior) is derived from it. We have found that a carefully written spec can have an outsized impact on the quality of the agent’s output.

The spec we used for this run is about 500 words and describes a general-purpose agent for tasks where the output has to conform to specific requirements stated in the prompt. You can import and run it yourself at this link.

The core of the spec looks like this:

How to approach the task

1. Read the prompt carefully. Identify the underlying task and identify every requirement the response has to satisfy. Read the whole prompt before drafting anything.

2. Draft a response that addresses the task. Answer the underlying question or produce the requested artifact. Keep the requirements in mind while drafting, but do not let requirement-tracking produce a stilted or unnatural answer if it can be avoided.

3. Check the draft against every requirement, one at a time. Count exactly when a count is specified. Check formatting rules character by character. Check ordering, inclusion, exclusion, and structural rules precisely.

4. Revise if any requirement is not met, and continue until the response satisfies every requirement.

5. Return the final response. Just the response. No explanation, no list of requirements, no self-report.

The spec does not reference IFBench, the dataset, or the specific constraints being tested.
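Steps 2 through 4 of the spec describe a draft, check, revise loop. The sketch below shows that control flow in ordinary Python, purely as an illustration. In the benchmark run the model has to do this within its single response, since no retries are allowed. The `draft` and `revise` callables and the `max_rounds` cap are assumptions; the spec itself sets no round limit.

```python
from typing import Callable, Sequence

Check = Callable[[str], bool]


def draft_check_revise(
    draft: Callable[[], str],
    revise: Callable[[str, list[int]], str],
    checks: Sequence[Check],
    max_rounds: int = 5,  # assumed safety cap, not part of the spec
) -> str:
    response = draft()  # step 2: draft a response
    for _ in range(max_rounds):
        # Step 3: check the draft against every requirement, one at a time.
        failed = [i for i, check in enumerate(checks) if not check(response)]
        if not failed:
            return response  # step 5: every requirement is met
        # Step 4: revise, passing along which requirements failed.
        response = revise(response, failed)
    return response  # best effort once the round cap is reached
```

Passing the indices of the failed checks to `revise` mirrors the spec's instruction to check requirements one at a time, so a revision can target exactly what is wrong.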

Why the spec matters as much as the model

If you had a team of humans doing this work, you would tell them to read the requirements carefully, draft an answer, check their own work against the requirements, and revise if anything is off. That advice is equally obvious for a model. The difference is that people rarely write it down in that much detail for a model, so the model has to guess what "good" looks like every time it runs.

On tasks like this one, moving from one frontier model to another usually shifts the score by a few points. In our test, adding a short, thoughtful spec on top of the same base model delivered more than that.

What this means for anyone building agents

When you are building an agent, once you’ve chosen a model with a baseline level of intelligence, the two highest-leverage decisions are what harness sits around the model and how clearly you have written the spec. Which specific frontier model you route to matters less than either of those.

Write a good spec, and Logic will produce an agent that follows it reliably in production.