AI Invoice Processing: Build the Pipeline or Offload It

Engineering teams routinely offload infrastructure they could build themselves. Managed databases instead of self-hosted Postgres. Stripe instead of custom payment processing. AWS instead of bare metal. The calculus is familiar: if the infrastructure isn't what differentiates your product, the engineering hours are better spent elsewhere. AI invoice processing looks like it should follow the same pattern: call an LLM API, parse the response, feed it to your accounting system.

The analogy breaks down at the reliability boundary. A managed database returns deterministic results. A payment processor either charges the card or doesn't. LLM-based invoice extraction can return structurally valid JSON with a hallucinated total that passes every schema check and posts to your ledger before anyone notices. Making that extraction trustworthy in production requires testing, validation, version control, model routing, and regression detection. That infrastructure is where a short project quietly becomes something much larger.

Why Invoice Extraction Is Harder Than It Looks

In a demo, sending a PDF to GPT, Claude, or Gemini and asking for structured JSON works. Production surfaces the long tail: scanned invoices at odd angles, fax artifacts, vendor-specific layouts, and handwritten annotations overlapping printed text. Suppose a model achieves 90% accuracy on individual fields, a figure more typical of well-structured documents than of the general case. If field errors are independent, the probability that all 10 fields on an invoice are extracted correctly is 0.9^10, roughly 35%. Hallucinated or misread values then propagate into downstream financial systems unless outputs are validated before use.
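The 35% figure is per-field accuracy multiplied across fields. A minimal Python sketch under the same simplifying assumption that field errors are independent (in practice they are often correlated, since a bad scan tends to degrade several fields at once):

```python
def all_fields_correct(per_field_accuracy: float, num_fields: int) -> float:
    """Probability that every field is correct, assuming independent field errors."""
    return per_field_accuracy ** num_fields

for n in (1, 5, 10, 20):
    print(f"{n:>2} fields: {all_fields_correct(0.9, n):.1%}")

# Output:
#  1 fields: 90.0%
#  5 fields: 59.0%
# 10 fields: 34.9%
# 20 fields: 12.2%
```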

Invoice extraction is particularly difficult for LLMs because the failure modes are subtle and compound one another.

Schema compliance doesn't mean extraction accuracy. Provider-level structured output APIs guarantee that the response matches your JSON schema: correct field names, valid data types, proper enum values. They do not guarantee that the values correspond to what's actually in the source document. A schema-valid response containing "$234.4" when the invoice reads "$234.1" passes every automated schema check.
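Catching a value like "$234.4" requires checking it against the document itself. The sketch below contrasts a structural check with a naive grounding check that asks whether the extracted amount literally appears in the source text. The source text, field names, and helper functions are hypothetical. Literal matching is a simplification: it depends on the quality of the text layer, and it cannot catch the wrong-row errors described below, because those values do exist in the document.

```python
import re
from decimal import Decimal

# Hypothetical text layer of the invoice (e.g. from OCR or PDF text extraction).
SOURCE_TEXT = """ACME Supplies Inc.
Invoice #INV-1042
Total due: $234.1"""

# Schema-valid but wrong: correct keys and types, misread value.
extracted = {"invoice_number": "INV-1042", "total": "234.4"}

def passes_schema(record: dict) -> bool:
    """Structural check only: expected keys present and total parses as a decimal."""
    if set(record) != {"invoice_number", "total"}:
        return False
    try:
        Decimal(record["total"])
    except ArithmeticError:  # decimal.InvalidOperation subclasses ArithmeticError
        return False
    return True

def amounts_in_source(text: str) -> set[Decimal]:
    """Collect every dollar amount that literally appears in the source text."""
    return {Decimal(m.replace(",", "")) for m in re.findall(r"\$([\d,]+(?:\.\d+)?)", text)}

def is_grounded(record: dict, text: str) -> bool:
    """Naive grounding check: the extracted total must appear somewhere in the document."""
    return Decimal(record["total"]) in amounts_in_source(text)

print(passes_schema(extracted))             # True
print(is_grounded(extracted, SOURCE_TEXT))  # False
```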

Line item tables compound errors across rows. OCR-based extraction is prone to errors wherever table structure is ambiguous, such as wrong-row extraction, in which the model pulls a quantity from an adjacent row. The extracted value exists in the document, passes type validation, and produces no error signal.
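Some wrong-row errors still leave an arithmetic trace. When an invoice prints quantity, unit price, and line amount, a per-row check can flag rows where the three disagree. A minimal sketch with hypothetical field names; it misses errors that happen to stay internally consistent, and per-line discounts or vendor rounding rules would need extra handling:

```python
from dataclasses import dataclass
from decimal import Decimal

@dataclass(frozen=True)
class LineItem:
    description: str
    quantity: Decimal
    unit_price: Decimal
    amount: Decimal

def inconsistent_rows(items: list[LineItem],
                      tolerance: Decimal = Decimal("0.01")) -> list[int]:
    """Return indexes of rows where quantity * unit_price disagrees with amount."""
    return [
        i for i, item in enumerate(items)
        if abs(item.quantity * item.unit_price - item.amount) > tolerance
    ]

# The "Gaskets" quantity (really 2) was pulled from the "Bolts" row below it.
items = [
    LineItem("Widgets", Decimal("10"), Decimal("2.50"), Decimal("25.00")),
    LineItem("Gaskets", Decimal("4"), Decimal("1.25"), Decimal("2.50")),
    LineItem("Bolts", Decimal("4"), Decimal("0.50"), Decimal("2.00")),
]
print(inconsistent_rows(items))  # [1]
```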

Multi-page invoices fragment context. Line items spanning page breaks get split or lost. Maximum context windows differ from effective context windows: fitting the document into the window does not guarantee reliable processing of every section.

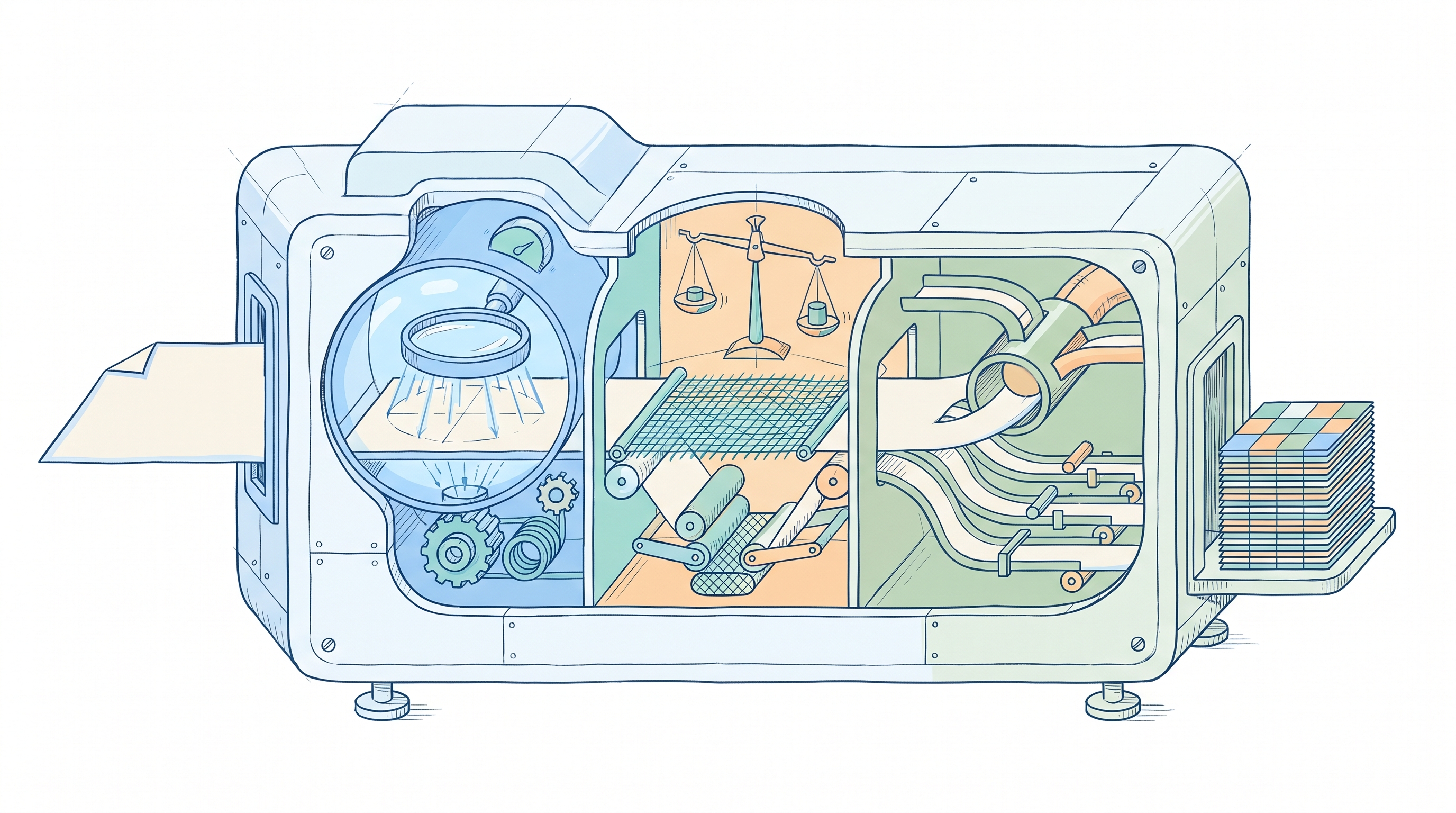

The Infrastructure Behind AI Invoice Processing

The extraction call is one component. Production-grade AI invoice processing requires an infrastructure layer that most teams significantly underestimate. Logic is built to handle this layer (more on that below). Here's what engineering teams actually have to stand up if they build it themselves:

Semantic validation beyond schema enforcement. Provider APIs handle structural guarantees: your JSON will match the schema. The remaining problem is semantic correctness: line items must sum to the subtotal, and the subtotal plus tax must equal the total. When they don't, the extraction contains errors that no schema check catches.
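A minimal reconciliation sketch, assuming a single tax amount and no discounts, shipping, or other adjustments (real invoices often have those). It uses `Decimal` rather than `float` to avoid binary rounding on currency, plus a one-cent tolerance for rounding on the invoice itself. `reconcile` is a hypothetical helper; an extraction that returns any problems would be routed to review rather than posted:

```python
from decimal import Decimal

def reconcile(line_amounts: list[Decimal], subtotal: Decimal, tax: Decimal,
              total: Decimal, tolerance: Decimal = Decimal("0.01")) -> list[str]:
    """Return a list of problems; an empty list means the figures reconcile."""
    problems = []
    line_sum = sum(line_amounts, Decimal("0"))
    if abs(line_sum - subtotal) > tolerance:
        problems.append(f"line items sum to {line_sum}, but subtotal is {subtotal}")
    if abs(subtotal + tax - total) > tolerance:
        problems.append(f"subtotal + tax is {subtotal + tax}, but total is {total}")
    return problems

lines = [Decimal("25.00"), Decimal("2.50"), Decimal("2.00")]
print(reconcile(lines, Decimal("29.50"), Decimal("2.36"), Decimal("31.86")))  # []

# Transposed digits in the extracted total:
print(reconcile(lines, Decimal("29.50"), Decimal("2.36"), Decimal("31.68")))
# ['subtotal + tax is 31.86, but total is 31.68']
```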

Prompt versioning coupled to evaluation. A version record without evaluation metrics is not a production version record. Every change, including ones classified as trivial, requires running the full evaluation suite. A minor wording adjustment can cause a measurable regression that only surfaces through evaluation.
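One way to couple versions to evaluation is to make the evaluation result part of the version record and refuse promotion when it is missing or worse than the current baseline. A minimal sketch; `PromptVersion`, `can_promote`, and the pass rates are hypothetical, made up for illustration:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class PromptVersion:
    version: str
    prompt: str
    eval_pass_rate: Optional[float] = None  # None: the evaluation suite was never run

def can_promote(candidate: PromptVersion, baseline: PromptVersion,
                max_regression: float = 0.0) -> bool:
    """Promote only versions that were evaluated and did not regress past the tolerance."""
    if candidate.eval_pass_rate is None or baseline.eval_pass_rate is None:
        return False
    return candidate.eval_pass_rate >= baseline.eval_pass_rate - max_regression

# Illustrative numbers only.
v1 = PromptVersion("v1", "Extract the invoice total.", eval_pass_rate=0.94)
v2_unevaluated = PromptVersion("v2", "Extract the invoice's total amount.")
v2_evaluated = PromptVersion("v2", "Extract the invoice's total amount.", eval_pass_rate=0.89)

print(can_promote(v2_unevaluated, v1))  # False: no evaluation on record
print(can_promote(v2_evaluated, v1))    # False: 0.89 is below the 0.94 baseline
```

The "trivial" wording change in v2 is exactly the case the gate exists for: it cannot ship on the strength of looking harmless.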

A testing harness for non-deterministic outputs. LLMs can produce different outputs from identical inputs, so traditional exact-match unit tests don't work. Teams need scenario-based evaluation suites covering common invoice formats, edge cases (merged cells, missing fields, multi-currency documents), and regression detection when prompts or models change.
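Instead of asserting one exact output, a scenario-based harness can run each scenario several times and track how often each field matches the expected value. A minimal sketch; `field_pass_rates` and the stubbed `flaky_extract` (which stands in for a real model call) are hypothetical:

```python
from itertools import cycle
from typing import Callable

Extraction = dict[str, str]

def field_pass_rates(extract: Callable[[str], Extraction], document: str,
                     expected: Extraction, runs: int = 5) -> dict[str, float]:
    """Run one scenario several times; report the fraction of runs each field matched."""
    hits = {name: 0 for name in expected}
    for _ in range(runs):
        result = extract(document)
        for name, value in expected.items():
            if result.get(name) == value:
                hits[name] += 1
    return {name: count / runs for name, count in hits.items()}

# Stub standing in for a non-deterministic model: the total is misread on one run in five.
_totals = cycle(["234.1", "234.1", "234.4", "234.1", "234.1"])

def flaky_extract(document: str) -> Extraction:
    return {"invoice_number": "INV-1042", "total": next(_totals)}

expected = {"invoice_number": "INV-1042", "total": "234.1"}
rates = field_pass_rates(flaky_extract, "<invoice text>", expected, runs=5)
print(rates)  # {'invoice_number': 1.0, 'total': 0.8}

# Flag any field that fell below the required pass rate for this scenario.
failing = [name for name, rate in rates.items() if rate < 1.0]
print(failing)  # ['total']
```

A real suite would run many such scenarios and compare the rates against the previous prompt or model version to detect regressions.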

Model routing and failover. Each model handles document types differently. Some trade raw accuracy for a greater tendency to abstain when uncertain, while others optimize for throughput at the expense of edge case handling. Production systems need routing rules plus failover.
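Routing rules and failover can be expressed as an ordered list of models per document type, tried in sequence until one succeeds. A minimal sketch; the model names, document types, and stub clients are placeholders, not real provider APIs:

```python
from typing import Callable

# Hypothetical client signature: document text in, extracted fields out.
ModelCall = Callable[[str], dict]

class AllModelsFailed(Exception):
    """Raised when every model configured for a document type has failed."""

def route_and_extract(doc_type: str, document: str,
                      routes: dict[str, list[str]],
                      models: dict[str, ModelCall]) -> tuple[str, dict]:
    """Try each model routed for this document type in order, failing over on errors."""
    errors: dict[str, Exception] = {}
    for name in routes.get(doc_type, routes["default"]):
        try:
            return name, models[name](document)
        except Exception as exc:  # e.g. timeouts, rate limits, unparseable output
            errors[name] = exc
    raise AllModelsFailed(errors)

# Stub clients standing in for real provider calls.
def primary_model(document: str) -> dict:
    raise TimeoutError("provider timed out")

def backup_model(document: str) -> dict:
    return {"total": "234.1"}

routes = {"scanned": ["model-a", "model-b"], "default": ["model-b"]}
models = {"model-a": primary_model, "model-b": backup_model}

print(route_and_extract("scanned", "<invoice text>", routes, models))
# ('model-b', {'total': '234.1'})
```

Catching every `Exception` keeps the sketch short; a production version would retry only on errors known to be transient and would log which model served each request.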

Deployment pipelines with rollback. Prompt changes, model updates, and schema modifications all need coordinated deployment with rollback capability. No widely adopted open-source solution exists for this. These components aren't independent, either: versioning depends on the testing harness, and deployment decisions depend on test results that surface issues before release. Teams that estimate each component independently will undercount the total scope.
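A sketch of the rollback half, assuming a release bundles the prompt, model, and schema version so that all three move together. `Release` and `Deployment` are hypothetical names; the release list is append-only, so rolling back never erases the audit trail:

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Release:
    """One coordinated change: prompt, model, and output schema deploy together."""
    version: str
    prompt: str
    model: str
    schema_version: str

@dataclass
class Deployment:
    releases: list[Release] = field(default_factory=list)  # append-only audit trail
    active_index: int = -1

    @property
    def active(self) -> Release:
        if self.active_index < 0:
            raise RuntimeError("nothing deployed yet")
        return self.releases[self.active_index]

    def deploy(self, release: Release) -> None:
        self.releases.append(release)
        self.active_index = len(self.releases) - 1

    def rollback(self) -> Release:
        """Point back at the previous release without deleting anything."""
        if self.active_index < 1:
            raise RuntimeError("no earlier release to roll back to")
        self.active_index -= 1
        return self.active

d = Deployment()
d.deploy(Release("v1", "Extract the invoice total.", "model-a", "schema-1"))
d.deploy(Release("v2", "Extract the invoice's total amount.", "model-b", "schema-2"))
print(d.rollback().version)  # v1
print(len(d.releases))       # 2
```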

Where Logic Fits

Logic handles the infrastructure layer so engineering teams can focus on defining what the invoice processing agent should do rather than building the machinery around it. Engineers write a natural language spec describing extraction behavior: which fields to pull, how to handle missing data, what validation rules apply. Logic turns that spec into a production-ready agent with typed REST APIs, auto-generated tests, version control, and execution logging. You can prototype in 15-30 minutes and ship to production the same day. Behind that speed, 25+ processes execute automatically: research, validation, schema generation, test creation, and model routing optimization.

The spec can be as detailed or concise as the use case requires: a 24-page document with prescriptive input/output/processing guidelines, or a 3-line description. Logic determines what it needs to create the agent either way. When requirements change, updating the spec updates agent behavior instantly.

Here's what that includes:

Typed APIs with contract protection. Logic auto-generates JSON schemas from your agent spec with strict input/output validation and detailed field descriptions. Spec changes that update decision rules or extraction behavior apply immediately without touching the API schema. Schema-breaking changes like new required inputs or modified output structure require explicit engineering approval before taking effect. Domain experts on the finance or ops team can refine extraction rules weekly; your integrations stay stable because the API contract is protected by default.
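Whether a contract change is breaking can be decided mechanically: a newly required input or a removed or retyped output breaks existing callers, while new optional fields do not. A generic sketch, not Logic's implementation, using a simplified contract format invented for illustration:

```python
def breaking_changes(old: dict, new: dict) -> list[str]:
    """List contract changes that could break existing callers.

    Contracts use a simplified, hypothetical shape:
    {"inputs":  {name: {"type": str, "required": bool}},
     "outputs": {name: {"type": str}}}
    """
    problems = []
    # A required input that callers were not sending before breaks them.
    for name, spec in new["inputs"].items():
        old_spec = old["inputs"].get(name)
        if spec["required"] and (old_spec is None or not old_spec["required"]):
            problems.append(f"input '{name}' is newly required")
    # Removing or retyping an output breaks callers that read it.
    for name, spec in old["outputs"].items():
        new_spec = new["outputs"].get(name)
        if new_spec is None:
            problems.append(f"output '{name}' was removed")
        elif new_spec["type"] != spec["type"]:
            problems.append(f"output '{name}' changed type: {spec['type']} -> {new_spec['type']}")
    return problems

old = {
    "inputs": {"document": {"type": "string", "required": True}},
    "outputs": {"total": {"type": "string"}, "vendor": {"type": "string"}},
}
new = {
    "inputs": {
        "document": {"type": "string", "required": True},
        "currency": {"type": "string", "required": True},
    },
    "outputs": {
        "total": {"type": "number"},
        "vendor": {"type": "string"},
        "due_date": {"type": "string"},  # added output: not breaking
    },
}
print(breaking_changes(old, new))
# ["input 'currency' is newly required", "output 'total' changed type: string -> number"]
```

Changes that produce an empty list could apply immediately; anything else would wait for explicit approval.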

Scenario-based test generation. Logic automatically generates 10 test scenarios based on your agent spec, covering typical invoice formats and edge cases: conflicting line item data, ambiguous tax fields, multi-page documents with boundary conditions. Each test receives a Pass, Fail, or Uncertain status. When tests fail, Logic provides side-by-side diffs showing expected versus actual output with clear failure summaries. Teams can add custom test cases for specific vendor formats or promote any historical execution into a permanent test case with one click.

{{ LOGIC_WORKFLOW: extract-structured-resume-application-data | Extract and transform structured application data }}

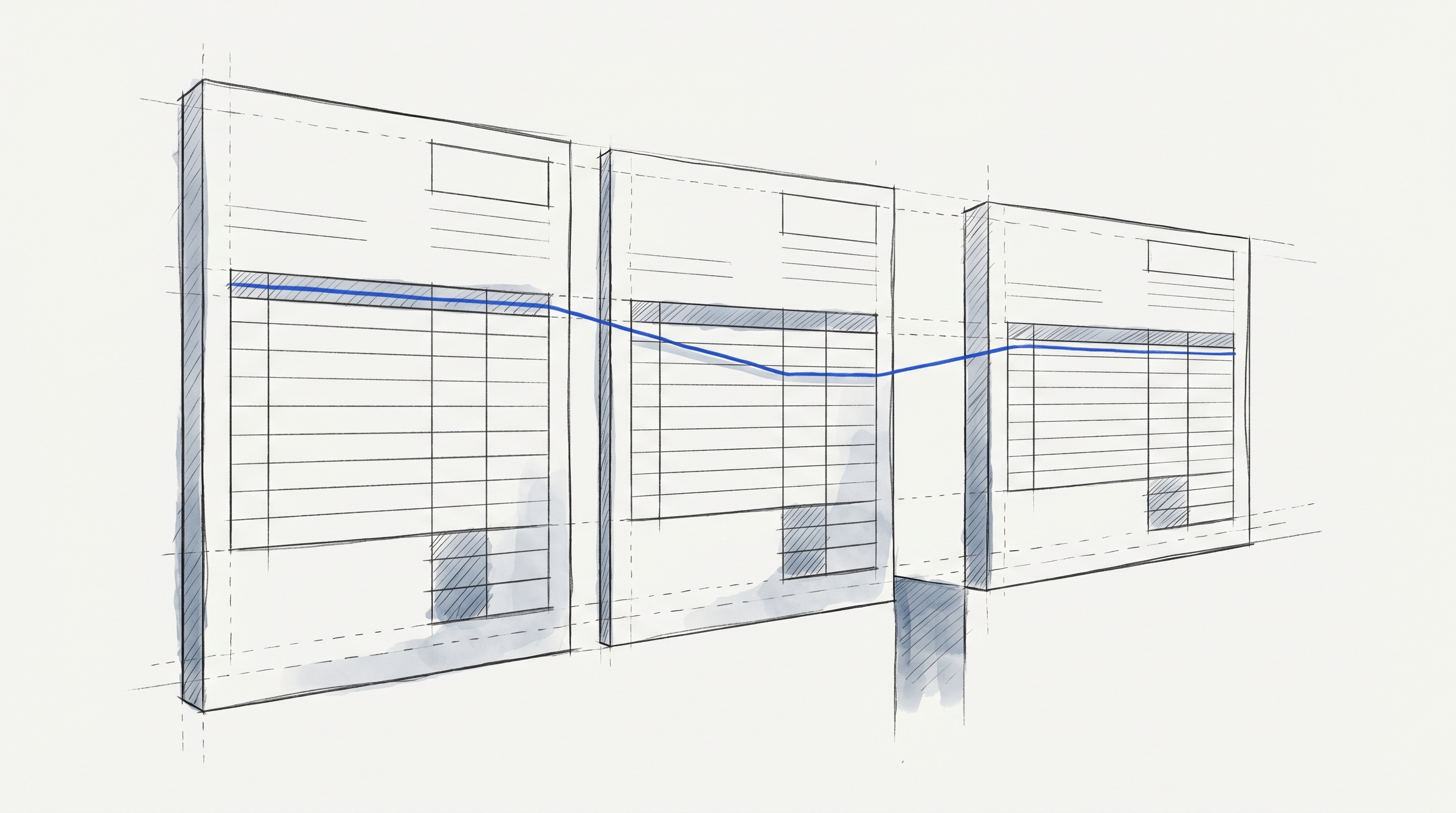

Immutable version control with instant rollback. Every spec version is frozen once created. Teams can pin agents to specific versions for production stability, compare changes across versions, and roll back with a single action. The full audit trail supports the compliance requirements that invoice processing systems typically face.

Model orchestration across providers. Logic automatically routes requests across GPT, Claude, Gemini, and Perplexity based on task type, complexity, and cost. Engineers can inspect which model was used for any execution via execution logs without managing model selection or handling provider-specific quirks like different structured output implementations. For teams with compliance requirements, the Model Override API locks specific agents to specific models to support regulated workflows.

Native document processing. Logic handles PDF extraction natively, managing text extraction, font encoding, and layout parsing without external libraries like PyMuPDF or pdfplumber. Upload invoices directly; Logic manages the preprocessing layer that typically requires separate infrastructure.

Full execution logging. Every agent execution is logged with inputs, outputs, and decisions made. Teams can inspect any historical extraction, review the recorded decisions for each output, and promote any execution into a permanent test case directly. This provides the audit trail needed for financial document processing, without building a custom observability layer.

The real alternative to Logic is building all of this in-house. That means prompt management, a testing harness, versioning tied to evaluation, model routing, deployment pipelines, and document preprocessing, all architected to work together. Logic handles that production infrastructure so engineers ship AI invoice processing agents without adding infrastructure debt to the codebase.

AI Invoice Processing in Practice

DroneSense, which manages compliance documentation for drone operations teams, reduced document processing time from 30+ minutes to 2 minutes after deploying Logic agents. With Logic, no custom ML pipelines or model training were required. The ops team refocused on essential work instead of document processing.

Whether AI invoice processing is a customer-facing product feature (document extraction embedded in a SaaS product where users upload files) or an internal operation (purchase order processing that accelerates back-office workflows), the infrastructure requirements are the same. In both cases, engineers deploy and maintain the agents. The difference is who benefits from the output.

After engineers deploy agents, domain experts can update extraction rules if you choose to let them. The ops team refines validation rules, the finance team adjusts field mappings for new vendor formats, the compliance team updates classification criteria. Every change is versioned and testable with guardrails you define. Failed tests flag regressions but don't block deployment; your team decides whether to act on them or ship anyway.

When to Build, When to Offload

Building LLM infrastructure yourself makes sense when AI processing is central to what you sell: if extraction quality is what differentiates your product, owning the infrastructure lets you optimize in ways a general-purpose platform won't. Some compliance contexts also mandate that processing happens entirely within your own infrastructure.

For most teams, AI invoice processing enables something else. It feeds accounting workflows, accelerates AP operations, or reduces manual review costs. When AI is a means to an end, infrastructure investment competes with features that directly differentiate your product. Teams that experiment with tools like LangChain or similar frameworks still end up building testing, versioning, deployment controls, and validation around provider-level structured outputs themselves.

Cloud AI services like Amazon Bedrock or Google Vertex AI provide raw model access but leave the production infrastructure layer as an exercise for the reader.

Logic processes 250,000+ jobs monthly with 99.999% uptime over the last 90 days. Teams can validate a proof of concept in minutes and ship to production the same day. That's the difference between spending engineering cycles on undifferentiated infrastructure and spending them on the product your customers actually pay for. Start building with Logic.

Frequently Asked Questions

What types of invoices can LLM-based agents process?

Logic agents process PDFs, scanned documents, and structured data files. The spec-driven approach lets the agent adapt to different vendor formats without per-template engineering, and Logic handles text extraction, font encoding, and layout parsing internally. That makes it practical to process common invoice variations, including multi-page documents and line item tables, through the same typed API rather than maintaining separate preprocessing infrastructure.

How does AI invoice processing handle fields that don't exist in every document?

Logic uses typed input and output schemas that account for missing data instead of inventing plausible values. If a spec marks a field as optional, the agent returns structured JSON that reflects the gap. Teams can then validate that behavior with auto-generated tests covering cases where fields are present, absent, or ambiguous before spec changes reach production.

Does Logic replace the accounting or ERP system?

No. Logic extracts and structures invoice data, then exposes it through standard REST API endpoints that integrate with existing ERP platforms, AP workflows, or accounting systems. Logic handles the extraction and validation layer, while the downstream system remains the source of record for posting, reconciliation, and the broader financial workflow.

How do teams validate extraction accuracy for financial data?

Logic auto-generates scenario-based tests for edge cases such as conflicting line items and multi-page boundary conditions. Teams can add vendor-specific test cases or promote real executions into permanent tests with one click. Execution logging then provides visibility into inputs, outputs, and decisions made. Teams get a practical workflow for spotting regressions before they affect financial processes.

What happens when invoice formats change or new vendors are added?

Teams update the agent spec to reflect the new fields, formats, or rules. Logic applies the updated extraction rules while keeping the API contract stable by default. Because each version is immutable, teams can compare versions, test changes, and roll back instantly if a new rule introduces regressions. Domain experts can also update rules when engineering allows.