AI Document Classification: The First Step in Your Document Pipeline

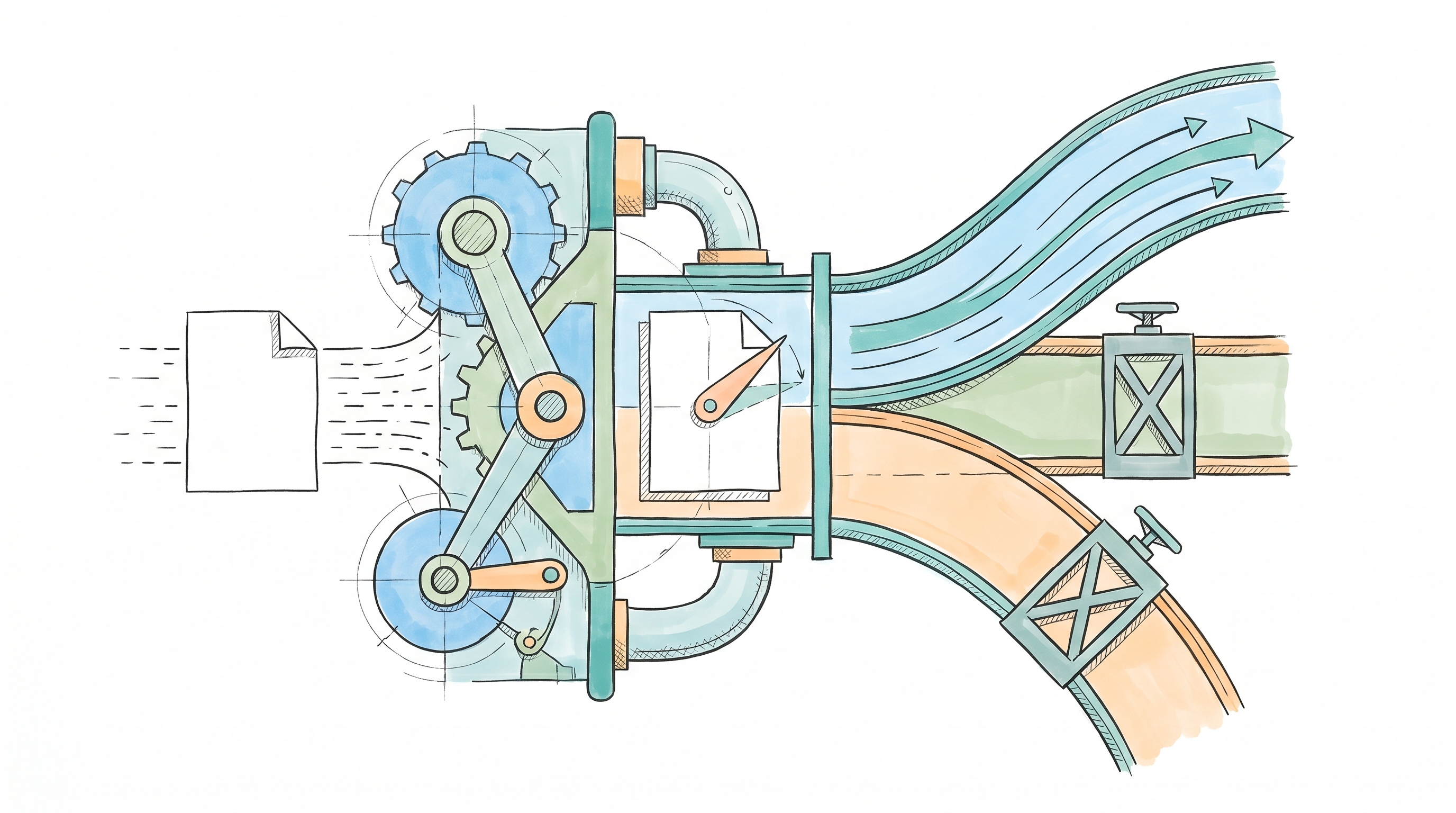

Every document processing pipeline starts with the same architectural decision: what type of document is this? Classification assigns the label that selects the extraction schema, the validation rules, and the routing rules for everything downstream. The doc_type variable parameterizes your entire pipeline. Get it right, and extraction, validation, and routing all operate on correct assumptions. Get it wrong, and the pipeline completes successfully, returns a well-formed JSON response, and emits data that is structurally valid but semantically wrong.

The challenge is that LLM-based classification introduces failure modes that didn't exist with rule-based or traditional ML classifiers. Non-determinism means the same document can receive different labels across runs. Hallucination means the model can generate categories that don't exist in your taxonomy. And because these failures produce clean HTTP 200 responses rather than thrown exceptions, they're invisible without purpose-built monitoring infrastructure. The gap between "classification works in testing" and "classification works in production" is where engineering teams lose time they didn't budget for.

Classification Configures Everything Downstream

AI document classification is the step that configures every subsequent step in your pipeline. A typical document processing architecture includes stages such as data capture, classification, extraction, enrichment, validation, and consumption. Classification sits near the front of that sequence because its output is the parameter passed into every value-generating stage that follows.

That dependency is visible in code:

classification = classify_document(document_bytes, mime_type)

doc_type = classification["document_type"]

extracted = extract_document_data(document_bytes, mime_type, doc_type)

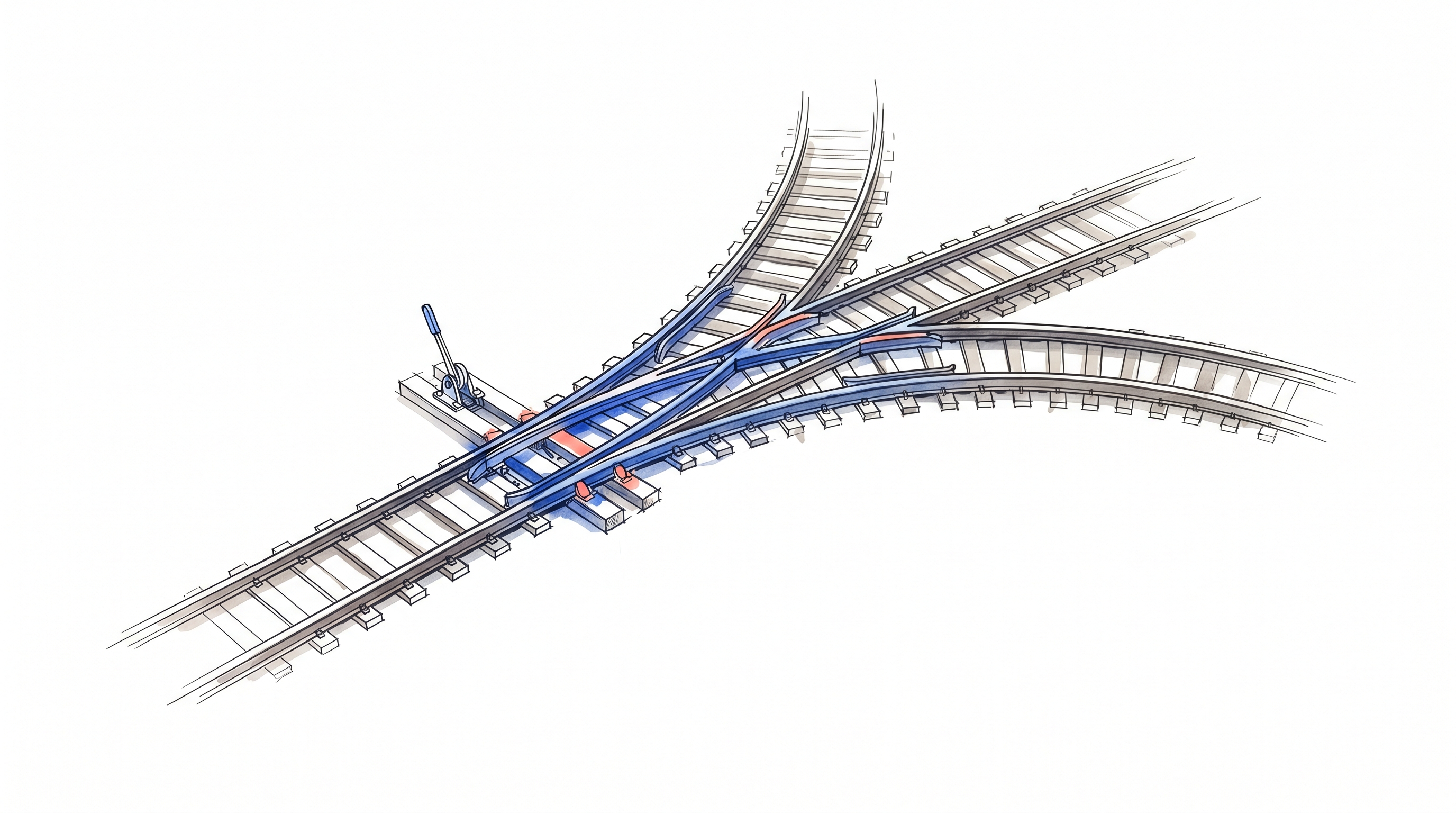

validation = validate_extraction(extracted, doc_type)The doc_type variable, assigned once at classification, threads through extraction and validation. The extraction schema, prompt template, and validation rules are all selected based on this single value. There is no re-classification gate after extraction. When classification is wrong, the pipeline runs to completion with wrong output rather than failing early with an observable error signal.

This creates three distinct failure modes that compound in production:

Wrong schema on wrong document type. A production pipeline post-mortem documented cases where documents sharing similar vocabulary get misclassified: hospital attestations classified as emergency invoices because both contain medical and financial terminology. The invoice schema then extracts line items, vendor names, and tax amounts from a document that doesn't contain them.

Schema-valid output with wrong data. The pipeline returns HTTP 200 and a well-formed JSON object. Every technical signal says success. The data is wrong. OCR failures produce garbled output that is visibly incorrect, but an LLM pipeline on a misclassified document produces clean, schema-conformant output that passes downstream validation.

Multi-document uploads breaking single-type assumptions. Users upload concatenated documents: a prescription, an invoice, and a payment receipt in a single PDF. A single-type classifier assigns one document type to content requiring three different schemas.
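A minimal sketch of one mitigation, assuming you can obtain a label per page (for example by classifying each page separately, which is an assumption, not something the pipeline above does): group consecutive pages that share a label into segments and give each segment its own doc_type. The labels below are hypothetical.

```python
from dataclasses import dataclass
from itertools import groupby
from typing import List


@dataclass
class Segment:
    doc_type: str
    first_page: int  # 0-based, inclusive
    last_page: int   # 0-based, inclusive


def segment_pages(page_labels: List[str]) -> List[Segment]:
    """Group consecutive pages that share a label into one segment."""
    segments: List[Segment] = []
    page = 0
    for label, run in groupby(page_labels):
        length = sum(1 for _ in run)
        segments.append(Segment(label, page, page + length - 1))
        page += length
    return segments


# Hypothetical per-page labels for a prescription + invoice + receipt upload.
labels = ["prescription", "invoice", "invoice", "payment_receipt"]
segments = segment_pages(labels)
```

Each segment can then go through extraction and validation with its own doc_type. Page-level labels are noisy too, so a single stray label inside a longer run is worth flagging for review rather than trusting as a new document.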

These failure modes are why teams building classification pipelines invest in typed schemas and automated testing from day one, rather than discovering these issues in production.

What LLMs Change About Classification

LLM-based AI document classification can substantially reduce labeled-data requirements compared with some traditional ML approaches, but it does not eliminate the need for labeled data altogether. A new document type can be prototyped without a training data collection cycle. Cross-domain generalization can work without retraining, though performance is often weaker than with in-domain supervised fine-tuning. These are capabilities that rule-based systems and some BERT-class encoders may struggle to match consistently, especially across domains or with minimal labeled data.

But LLMs also introduce production challenges that didn't exist before, and each one requires infrastructure to address.

Non-determinism breaks the testing contract. Rule-based systems are fully deterministic: same input, same output, every time. An answer-set consistency benchmark found pervasive inconsistency across models even in cases where the LLM can identify the correct answer. Engineers have found that setting temperature=0 is insufficient for reproducibility; underlying model computations and serving behavior can still break reproducibility regardless of parameter configuration.
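Since temperature=0 does not guarantee identical outputs, one pragmatic check is to classify the same document several times and measure how often the runs agree. The sketch assumes `classify` is any callable wrapping your model client (a hypothetical stand-in, not a specific SDK), and the 0.8 threshold is an arbitrary example value.

```python
from collections import Counter
from itertools import cycle
from typing import Callable, Tuple

AGREEMENT_THRESHOLD = 0.8  # hypothetical cut-off; tune it on your own evaluation data


def label_agreement(classify: Callable[[bytes], str], document: bytes, runs: int = 5) -> Tuple[str, float]:
    """Classify one document `runs` times; return the majority label and its share of runs."""
    labels = [classify(document) for _ in range(runs)]
    label, count = Counter(labels).most_common(1)[0]
    return label, count / runs


# Usage with a fake classifier that cycles through answers to mimic non-determinism.
_answers = cycle(["invoice", "invoice", "receipt"])


def flaky_classifier(_document: bytes) -> str:
    return next(_answers)


label, agreement = label_agreement(flaky_classifier, b"example document bytes", runs=6)
unstable = agreement < AGREEMENT_THRESHOLD  # 4 of 6 runs agree, so this document is flagged
```

Repeated calls multiply cost, so this check fits evaluation runs or low-volume spot checks better than every production request.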

Hallucination inflates your taxonomy. When classifying against a fixed set of categories, LLMs can generate labels that don't exist in your taxonomy. A comparative LLM classification study reported a consistent performance plateau across architectures, parameter counts, and model generations under zero-shot conditions, so switching to a larger or newer model is not a dependable fix. If your classification agents run in production, output validation layers that check returned labels against the target taxonomy become mandatory infrastructure.
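A minimal sketch of such an output validation layer, assuming the model returns a bare label string. The taxonomy is hypothetical. An answer outside it is not coerced to the nearest category; it comes back as invalid, with the raw text kept, so the caller can route it to a review queue instead of extraction.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical taxonomy; replace with your own document types.
TAXONOMY = frozenset({"invoice", "payment_receipt", "prescription", "hospital_attestation"})


@dataclass(frozen=True)
class LabelCheck:
    label: Optional[str]  # None when the model answered outside the taxonomy
    raw: str              # the model's raw answer, kept for logging and review
    valid: bool


def check_label(raw_answer: str) -> LabelCheck:
    """Accept only labels that exist in the taxonomy; never guess the closest one."""
    candidate = raw_answer.strip()
    if candidate in TAXONOMY:
        return LabelCheck(label=candidate, raw=raw_answer, valid=True)
    return LabelCheck(label=None, raw=raw_answer, valid=False)


result = check_label("emergency_invoice")  # invented category: rejected, goes to review
```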

Semantic bleed across domains. Documents containing vocabulary from multiple domains get misclassified because models latch onto surface-level terminology rather than document intent. A document classification discussion documented that language models misclassify insurance documents when keywords like "health" and "life" create ambiguity between financial and medical contexts. For production classification, especially with smaller models, structured annotation research recommends explicit, structured prompting over open-ended prompts.
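One way to make the prompt explicit and structured is to enumerate the allowed categories with decision criteria that describe what each document is for, and to pin the answer format. The categories and wording below are hypothetical, and the function only builds the prompt text; sending it to a model is left to whichever client you use.

```python
# Hypothetical categories with decision criteria. Criteria describe what the
# document is for, so shared vocabulary ("health", "life") is not enough to decide.
CATEGORIES = {
    "invoice": "Requests payment: states an amount due, a vendor, and line items.",
    "hospital_attestation": "Certifies a hospital stay or treatment; requests no payment.",
    "health_insurance_claim": "Asks an insurer to reimburse medical costs under a policy.",
    "life_insurance_policy": "Defines coverage paid out on the death of the insured.",
}

FALLBACK_LABEL = "unclassifiable"


def build_classification_prompt(document_text: str) -> str:
    """Assemble a structured prompt: closed label set, criteria, strict answer format."""
    lines = [
        "Classify the document into exactly one of the categories below.",
        "Decide by the document's purpose, not by keywords it shares with other categories.",
        "",
        "Categories:",
    ]
    lines += [f"- {name}: {criterion}" for name, criterion in CATEGORIES.items()]
    lines += [
        f"- {FALLBACK_LABEL}: none of the categories above clearly applies.",
        "",
        "Answer with the category name only, exactly as written above.",
        "",
        "Document:",
        document_text,
    ]
    return "\n".join(lines)
```

The explicit fallback label gives the model a legitimate way to decline, which is easier to route than a plausible-looking wrong category. It still needs an output check against the allowed labels.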

Prompt brittleness produces format inconsistencies. LLMs can return "Smart televisions" in one run and "Smart TVs" in the next, creating duplicate categories in downstream systems. This is a structural characteristic of probabilistic text generation that requires explicit handling.
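Format drift can be handled by normalizing every returned label to a canonical form before it reaches downstream systems. A minimal sketch, assuming a hand-maintained alias table (the entries are illustrative): comparisons ignore case and punctuation, and unknown variants return None so they surface for review rather than silently becoming a new category.

```python
import re
from typing import Optional


def _key(text: str) -> str:
    """Lowercase and collapse runs of non-alphanumerics so trivial variants compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


# Hypothetical alias table: canonical label -> variants the model has been seen to emit.
ALIASES = {
    "Smart TVs": ["Smart TVs", "Smart televisions", "Smart TV"],
    "Laptops": ["Laptops", "Laptop computers", "Notebooks"],
}

# Lookup from normalized variant to canonical label, built once at import time.
CANONICAL = {_key(variant): label for label, variants in ALIASES.items() for variant in variants}


def normalize_label(raw: str) -> Optional[str]:
    """Map a model-returned label to its canonical form, or None if it is unknown."""
    return CANONICAL.get(_key(raw))
```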

The Infrastructure Work Teams Don't Budget For

The production challenges above aren't theoretical. Each one demands a specific infrastructure response, and the total engineering investment for AI document classification consistently exceeds initial estimates. Structured outputs are available at the model API level, so schema conformance itself is a solved problem. The harder work is semantic validation: confirming that each field's value is within acceptable bounds for the document type, building structured failure paths for unclassifiable documents, and integrating all of that with the rest of the pipeline. That validation layer sits on top of three larger infrastructure concerns.
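A minimal sketch of a semantic validation layer, assuming extraction has already returned a schema-valid dict. Rules are keyed by document type and check things a type-only schema typically doesn't express, such as plausible ranges or dates that can't be in the future. The field names, bounds, and currency list are hypothetical. A document type with no rules fails closed instead of passing by default.

```python
from datetime import date
from typing import Any, Callable, Dict, List

Rule = Callable[[Any], bool]

# Hypothetical per-type rules: field name -> predicate the value must satisfy.
RULES: Dict[str, Dict[str, Rule]] = {
    "invoice": {
        "total_amount": lambda v: isinstance(v, (int, float)) and 0 < v < 1_000_000,
        "currency": lambda v: v in {"USD", "EUR", "GBP"},
        "issue_date": lambda v: isinstance(v, date) and v <= date.today(),
    },
}


def semantic_errors(doc_type: str, fields: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the extraction passed."""
    rules = RULES.get(doc_type)
    if rules is None:
        # Fail closed: an unknown type is an error, not a silent pass.
        return [f"no validation rules for document type {doc_type!r}"]
    errors = []
    for name, is_valid in rules.items():
        if name not in fields:
            errors.append(f"missing field {name!r}")
        elif not is_valid(fields[name]):
            errors.append(f"{name!r} out of bounds: {fields[name]!r}")
    return errors
```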

Prompt versioning and management. Minor prompt changes produce outsized accuracy shifts. If your pipeline relies on LLM classification, four distinct artifact categories must be versioned simultaneously: prompt templates, tool manifests, LLM configuration parameters, and associated resources. Changing one without versioning the others creates silent failures. And because LLM API providers can update model behavior without any code change on your side, classification pipelines are vulnerable to provider-side changes that break behavior without anyone deploying anything.
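One lightweight way to keep the four artifact categories in lockstep is to treat them as a single release object and derive its version identifier from a hash of all four, so changing any one of them produces a new version. A sketch with a hypothetical `ClassifierRelease` structure and illustrative contents:

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ClassifierRelease:
    """One versioned unit: all four artifact categories change together or not at all."""
    prompt_template: str
    tool_manifest: dict
    llm_config: dict   # e.g. model name, temperature, max tokens
    resources: dict    # e.g. few-shot example IDs, taxonomy file hash

    def version_id(self) -> str:
        """Content hash over every artifact, so any change yields a new version id."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


release = ClassifierRelease(
    prompt_template="Classify the document: {document}",
    tool_manifest={"tools": []},
    llm_config={"model": "example-model", "temperature": 0},
    resources={"few_shot_ids": ["ex-001", "ex-002"]},
)
```

A content hash only detects changes you make. It cannot detect a provider changing model behavior behind the same model name, which is why regression testing against a fixed evaluation set still matters.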

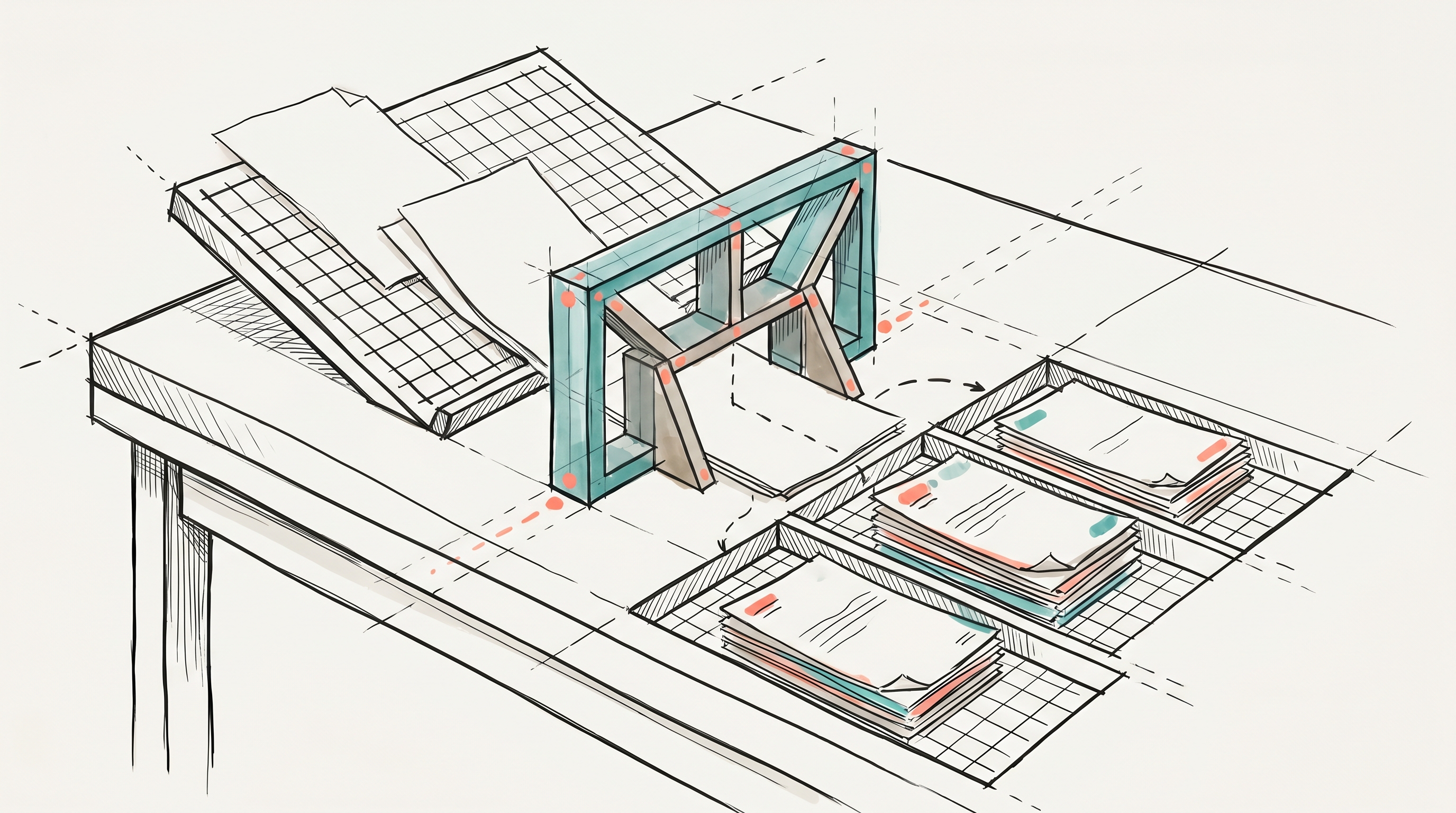

Evaluation and testing. LLM classification evaluation operates across two phases: pre-production regression testing across model versions and prompt revisions, and post-deployment monitoring for accuracy drift, cost, latency, and failure clusters. These phases feed each other; post-deployment monitoring generates inputs for the next regression cycle. Most accuracy degradation hides in the gap between evaluation test sets (clean, single-page, well-formatted documents) and production documents (multi-page, inconsistent formats, classification signals buried in the middle).
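A minimal sketch of the pre-production half: run the baseline and candidate classifiers over the same labeled set, gate on aggregate accuracy, and list the individual documents whose labels changed, since a flat accuracy number can hide labels swapping in both directions. Classifiers are modeled as plain callables, and the 0.01 tolerance is a hypothetical default.

```python
from typing import Callable, List, Tuple

Classifier = Callable[[str], str]
LabeledSet = List[Tuple[str, str]]  # (document_text, expected_label)


def accuracy(classify: Classifier, labeled: LabeledSet) -> float:
    """Share of labeled documents the classifier gets right."""
    correct = sum(1 for text, expected in labeled if classify(text) == expected)
    return correct / len(labeled)


def changed_labels(baseline: Classifier, candidate: Classifier, labeled: LabeledSet) -> List[Tuple[str, str, str]]:
    """Documents whose label differs between versions: (text, baseline_label, candidate_label)."""
    changes = []
    for text, _expected in labeled:
        old, new = baseline(text), candidate(text)
        if old != new:
            changes.append((text, old, new))
    return changes


def regression_gate(baseline: Classifier, candidate: Classifier, labeled: LabeledSet, tolerance: float = 0.01) -> bool:
    """Pass only if the candidate's accuracy is within `tolerance` of the baseline or better."""
    return accuracy(candidate, labeled) >= accuracy(baseline, labeled) - tolerance
```

This sketch calls each classifier more than once per document; with a real, non-deterministic model you would record each version's outputs once per run and compare the recorded labels.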

New document type onboarding. Each additional document type requires a new schema definition, new few-shot examples, updated validation rules, evaluation dataset expansion, and prompt regression testing on top of the prompt itself. The marginal cost is multiplicative in infrastructure surface area, not additive.
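To keep those onboarding steps from being skipped, the list can be made an explicit gate: a new type is enabled only once every artifact exists. A sketch with a hypothetical `DocTypeSpec` structure; the field names mirror the list above and are not any real library's API.

```python
from dataclasses import dataclass, field, fields
from typing import List


@dataclass
class DocTypeSpec:
    """Everything a new document type needs before it can be enabled (hypothetical)."""
    name: str
    output_schema: dict = field(default_factory=dict)
    few_shot_examples: list = field(default_factory=list)
    validation_rules: dict = field(default_factory=dict)
    eval_cases: list = field(default_factory=list)
    regression_passed: bool = False


def missing_artifacts(spec: DocTypeSpec) -> List[str]:
    """List onboarding artifacts that are still empty or unfinished, in declaration order."""
    return [f.name for f in fields(spec) if f.name != "name" and not getattr(spec, f.name)]


draft = DocTypeSpec("purchase_order", output_schema={"type": "object"})
```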

Teams evaluating LangChain and its alternatives discover that these frameworks provide orchestration primitives but leave prompt management, testing infrastructure, version control, error handling, and deployment pipelines as engineering exercises. Cloud services like Amazon Bedrock or Google Vertex AI provide model access along with some built-in capabilities: Bedrock offers versioning for flows and agents, and Vertex AI includes evaluation and experiment tracking. Engineers still build the testing, deployment, and monitoring layers themselves.


Spec-Driven Classification with Logic

The usual alternative to a managed platform is building all of this infrastructure in-house, and most teams underestimate that work significantly. What starts as a short project often stretches well beyond initial estimates as engineers build testing harnesses, versioning systems, and deployment pipelines. Logic handles the entire infrastructure layer so engineers focus on defining classification behavior, not building the plumbing around it.

Logic transforms a natural language spec into a production-ready classification agent with a typed REST API. You describe the classification categories, the decision criteria, and the expected output structure. When you create an agent, 25+ processes execute automatically: research, validation, schema generation, test creation, and model routing optimization. The result is production-ready infrastructure in minutes instead of weeks.

For AI document classification specifically, several capabilities matter:

Typed APIs enforce taxonomy adherence. Logic generates typed JSON schema outputs from your agent spec with strict input/output validation enforced on every request. Your classification categories are defined in the schema; the agent's output always strictly matches it. This addresses the hallucination and category inflation problem at the infrastructure level rather than requiring a custom validation layer.

Auto-generated tests cover edge cases that teams won't anticipate. Logic generates test scenarios automatically based on your spec. Tests include multi-dimensional scenarios with realistic data combinations, conflicting inputs, ambiguous contexts, and boundary conditions. Each test receives one of three statuses: Pass, Fail, or Uncertain. When tests fail, Logic provides side-by-side comparison with clear failure summaries identifying specific fields that didn't match. Teams can also promote any historical execution into a permanent test case with one click.

Version control with rollback. Every published agent version is immutable and frozen once created. When classification criteria change, a new version is created, compared against the previous one, and deployed with one-click rollback available. This directly addresses the prompt versioning challenge: classification behavior, schema, and test results are all versioned together.

Intelligent model orchestration. Logic automatically routes agent requests across GPT, Claude, Gemini, and Perplexity based on task type, complexity, and cost. Engineers don't manage model selection or handle provider-specific quirks, which reduces model-management overhead and provider-specific integration work for classification agents.

Native document processing. Logic handles document parsing natively, including text extraction, font encoding, and layout parsing. Classification agents receive parsed document content without a separate preprocessing layer.

Full execution logging. Every classification decision is logged with full input/output pairs, latency, model used, and cost. Teams query execution history to identify classification patterns, debug edge cases, and build regression test suites from real production data.

Your API contract is protected by default. When classification rules change, spec updates modify agent behavior without touching the API schema. Your input fields, output structure, and endpoint signatures remain stable. Schema-breaking changes require explicit confirmation before taking effect.

AI Document Classification in Production: DroneSense

DroneSense processes operational documents for public safety agencies and reduced document processing time from 30+ minutes to 2 minutes per document: a 93% reduction. No custom ML pipelines or model training required. The ops team refocused on mission-critical work instead of manual document handling.

After engineers deploy classification agents, domain experts can update rules if you choose to let them. The compliance team refines classification criteria; the ops team adjusts extraction rules. None of that consumes engineering cycles for routine updates. Every change is versioned and testable, with the guardrails you define preventing accidental integration breakage. Failed tests flag regressions but don't block deployment; your team decides whether to act on them or ship anyway.

Own the Classification Rules, Offload the Infrastructure

The own-vs-offload decision for document classification mirrors choices engineers make every day: run a Postgres instance or use a managed database, build payment processing or integrate Stripe. Owning LLM classification infrastructure makes sense when classification accuracy is the core product and competitive advantage. For most teams, classification enables something else: document extraction that feeds workflows, compliance routing that satisfies auditors, support ticket categorization that improves response times.

When AI document classification is a means to an end, owning the infrastructure competes with features that directly differentiate the product. Logic gives teams production classification APIs with typed outputs, auto-generated tests, version control, and execution logging: the infrastructure layer that can require substantial engineering time to build and ongoing engineering capacity to maintain. Logic processes 250,000+ jobs monthly with 99.999% uptime over the last 90 days.

The classification agent shipped on day one uses the same production infrastructure, whether it serves customers or internal teams. Engineers own the implementation and deployment in both cases. Logic handles the undifferentiated infrastructure work. Start building with Logic.

Frequently Asked Questions

What should teams implement first when deploying AI document classification?

Start with the pieces that prevent silent failure. Define the taxonomy and output structure first, then enforce them with typed schemas and validation. Next, add test coverage for ambiguous documents, conflicting inputs, and boundary conditions. Finally, enable versioning and execution logging before broad rollout. That order protects the API contract, exposes regressions earlier, and makes later prompt or model changes easier to compare and reverse.

Which metrics should teams monitor first after launch?

The earliest monitoring should focus on failure patterns that stay hidden behind successful responses. Track execution history for classification labels, latency, cost, and clusters of failures or ambiguous results. Review cases where output is schema-valid but semantically wrong, since those are the most dangerous production failures. Post-deployment monitoring should also feed the next regression cycle so production edge cases become permanent tests instead of recurring surprises.
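A minimal sketch of that first-pass monitoring, assuming each classification call is logged as a record with label, latency, cost, and a flag set by your own validation checks. The record shape is hypothetical. The per-label flag rate is the part most likely to point at clusters of silent failures.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import quantiles
from typing import Any, Dict, List


@dataclass
class ExecutionRecord:
    """One logged classification call (hypothetical log shape)."""
    label: str
    latency_ms: float
    cost_usd: float
    flagged: bool  # set by your own checks, e.g. failed taxonomy or semantic validation


def summarize(records: List[ExecutionRecord]) -> Dict[str, Any]:
    """Summarize a window of executions: label mix, p95 latency, total cost, flag rate per label."""
    if not records:
        return {}
    counts = Counter(r.label for r in records)
    flagged = Counter(r.label for r in records if r.flagged)
    latencies = [r.latency_ms for r in records]
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(latencies, n=20, method="inclusive")[-1] if len(latencies) > 1 else latencies[0]
    return {
        "label_counts": dict(counts),
        "p95_latency_ms": p95,
        "total_cost_usd": round(sum(r.cost_usd for r in records), 6),
        "flag_rate_by_label": {label: flagged[label] / n for label, n in counts.items()},
    }
```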

How should teams handle PDFs that contain multiple document types?

Treat mixed-document PDFs as a structural risk, not as a normal single-label case. A single PDF can contain a prescription, an invoice, and a payment receipt, each requiring a different schema. Operationally, teams should test for concatenated uploads early, create failure paths for unclassifiable documents, and avoid assuming one doc_type can safely configure extraction and validation for the entire file.

What taxonomy mistakes create the most classification instability?

The most dangerous taxonomy problems are categories that overlap semantically or invite inconsistent wording. Documents sharing medical and financial vocabulary can trigger the wrong label, and prompt brittleness can produce near-duplicate categories such as alternate naming formats. Teams should keep categories explicit, validate outputs against the allowed taxonomy, and test ambiguous cross-domain cases before adding new document types to production.

How can domain experts update rules without increasing integration risk?

Safe rule updates depend on separation between behavior changes and schema changes. Domain experts can refine classification criteria or extraction rules when engineering teams allow it, but every change should remain versioned and testable. API stability improves when schema-breaking changes require explicit confirmation rather than shipping automatically. That workflow lets teams update business logic frequently while preserving stable endpoints, input fields, and output structures for downstream systems.